Image annotation outsourcing involves hiring specialized companies to label images for AI and machine learning projects, providing cost savings, scalability, and access to expert teams. Outsourcing can deliver faster turnaround times and higher-quality annotations, and it allows internal teams to focus on core AI development rather than time-intensive labeling work.

Machine learning models don't understand images the way humans do. To a computer vision system, an image is just a grid of pixels with numerical values. The bridge between raw pixels and real-world understanding starts with annotation—the process of labeling images so algorithms can learn to detect, classify, and interpret visual content.

But here's the thing: annotation is labor-intensive. A single autonomous driving dataset might require millions of labeled frames. A medical imaging project needs pixel-perfect segmentation. An e-commerce visual search platform demands consistent product tagging across thousands of categories.

That's where outsourcing comes in. According to industry research, the global data collection and labeling market was valued at $2.22 billion in 2022 and is expected to expand at a compound annual growth rate of 28.9% from 2023 to 2030, driven by AI adoption across industries. Organizations are increasingly turning to specialized annotation providers rather than building in-house teams.

This guide walks through everything needed to outsource image annotation successfully—from understanding the basics to selecting the right partner and managing quality control.

What Image Annotation Actually Means

Image annotation is the process of adding metadata to images to make them comprehensible for AI systems. These labels identify objects, regions, shapes, boundaries, or attributes within an image and translate visual content into machine-readable data.

The annotation process supplies training data for computer vision models. Each labeled image teaches the algorithm what to look for, helping it recognize patterns and make accurate predictions on new, unseen data.

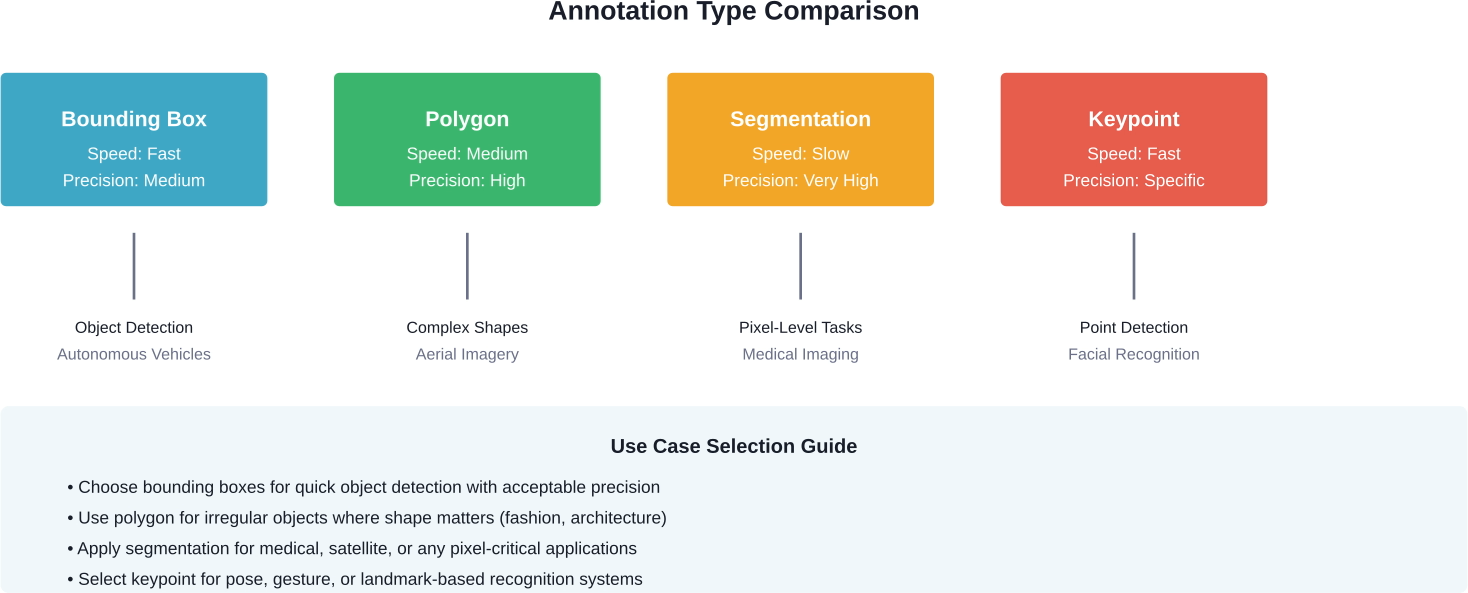

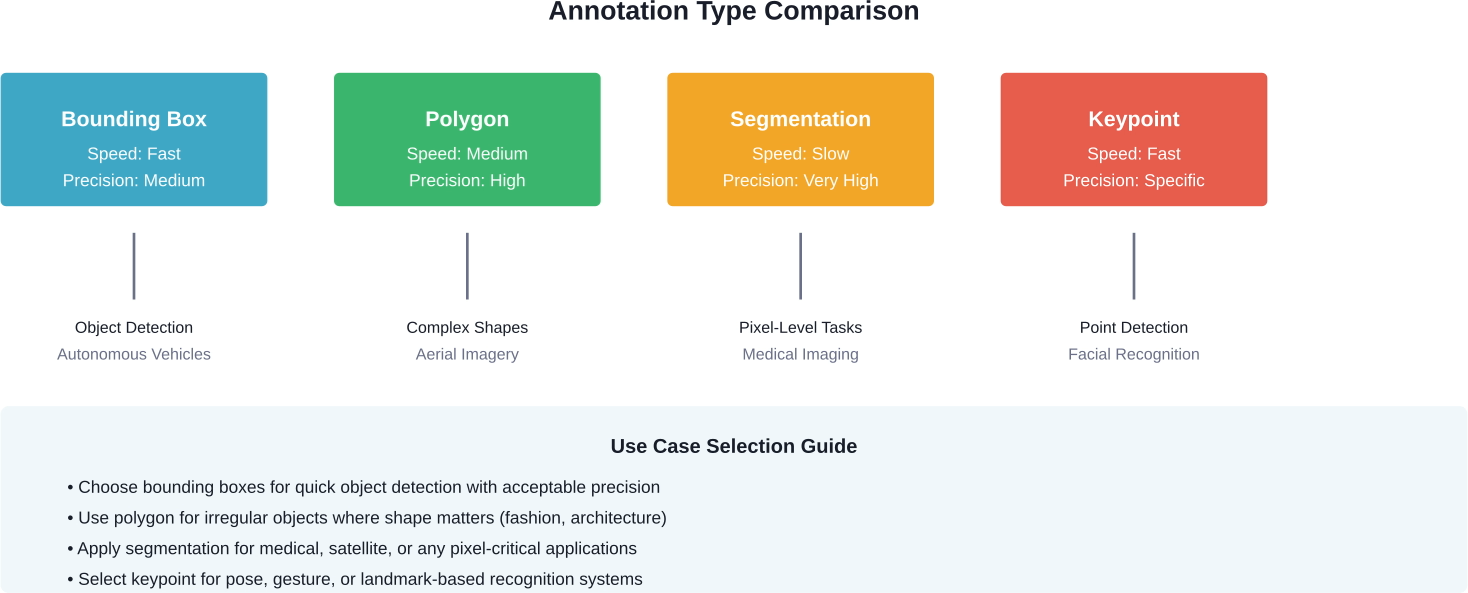

Common Annotation Types

Different computer vision tasks require different annotation approaches. Bounding boxes draw rectangular frames around objects—the simplest and fastest method, commonly used for object detection in autonomous vehicles and surveillance systems.

Polygon annotation traces irregular shapes with multiple points, providing more precision than bounding boxes. This works well for objects with complex boundaries like vehicles, buildings, or people in various poses.

Semantic segmentation assigns a class label to every pixel in an image. Medical imaging relies heavily on this technique to identify tumors, organs, or tissue types with pixel-level accuracy.

Keypoint annotation marks specific points of interest on an image—like facial landmarks for emotion recognition or joint positions for pose estimation. Retail and fashion applications use this for virtual try-on features.

Classification tags entire images with categorical labels. A dataset of animal photos might be classified as 'dog', 'cat', 'bird', or 'other'—useful for content moderation, product categorization, and search functionality.
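
To make these annotation types concrete, here is a minimal Python sketch of how one labeled image might be represented in code. The class and field names (`BoundingBox`, `Polygon`, `Keypoint`, `ImageAnnotation`) are illustrative assumptions rather than any provider's or tool's actual export format, and the segmentation mask is simplified to a nested list of class IDs.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class BoundingBox:
    label: str
    x: float       # left edge, in pixels
    y: float       # top edge, in pixels
    width: float
    height: float


@dataclass
class Polygon:
    label: str
    points: List[Tuple[float, float]]  # ordered (x, y) vertices


@dataclass
class Keypoint:
    name: str      # e.g. "left_elbow" (hypothetical keypoint name)
    x: float
    y: float
    visible: bool = True


@dataclass
class ImageAnnotation:
    image_id: str
    image_label: str = ""  # whole-image classification tag
    boxes: List[BoundingBox] = field(default_factory=list)
    polygons: List[Polygon] = field(default_factory=list)
    keypoints: List[Keypoint] = field(default_factory=list)
    # Semantic segmentation: one class ID per pixel, stored row by row.
    mask: Optional[List[List[int]]] = None


def polygon_area(poly: Polygon) -> float:
    """Area enclosed by the polygon, computed with the shoelace formula."""
    pts = poly.points
    total = 0.0
    for i in range(len(pts)):
        x1, y1 = pts[i]
        x2, y2 = pts[(i + 1) % len(pts)]
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


# Example record combining several annotation types on one image.
record = ImageAnnotation(image_id="img_001", image_label="street")
record.boxes.append(BoundingBox("car", x=5, y=5, width=20, height=10))
record.polygons.append(Polygon("building", [(0, 0), (10, 0), (10, 10), (0, 10)]))
```

Agreeing on a structure like this with a provider before work starts makes it easier to check that deliverables can be loaded into the training pipeline without manual conversion.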

Why Organizations Outsource Annotation Work

Building an in-house annotation team sounds straightforward until the real costs emerge. Hiring, training, managing annotators, investing in annotation platforms, and maintaining quality control systems add up quickly.

Outsourcing image annotation offers several compelling advantages over in-house operations.

Cost Reduction

Specialized annotation companies operate at scale, distributing infrastructure and tooling costs across multiple clients. They've already invested in annotation platforms, quality assurance systems, and trained workforces.

Organizations save on recruitment, training, benefits, office space, and software licenses. The pay-per-project or pay-per-label pricing model converts fixed overhead into variable costs that scale with actual needs.

Speed and Scalability

Annotation providers maintain large teams that can ramp up or down based on project requirements. Need 100,000 images labeled in two weeks? An outsourcing partner can assign dozens of annotators immediately.

In-house teams face capacity constraints. Hiring and training new annotators takes weeks or months. Projects with fluctuating volumes create staffing challenges—too many people during slow periods, not enough during crunches.

Quality and Expertise

Professional annotation companies specialize in this work. They've developed workflows, quality control processes, and expertise across multiple annotation types and domains.

Many employ tiered annotation teams—entry-level annotators handle straightforward tasks while specialists tackle complex medical, satellite, or technical imagery. Quality assurance teams review work systematically rather than relying on spot checks.

According to research on annotation work practices, professional annotation centers typically employ graduates with relevant education, and more than 50% of their employees are women, which brings diverse perspectives to labeling tasks.

Focus on Core Competencies

Data science and engineering teams should build models, not spend weeks drawing bounding boxes. Outsourcing frees internal resources to focus on algorithm development, architecture design, and model optimization.

Annotation is essential but rarely a competitive differentiator. The competitive advantage lies in what's built with the annotated data, not in the annotation process itself.

Ensure Accuracy With Image Annotation Outsourcing

Image annotation outsourcing requires precision and process control. NeoWork provides trained remote teams for data labeling, evaluation, and AI dataset preparation. With a 91% annualized teammate retention rate and a 3.2% candidate selectivity rate, projects benefit from stable annotators who understand your guidelines in depth. This reduces inconsistency and improves dataset quality.

Ready to Strengthen Your Annotation Pipeline?

Talk with NeoWork to:

- build a dedicated remote annotation team

- improve labeling accuracy and QA workflows

- scale dataset production efficiently

👉 Reach out to NeoWork to plan your image annotation outsourcing strategy.

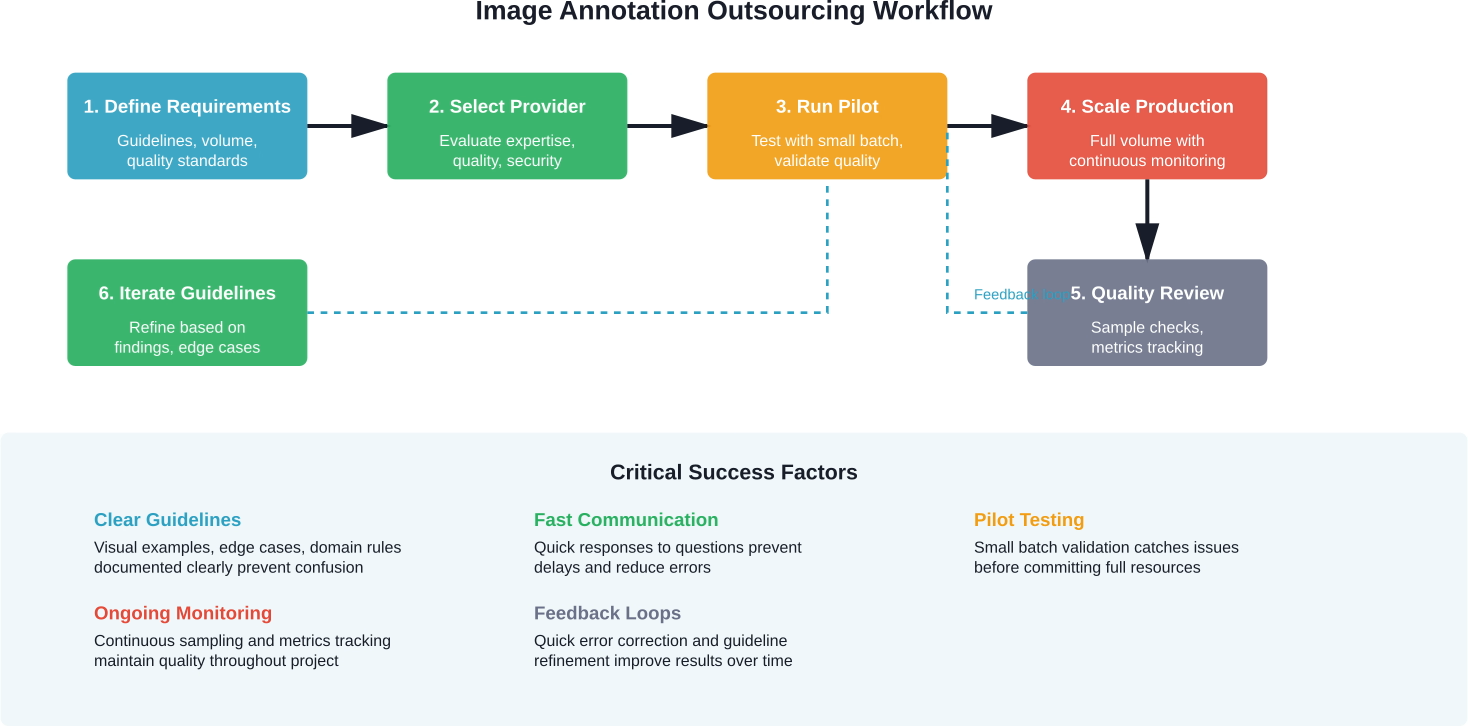

The Outsourcing Process Step by Step

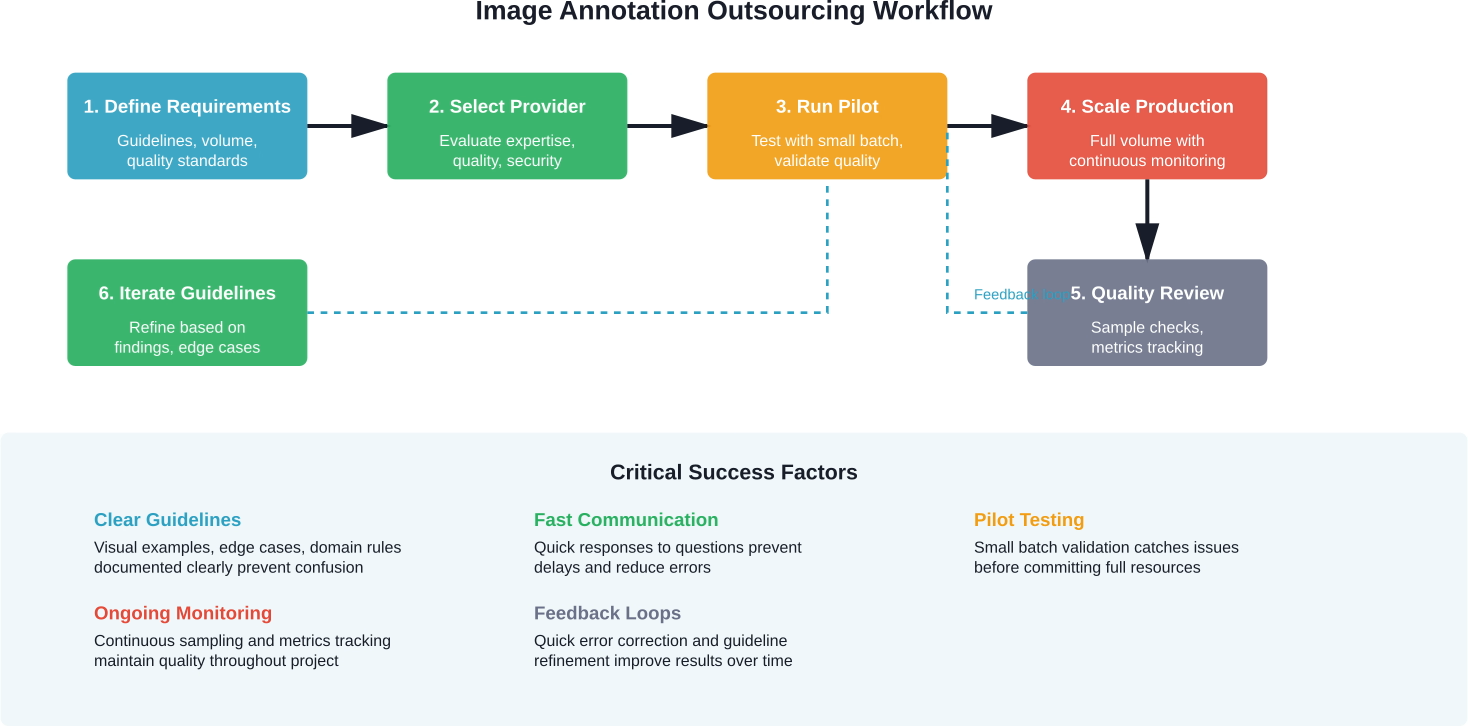

Successful annotation outsourcing isn't just about finding a vendor and sending them images. The process requires planning, clear communication, and active management.

Define Project Requirements

Start by documenting exactly what needs to be annotated. Which annotation types are required? What classes or categories should be labeled? Are there specific quality thresholds?

Create detailed annotation guidelines. These should include visual examples of correct and incorrect annotations, edge case handling, and any domain-specific rules. The clearer the guidelines, the better the results.

Specify volume and timeline requirements. How many images need annotation? What's the deadline? Are there multiple batches or phases?

Select the Right Partner

Not all annotation providers are equal. Evaluate potential partners based on several criteria.

Look for domain expertise relevant to the project. Medical imaging requires different knowledge than retail product photos. Ask about previous similar projects and request sample work.

Assess their quality control processes. How do they ensure accuracy? What's their error rate? Do they use multiple annotators per image? How are disagreements resolved?

Check their security and compliance practices. Where is data stored? Who has access? Do they comply with relevant regulations like GDPR or HIPAA?

Review their technology stack. What annotation tools do they use? Can they integrate with existing workflows? Do they provide APIs for programmatic access?

Understand pricing structure. Is it per image, per label, per hour? Are there minimum commitments? What happens if quality doesn't meet expectations?

Run a Pilot Project

Never commit to a large project without testing the provider first. Start with a small batch of perhaps 500 to 1,000 images that represent the full dataset's complexity.

Evaluate the pilot results carefully. Check annotation accuracy against ground truth or expert review. Measure how well the provider followed guidelines. Look for systematic errors or misunderstandings.

Assess communication and responsiveness. How quickly do they address questions? Are they proactive about clarifying ambiguities?

Use pilot results to refine guidelines and processes before scaling up.

Establish Clear Communication Channels

Set up regular check-ins—weekly or bi-weekly depending on project duration. These shouldn't be formal status meetings, but quick sync-ups to address questions and review samples.

Create a shared system for questions and clarifications. Annotation teams will encounter edge cases and ambiguities. They need a fast way to get answers without pausing work.

Define escalation paths for quality issues or delays.

Monitor Quality Continuously

Don't wait until the end to check quality. Sample and review deliverables throughout the project.

Track key metrics: annotation accuracy, consistency across annotators, turnaround time per batch, and error types. Some errors are more problematic than others—a slightly misaligned bounding box matters less than a completely mislabeled object.
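
One way to put ongoing sampling into practice is to draw a random sample from each delivered batch, review it by hand, and estimate the batch error rate with a confidence interval. The sketch below is a minimal illustration under assumed parameters: the function names, the sample size, and the choice of a Wilson score interval are assumptions made here, not methods prescribed by any provider.

```python
import math
import random


def sample_for_review(item_ids, sample_size, seed=0):
    """Draw a reproducible random sample of item IDs for manual review."""
    ids = list(item_ids)
    rng = random.Random(seed)
    return rng.sample(ids, min(sample_size, len(ids)))


def wilson_interval(errors, n, z=1.96):
    """Wilson score interval for an error rate (z=1.96 gives roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = errors / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


# Example: review 100 items from a 5,000-image batch and find 5 errors.
to_review = sample_for_review(range(5000), sample_size=100)
low, high = wilson_interval(errors=5, n=100)
```

The interval is a reminder that a small sample gives only a rough estimate: 5 errors in 100 reviewed items is consistent with a true error rate noticeably above or below 5%, so larger samples are worth the effort when the acceptance threshold is strict.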

Provide feedback quickly. When errors appear, document them with examples and share them with the provider immediately. This prevents repeated mistakes across thousands of images.

Some organizations implement automated quality checks—scripts that flag obvious errors like impossible box dimensions, missing required fields, or class imbalances that suggest systematic mislabeling.

Quality Control Strategies That Work

Quality control separates successful annotation projects from disasters. Even experienced providers make mistakes without proper oversight.

Multi-Annotator Consensus

Have multiple annotators label the same images independently, then compare results. Agreement indicates confidence, while disagreement flags ambiguous cases that need expert review.

Research on crowdsourced annotation suggests that overlapping annotations improve quality significantly, though they increase costs. The trade-off depends on project requirements—mission-critical applications justify the expense.
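
For classification-style labels, consensus can be sketched as a simple majority vote with an agreement threshold. The function name and the two-thirds default below are illustrative assumptions; real projects may weight annotators by track record or use more elaborate aggregation.

```python
from collections import Counter


def consensus_label(labels, min_agreement=2 / 3):
    """Return (label, agreement) if enough annotators agree, else (None, agreement).

    `labels` holds the independent labels given to one image.
    """
    if not labels:
        raise ValueError("at least one label is required")
    top_label, top_count = Counter(labels).most_common(1)[0]
    agreement = top_count / len(labels)
    if agreement >= min_agreement:
        return top_label, agreement
    return None, agreement  # ambiguous: route to expert review


accepted = consensus_label(["car", "car", "truck"])
needs_expert = consensus_label(["car", "truck", "bus"])
```

Images that come back as `None` are the ones worth sending to an expert reviewer, and they often point to gaps in the guidelines.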

Golden Datasets

Create a small set of perfectly annotated images—the gold standard. Periodically insert these into the workflow without telling annotators which ones they are.

Track how well annotators perform on golden images. Scores below an agreed threshold trigger additional training or reassignment to simpler tasks.
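
Scoring against a golden set can be sketched as follows. The data layout (dictionaries keyed by image ID and annotator name), the function names, and the 0.9 threshold are assumptions for illustration only.

```python
def score_against_gold(gold, submitted):
    """Compute each annotator's accuracy on golden images.

    gold:      dict mapping image_id -> correct label
    submitted: dict mapping annotator -> dict of image_id -> submitted label
    """
    scores = {}
    for annotator, answers in submitted.items():
        checked = [img for img in answers if img in gold]
        if not checked:
            continue  # this annotator has not seen any golden images yet
        correct = sum(answers[img] == gold[img] for img in checked)
        scores[annotator] = correct / len(checked)
    return scores


def needs_retraining(scores, threshold=0.9):
    """List annotators whose golden-set accuracy falls below the threshold."""
    return sorted(name for name, score in scores.items() if score < threshold)


gold = {"g1": "cat", "g2": "dog", "g3": "bird", "g4": "dog"}
submitted = {
    "annotator_a": {"g1": "cat", "g2": "dog", "g3": "bird", "g4": "dog", "x9": "cat"},
    "annotator_b": {"g1": "cat", "g2": "cat", "g3": "bird", "g4": "cat"},
}
scores = score_against_gold(gold, submitted)
flagged = needs_retraining(scores)
```

Non-golden images (such as `x9` above) are ignored, so the same function can run over an annotator's full output without separating the hidden golden items first.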

Hierarchical Review

Structure quality control in tiers. Entry-level annotators handle initial labeling. Mid-level reviewers spot-check and correct errors. Senior specialists audit complex cases and maintain consistency.

This approach balances cost and quality—junior annotators are less expensive but need oversight, while specialists focus their time where expertise matters most.

Automated Validation

Build scripts that catch obvious errors: bounding boxes outside image boundaries, impossibly small annotations, required fields left empty, or class distributions that deviate dramatically from expected patterns.

Automated checks don't replace human review, but they catch simple mistakes before human reviewers waste time on them.
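
A minimal sketch of such checks is shown below. It assumes each bounding-box annotation arrives as a dictionary with `image_id`, `label`, `x`, `y`, `width`, and `height` keys; the field names, the minimum side length, and the tolerance are hypothetical and would need to match the actual export format and project expectations.

```python
from collections import Counter

REQUIRED_FIELDS = ("image_id", "label", "x", "y", "width", "height")


def validate_box(ann, image_width, image_height, min_side=2):
    """Return a list of problems found in one bounding-box annotation."""
    missing = [f for f in REQUIRED_FIELDS if ann.get(f) is None]
    if missing:
        return ["missing fields: " + ", ".join(missing)]

    problems = []
    x, y, w, h = ann["x"], ann["y"], ann["width"], ann["height"]
    if w <= 0 or h <= 0:
        problems.append("non-positive box size")
    elif w < min_side or h < min_side:
        problems.append("box smaller than minimum side")
    if x < 0 or y < 0 or x + w > image_width or y + h > image_height:
        problems.append("box extends outside image")
    return problems


def class_share_deviations(labels, expected_share, tolerance=0.15):
    """Flag classes whose observed share differs from the expected share."""
    counts = Counter(labels)
    total = sum(counts.values())
    flagged = {}
    for cls, expected in expected_share.items():
        observed = counts.get(cls, 0) / total if total else 0.0
        if abs(observed - expected) > tolerance:
            flagged[cls] = observed
    return flagged


good = {"image_id": "a", "label": "car", "x": 10, "y": 10, "width": 50, "height": 40}
outside = {"image_id": "a", "label": "car", "x": 600, "y": 10, "width": 50, "height": 40}
print(validate_box(good, 640, 480))     # no problems expected
print(validate_box(outside, 640, 480))  # box crosses the right image edge
```

Running checks like these on every delivery is cheap, and the flagged items give reviewers a focused starting point.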

Inter-Annotator Agreement Metrics

Measure consistency across annotators using metrics like Cohen's kappa or Intersection over Union for bounding boxes. According to research on annotation variability, paired bootstrap methods help assess whether observed differences are statistically meaningful.

Track these metrics over time. Declining agreement suggests guideline drift or inadequate training.
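
Both metrics are straightforward to compute. The sketch below implements Cohen's kappa for two annotators' class labels and Intersection over Union for two boxes given as `(x_min, y_min, x_max, y_max)`; the function names and box format are choices made for this example.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa: agreement between two annotators, corrected for chance."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: probability both pick the same class independently.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical class throughout
    return (observed - expected) / (1 - expected)


def box_iou(box_a, box_b):
    """Intersection over Union for boxes given as (x_min, y_min, x_max, y_max)."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    intersection = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


kappa = cohens_kappa(["cat", "cat", "dog", "dog"], ["cat", "cat", "dog", "cat"])
iou = box_iou((0, 0, 10, 10), (5, 0, 15, 10))
```

In the example, the two annotators agree on 3 of 4 labels, but half of that agreement would be expected by chance, which is why kappa is lower than raw agreement.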

Common Challenges and How to Handle Them

Even well-planned projects hit obstacles. Knowing what to expect helps address issues quickly.

Ambiguous Guidelines

Annotators encounter edge cases that guidelines don't cover. Should a person wearing a helmet be labeled 'person' or 'person-with-helmet'? Does a partially visible car get annotated?

Solution: Build a living FAQ section in annotation guidelines. When edge cases emerge, document the decision and add it to guidelines immediately. Share updates with all annotators.

Quality Drift Over Time

Annotation quality sometimes degrades as projects progress. Annotators get fatigued, guidelines are forgotten, or subtle misunderstandings compound.

Solution: Regular quality sampling throughout the project, not just at the start. Periodic refresher training helps, especially for long projects.
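
Drift can be made visible by comparing recent audit results with an early baseline. The following sketch assumes one audited accuracy value per delivered batch; the window sizes and the allowed drop of 0.03 are arbitrary illustrative values, not recommended thresholds.

```python
def flag_quality_drift(batch_accuracies, baseline_batches=3, window=3, max_drop=0.03):
    """Return indices of batches where the recent average falls below baseline.

    The baseline is the mean accuracy of the first `baseline_batches` batches.
    Each later window of `window` consecutive batches is compared against it,
    and the index of the last batch in the window is reported when the drop
    exceeds `max_drop`.
    """
    if len(batch_accuracies) < baseline_batches + window:
        return []  # not enough history to compare yet
    baseline = sum(batch_accuracies[:baseline_batches]) / baseline_batches
    flagged = []
    for end in range(baseline_batches + window, len(batch_accuracies) + 1):
        recent = batch_accuracies[end - window:end]
        if baseline - sum(recent) / window > max_drop:
            flagged.append(end - 1)
    return flagged


audited = [0.97, 0.96, 0.98, 0.97, 0.96, 0.95, 0.92, 0.90, 0.89]
drifting = flag_quality_drift(audited)
```

Averaging over a window rather than reacting to a single weak batch reduces false alarms from one unusually difficult set of images.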

Domain Knowledge Gaps

Medical imagery, satellite photos, or technical diagrams require specialized knowledge that general annotators lack.

Solution: Work with providers who recruit annotators with relevant backgrounds. Medical imaging needs annotators with clinical training. Satellite imagery benefits from geography or environmental science backgrounds.

Data Security Concerns

Sending proprietary or sensitive data to third parties creates risk.

Solution: Vet providers' security practices thoroughly. Check data handling policies, storage locations, access controls, and compliance certifications. For highly sensitive data, consider on-premise or private cloud annotation platforms with provider-managed labor but client-controlled infrastructure.

Scope Creep

Projects expand as requirements evolve. New annotation types get added, class definitions change, or dataset size grows beyond original estimates.

Solution: Build flexibility into contracts. Define change request processes and pricing adjustments upfront. Treat major scope changes as new projects with fresh pilots and guideline reviews.

Selecting an Annotation Provider

The market has dozens of annotation providers ranging from crowdsourcing platforms to specialized boutique firms. Each model has trade-offs.

Managed Service Providers

These companies employ dedicated annotation teams and handle the entire process—from tool setup to quality control. They typically offer higher quality and better communication but at premium pricing.

Best for: Projects requiring high accuracy, complex annotation types, or domain expertise.

Crowdsourcing Platforms

Platforms distribute tasks across large pools of gig workers. They're fast and inexpensive, but quality varies significantly. Research on crowdsourced labeling indicates that although these platforms lack the internal QA mechanisms of dedicated annotation companies, they work well for simple tasks when redundancy is built in.

Best for: Large-volume simple tasks where redundancy can compensate for variable quality, or projects with tight budgets.

Hybrid Models

Some providers combine crowdsourcing for initial labeling with expert review for quality control. This balances cost and accuracy.

Best for: Projects with moderate quality requirements and medium budgets.

Specialized Domain Experts

Niche providers focus on specific industries—medical imaging, autonomous vehicles, agriculture, or retail. They employ annotators with relevant expertise.

Best for: Projects where domain knowledge is critical to accurate labeling.

Key Evaluation Criteria

When comparing providers, ask specific questions. What's their typical accuracy rate for similar projects? How do they measure and report quality?

Request client references and check them. Ask references about communication, adherence to deadlines, and how the provider handled problems.

Evaluate their annotation platform. Is it intuitive? Does it support the required annotation types? Can it export in needed formats?

Check team qualifications. What's the annotator screening process? What training do they receive? How much turnover do they have?

Understand project management approach. Who's the main point of contact? How often will they provide updates? What metrics do they track?

Pricing Models Explained

Annotation pricing varies based on complexity, volume, quality requirements, and provider type.

Per-Image Pricing

Simple flat rate per image regardless of annotation complexity. Works for projects where images are relatively uniform.

Advantage: Predictable budgeting. Disadvantage: Doesn't account for variation in image complexity.

Per-Object Pricing

Charged based on number of objects annotated. An image with 50 labeled cars costs more than one with 5.

Advantage: Fairer for variable object counts. Disadvantage: Final costs uncertain until annotation is complete.

Per-Hour Pricing

Annotators bill based on time spent. Common for very complex or exploratory projects where per-unit pricing is hard to estimate.

Advantage: Flexible for unpredictable tasks. Disadvantage: Incentivizes slow work without proper oversight.

Tiered Pricing

Different rates for different annotation types or complexity levels. Bounding boxes cost less than polygon segmentation, which costs less than pixel-perfect semantic segmentation.

Advantage: Matches cost to effort required. Disadvantage: More complex to estimate and invoice.

Most providers offer custom quotes based on project specifics. Provide detailed requirements to get accurate estimates. Always ask about minimum commitments, rush fees, and revision policies.
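
Before requesting quotes, it can help to estimate the same workload under each pricing model. The sketch below does this arithmetic; every rate and volume in it is a made-up placeholder for illustration, not a market price.

```python
def per_image_cost(num_images, rate_per_image):
    """Flat rate per image, regardless of content."""
    return num_images * rate_per_image


def per_object_cost(objects_per_image, rate_per_object):
    """Cost scales with the total number of labeled objects."""
    return sum(objects_per_image) * rate_per_object


def per_hour_cost(num_images, seconds_per_image, hourly_rate):
    """Cost scales with annotator time spent."""
    return num_images * seconds_per_image / 3600 * hourly_rate


def tiered_cost(counts_by_type, rate_by_type):
    """Different rates for different annotation types."""
    return sum(count * rate_by_type[kind] for kind, count in counts_by_type.items())


# Hypothetical workload: 1,200 images averaging 30 seconds each at 12.00 per hour.
hourly_estimate = per_hour_cost(1200, seconds_per_image=30, hourly_rate=12.0)

# Hypothetical tiered job: cheap boxes plus a smaller number of costly polygons.
tiered_estimate = tiered_cost(
    {"bounding_box": 100, "polygon": 10},
    {"bounding_box": 0.04, "polygon": 0.50},
)
```

Comparing the estimates side by side shows which model carries the most budget risk for a given dataset, for example per-object pricing on images with highly variable object counts.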

When In-House Annotation Makes Sense

Outsourcing isn't always the answer. Some situations favor building internal annotation capabilities.

Highly sensitive data that can't leave the organization for legal, competitive, or ethical reasons might require in-house annotation. Healthcare data subject to HIPAA, classified government imagery, and proprietary product designs fall into this category.

Continuous, ongoing annotation needs with relatively stable volume justify the fixed costs of an in-house team. If annotation is a permanent operational requirement rather than project-based, the economics shift.

Projects requiring deep proprietary domain knowledge that's difficult to transfer externally sometimes work better in-house. When annotators need weeks of specialized training, building internal expertise makes more sense.

Real-time or extremely tight feedback loops between model development and annotation benefit from integrated teams. If machine learning engineers need to iterate on annotation schemas daily, in-house teams offer more agility.

That said, many organizations successfully use hybrid approaches—maintaining small in-house teams for sensitive or exploratory work while outsourcing high-volume production labeling.

Future Trends in Annotation Outsourcing

The annotation industry continues evolving as AI advances and demand grows.

Automation-assisted annotation is expanding. Tools now offer pre-labeling using existing models, with human annotators correcting and refining rather than labeling from scratch. This speeds up work and reduces costs while maintaining quality through human oversight.

Active learning strategies help prioritize which images need annotation. Instead of labeling entire datasets randomly, systems identify the most informative examples—those where the model is most uncertain or where labels would improve performance most.
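
One common form of this is uncertainty sampling, where images are ranked by the entropy of the model's predicted class probabilities. The sketch below assumes predictions are already available as a dictionary of image ID to probability list; the function names and the budget parameter are illustrative.

```python
import math


def prediction_entropy(probs):
    """Shannon entropy of a probability distribution (higher = more uncertain)."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_annotation(predictions, budget):
    """Pick the `budget` images whose predictions are most uncertain.

    predictions: dict mapping image_id -> list of class probabilities
    """
    ranked = sorted(
        predictions,
        key=lambda image_id: prediction_entropy(predictions[image_id]),
        reverse=True,
    )
    return ranked[:budget]


predictions = {
    "img_a": [0.98, 0.02],  # confident prediction
    "img_b": [0.50, 0.50],  # maximally uncertain
    "img_c": [0.70, 0.30],
}
queue = select_for_annotation(predictions, budget=2)
```

Sending only the selected images to the annotation provider concentrates spending on examples the current model finds hardest, although a purely uncertainty-driven queue can over-sample unusual images, so some projects mix in a random sample as well.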

Synthetic data generation reduces annotation needs for some applications. Computer graphics can create labeled training data for scenarios difficult or expensive to capture and annotate manually.

Organizations increasingly recognize annotation as a strategic function requiring formal governance, not just a commodity service. This drives more sophisticated vendor management and quality frameworks.

The annotation workforce is professionalizing. According to research on annotation work practices, professional annotation centers are employing more educated workers, often recent graduates, and developing career paths in annotation rather than treating it purely as low-skill labor.

Making Outsourcing Work

Image annotation outsourcing offers compelling advantages for most AI and machine learning projects. Cost savings, scalability, access to expertise, and faster time-to-market make it the practical choice for organizations focused on building models rather than managing annotation operations.

Success requires treating outsourcing as a partnership rather than a transaction. Clear communication, detailed guidelines, pilot testing, and continuous quality monitoring separate successful projects from problematic ones.

The annotation industry has matured significantly. Professional providers employ sophisticated quality control processes, experienced teams, and proven workflows. The market offers options for every budget and requirement—from low-cost crowdsourcing to premium specialized services.

Start small. Run pilots with multiple providers to evaluate quality, communication, and fit. Scale successful partnerships gradually while maintaining quality oversight. Build feedback loops that continuously improve guidelines and processes.

The quality of training data fundamentally determines model performance. Investing time in finding the right annotation partner and establishing robust quality processes pays dividends throughout the model lifecycle. Poor annotations create technical debt that compounds as models are deployed and iterated.

Ready to outsource your annotation project? Define requirements clearly, evaluate providers thoroughly, pilot cautiously, and scale confidently. The right partner transforms annotation from a bottleneck into a competitive advantage.

Image Annotation Outsourcing Guide 2026

Image annotation outsourcing involves hiring specialized companies to label images for AI and machine learning projects, providing cost savings, scalability, and access to expert teams. Outsourcing delivers faster turnaround times, higher quality annotations, and allows internal teams to focus on core AI development rather than time-intensive labeling work.

Machine learning models don't understand images the way humans do. To a computer vision system, an image is just a grid of pixels with numerical values. The bridge between raw pixels and real-world understanding starts with annotation—the process of labeling images so algorithms can learn to detect, classify, and interpret visual content.

But here's the thing: annotation is labor-intensive. A single autonomous driving dataset might require millions of labeled frames. A medical imaging project needs pixel-perfect segmentation. An e-commerce visual search platform demands consistent product tagging across thousands of categories.

That's where outsourcing comes in. According to industry research, the global data collection and labeling market was valued at $2.22 billion in 2022, and is expected to expand at a compound annual growth rate of 28.9% from 2023 to 2030 driven by AI adoption across industries. Organizations are increasingly turning to specialized annotation providers rather than building in-house teams.

This guide walks through everything needed to outsource image annotation successfully—from understanding the basics to selecting the right partner and managing quality control.

What Image Annotation Actually Means

Image annotation is the process of adding metadata to images to make them comprehensible for AI systems. These labels identify objects, regions, shapes, boundaries, or attributes within an image and translate visual content into machine-readable data.

The annotation process supplies training data for computer vision models. Each labeled image teaches the algorithm what to look for, helping it recognize patterns and make accurate predictions on new, unseen data.

Common Annotation Types

Different computer vision tasks require different annotation approaches. Bounding boxes draw rectangular frames around objects—the simplest and fastest method, commonly used for object detection in autonomous vehicles and surveillance systems.

Polygon annotation traces irregular shapes with multiple points, providing more precision than bounding boxes. This works well for objects with complex boundaries like vehicles, buildings, or people in various poses.

Semantic segmentation assigns a class label to every pixel in an image. Medical imaging relies heavily on this technique to identify tumors, organs, or tissue types with pixel-level accuracy.

Keypoint annotation marks specific points of interest on an image—like facial landmarks for emotion recognition or joint positions for pose estimation. Retail and fashion applications use this for virtual try-on features.

Classification tags entire images with categorical labels. A dataset of animal photos might be classified as 'dog', 'cat', 'bird', or 'other'—useful for content moderation, product categorization, and search functionality.

Why Organizations Outsource Annotation Work

Building an in-house annotation team sounds straightforward until the real costs emerge. Hiring, training, managing annotators, investing in annotation platforms, and maintaining quality control systems adds up quickly.

Outsourcing image annotation offers several compelling advantages over in-house operations.

Cost Reduction

Specialized annotation companies operate at scale, distributing infrastructure and tooling costs across multiple clients. They've already invested in annotation platforms, quality assurance systems, and trained workforces.

Organizations save on recruitment, training, benefits, office space, and software licenses. The pay-per-project or pay-per-label pricing model converts fixed overhead into variable costs that scale with actual needs.

Speed and Scalability

Annotation providers maintain large teams that can ramp up or down based on project requirements. Need 100,000 images labeled in two weeks? An outsourcing partner can assign dozens of annotators immediately.

In-house teams face capacity constraints. Hiring and training new annotators takes weeks or months. Projects with fluctuating volumes create staffing challenges—too many people during slow periods, not enough during crunches.

Quality and Expertise

Professional annotation companies specialize in this work. They've developed workflows, quality control processes, and expertise across multiple annotation types and domains.

Many employ tiered annotation teams—entry-level annotators handle straightforward tasks while specialists tackle complex medical, satellite, or technical imagery. Quality assurance teams review work systematically rather than relying on spot checks.

According to research on annotation work practices, professional annotation centers typically employ graduates with relevant education, with more than 50% of employees being female, bringing diverse perspectives to labeling tasks.

Focus on Core Competencies

Data science and engineering teams should build models, not spend weeks drawing bounding boxes. Outsourcing frees internal resources to focus on algorithm development, architecture design, and model optimization.

Annotation is essential but rarely a competitive differentiator. The competitive advantage lies in what's built with the annotated data, not in the annotation process itself.

Ensure Accuracy With Image Annotation Outsourcing

Image annotation outsourcing requires precision and process control. NeoWork provides trained remote teams for data labeling, evaluation, and AI dataset preparation. With a 91% annualized teammate retention rate and a 3.2% candidate selectivity rate, projects benefit from stable annotators who understand your guidelines in depth. This reduces inconsistency and improves dataset quality.

Ready to Strengthen Your Annotation Pipeline?

Talk with NeoWork to:

- build a dedicated remote annotation team

- improve labeling accuracy and QA workflows

- scale dataset production efficiently

👉 Reach out to NeoWork to plan your image annotation outsourcing strategy.

The Outsourcing Process Step by Step

Successful annotation outsourcing isn't just about finding a vendor and sending them images. The process requires planning, clear communication, and active management.

Define Project Requirements

Start by documenting exactly what needs to be annotated. Which annotation types are required? What classes or categories should be labeled? Are there specific quality thresholds?

Create detailed annotation guidelines. These should include visual examples of correct and incorrect annotations, edge case handling, and any domain-specific rules. The clearer the guidelines, the better the results.

Specify volume and timeline requirements. How many images need annotation? What's the deadline? Are there multiple batches or phases?

Select the Right Partner

Not all annotation providers are equal. Evaluate potential partners based on several criteria.

Look for domain expertise relevant to the project. Medical imaging requires different knowledge than retail product photos. Ask about previous similar projects and request sample work.

Assess their quality control processes. How do they ensure accuracy? What's their error rate? Do they use multiple annotators per image? How are disagreements resolved?

Check their security and compliance practices. Where is data stored? Who has access? Do they comply with relevant regulations like GDPR or HIPAA?

Review their technology stack. What annotation tools do they use? Can they integrate with existing workflows? Do they provide APIs for programmatic access?

Understand pricing structure. Is it per image, per label, per hour? Are there minimum commitments? What happens if quality doesn't meet expectations?

Run a Pilot Project

Never commit to a large project without testing the provider first. Start with a small batch—maybe 500-1,000 images that represent the full dataset's complexity.

Evaluate the pilot results carefully. Check annotation accuracy against ground truth or expert review. Measure how well the provider followed guidelines. Look for systematic errors or misunderstandings.

Assess communication and responsiveness. How quickly do they address questions? Are they proactive about clarifying ambiguities?

Use pilot results to refine guidelines and processes before scaling up.

Establish Clear Communication Channels

Set up regular check-ins—weekly or bi-weekly depending on project duration. These shouldn't be formal status meetings, but quick sync-ups to address questions and review samples.

Create a shared system for questions and clarifications. Annotation teams will encounter edge cases and ambiguities. They need a fast way to get answers without pausing work.

Define escalation paths for quality issues or delays.

Monitor Quality Continuously

Don't wait until the end to check quality. Sample and review deliverables throughout the project.

Track key metrics: annotation accuracy, consistency across annotators, turnaround time per batch, and error types. Some errors are more problematic than others—a slightly misaligned bounding box matters less than a completely mislabeled object.

Provide feedback quickly. When errors appear, document them with examples and share them with the provider immediately. This prevents repeated mistakes across thousands of images.

Some organizations implement automated quality checks—scripts that flag obvious errors like impossible box dimensions, missing required fields, or class imbalances that suggest systematic mislabeling.

Quality Control Strategies That Work

Quality control separates successful annotation projects from disasters. Even experienced providers make mistakes without proper oversight.

Multi-Annotator Consensus

Have multiple annotators label the same images independently, then compare results. Agreement indicates confidence, while disagreement flags ambiguous cases that need expert review.

Research on crowdsourced annotation suggests that overlapping annotations improve quality significantly, though they increase costs. The trade-off depends on project requirements—mission-critical applications justify the expense.

Golden Datasets

Create a small set of perfectly annotated images—the gold standard. Periodically insert these into the workflow without telling annotators which ones they are.

Track how well annotators perform on golden images. Scores below threshold trigger additional training or reassignment to simpler tasks.
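The scoring step can be sketched as follows, assuming class labels and exact-match scoring. The 0.9 threshold, the annotator names, and the image IDs are hypothetical; box or mask tasks would need an overlap-based score instead of an equality check.

```python
def golden_accuracy(submissions, gold):
    """Fraction of golden images an annotator labeled correctly.

    Both arguments map image ID -> label. Images that are not in the
    golden set are ignored. Returns None if no golden image was seen.
    """
    scored = [img for img in submissions if img in gold]
    if not scored:
        return None
    correct = sum(1 for img in scored if submissions[img] == gold[img])
    return correct / len(scored)

def annotators_needing_training(all_submissions, gold, threshold=0.9):
    """List annotators whose golden-set accuracy is below `threshold`."""
    flagged = []
    for annotator, subs in all_submissions.items():
        acc = golden_accuracy(subs, gold)
        if acc is not None and acc < threshold:
            flagged.append(annotator)
    return flagged

# Hypothetical golden set and two annotators' work.
gold = {"g1": "car", "g2": "truck", "g3": "bus", "g4": "car"}
all_submissions = {
    "annotator_a": {"g1": "car", "g2": "truck", "g3": "bus", "g4": "car", "x9": "van"},
    "annotator_b": {"g1": "car", "g2": "car", "g3": "bus", "g4": "truck"},
}
print(annotators_needing_training(all_submissions, gold))
```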

Hierarchical Review

Structure quality control in tiers. Entry-level annotators handle initial labeling. Mid-level reviewers spot-check and correct errors. Senior specialists audit complex cases and maintain consistency.

This approach balances cost and quality—junior annotators are less expensive but need oversight, while specialists focus their time where expertise matters most.

Automated Validation

Build scripts that catch obvious errors: bounding boxes outside image boundaries, impossibly small annotations, required fields left empty, or class distributions that deviate dramatically from expected patterns.

Automated checks don't replace human review, but they catch simple mistakes before human reviewers waste time on them.
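A minimal sketch of such a script is shown below. It assumes each annotation is a dictionary with `label`, `x`, `y`, `width`, and `height` keys in pixel units; that schema, the function name, and the 2-pixel minimum are assumptions for this example, not a standard export format.

```python
def validate_annotation(ann, image_width, image_height, min_side=2):
    """Return a list of problems found in one bounding-box annotation."""
    problems = []
    # Required fields must be present and non-empty.
    for field in ("label", "x", "y", "width", "height"):
        if ann.get(field) in (None, ""):
            problems.append(f"missing field: {field}")
    if problems:
        return problems  # geometry checks need every field

    x, y, w, h = ann["x"], ann["y"], ann["width"], ann["height"]
    if w <= 0 or h <= 0:
        problems.append("non-positive box size")
    elif w < min_side or h < min_side:
        problems.append("box smaller than minimum side")
    if x < 0 or y < 0 or x + w > image_width or y + h > image_height:
        problems.append("box outside image bounds")
    return problems

# Hypothetical annotation on a 640x480 image: the box runs past the right edge.
bad_box = {"label": "car", "x": 600, "y": 10, "width": 100, "height": 40}
print(validate_annotation(bad_box, 640, 480))
```

Running a check like this on every delivered batch lets obviously broken records go back to the provider before a reviewer opens them.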

Inter-Annotator Agreement Metrics

Measure consistency across annotators using metrics like Cohen's kappa for class labels or Intersection over Union (IoU) for bounding boxes. According to research on annotation variability, paired bootstrap methods help assess whether observed differences are statistically meaningful.

Track these metrics over time. Declining agreement suggests guideline drift or inadequate training.
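Both metrics are short enough to compute directly. The sketch below implements Cohen's kappa for two annotators, (p_o - p_e) / (1 - p_e), where p_o is observed agreement and p_e is the agreement expected by chance, plus IoU for axis-aligned boxes. The box format `(x_min, y_min, x_max, y_max)` and the sample labels are assumptions for this example.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: product of each annotator's share, summed per class.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical class throughout
    return (observed - expected) / (1 - expected)

def iou(box_a, box_b):
    """Intersection over Union for boxes given as (x_min, y_min, x_max, y_max)."""
    inter_w = max(0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

# Hypothetical labels and boxes from two annotators.
print(cohens_kappa(["car", "car", "truck", "truck"], ["car", "car", "truck", "car"]))
print(iou((0, 0, 10, 10), (5, 0, 15, 10)))
```

Kappa corrects raw agreement for chance, so it is more informative than a plain match rate when one class dominates the dataset.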

Common Challenges and How to Handle Them

Even well-planned projects hit obstacles. Knowing what to expect helps address issues quickly.

Ambiguous Guidelines

Annotators encounter edge cases that guidelines don't cover. Should a person wearing a helmet be labeled 'person' or 'person-with-helmet'? Does a partially visible car get annotated?

Solution: Build a living FAQ section in annotation guidelines. When edge cases emerge, document the decision and add it to guidelines immediately. Share updates with all annotators.

Quality Drift Over Time

Annotation quality sometimes degrades as projects progress. Annotators get fatigued, guidelines are forgotten, or subtle misunderstandings compound.

Solution: Sample quality regularly throughout the project, not just at the start. Periodic refresher training also helps, especially on long projects.

Domain Knowledge Gaps

Medical imagery, satellite photos, or technical diagrams require specialized knowledge that general annotators lack.

Solution: Work with providers who recruit annotators with relevant backgrounds. Medical imaging needs annotators with clinical training. Satellite imagery benefits from geography or environmental science backgrounds.

Data Security Concerns

Sending proprietary or sensitive data to third parties creates risk.

Solution: Vet providers' security practices thoroughly. Check data handling policies, storage locations, access controls, and compliance certifications. For highly sensitive data, consider on-premises or private cloud annotation platforms, where the provider manages the labor but the client controls the infrastructure.

Scope Creep

Projects expand as requirements evolve. New annotation types get added, class definitions change, or dataset size grows beyond original estimates.

Solution: Build flexibility into contracts. Define change request processes and pricing adjustments upfront. Treat major scope changes as new projects with fresh pilots and guideline reviews.

Selecting an Annotation Provider

The market has dozens of annotation providers ranging from crowdsourcing platforms to specialized boutique firms. Each model has trade-offs.

Managed Service Providers

These companies employ dedicated annotation teams and handle the entire process—from tool setup to quality control. They typically offer higher quality and better communication but at premium pricing.

Best for: Projects requiring high accuracy, complex annotation types, or domain expertise.

Crowdsourcing Platforms

Platforms distribute tasks across large pools of gig workers. They're fast and inexpensive, but quality varies significantly. Research on crowdsourced labeling indicates that these platforms lack the internal QA mechanisms of dedicated companies but work well for simple tasks when redundancy is built in.

Best for: Large-volume simple tasks where redundancy can compensate for variable quality, or projects with tight budgets.

Hybrid Models

Some providers combine crowdsourcing for initial labeling with expert review for quality control. This balances cost and accuracy.

Best for: Projects with moderate quality requirements and medium budgets.

Specialized Domain Experts

Niche providers focus on specific industries—medical imaging, autonomous vehicles, agriculture, or retail. They employ annotators with relevant expertise.

Best for: Projects where domain knowledge is critical to accurate labeling.

Key Evaluation Criteria

When comparing providers, ask specific questions. What's their typical accuracy rate for similar projects? How do they measure and report quality?

Request client references and check them. Ask references about communication, adherence to deadlines, and how the provider handled problems.

Evaluate their annotation platform. Is it intuitive? Does it support the required annotation types? Can it export in needed formats?

Check team qualifications. What's the annotator screening process? What training do they receive? How much turnover do they have?

Understand their project management approach. Who's the main point of contact? How often will they provide updates? What metrics do they track?

Pricing Models Explained

Annotation pricing varies based on complexity, volume, quality requirements, and provider type.

Per-Image Pricing

Simple flat rate per image regardless of annotation complexity. Works for projects where images are relatively uniform.

Advantage: Predictable budgeting. Disadvantage: Doesn't account for variation in image complexity.

Per-Object Pricing

Charged based on number of objects annotated. An image with 50 labeled cars costs more than one with 5.

Advantage: Fairer for variable object counts. Disadvantage: Final costs uncertain until annotation is complete.

Per-Hour Pricing

Annotators bill based on time spent. Common for very complex or exploratory projects where per-unit pricing is hard to estimate.

Advantage: Flexible for unpredictable tasks. Disadvantage: Incentivizes slow work without proper oversight.

Tiered Pricing

Different rates for different annotation types or complexity levels. Bounding boxes cost less than polygon segmentation, which costs less than pixel-perfect semantic segmentation.

Advantage: Matches cost to effort required. Disadvantage: More complex to estimate and invoice.

Most providers offer custom quotes based on project specifics. Provide detailed requirements to get accurate estimates. Always ask about minimum commitments, rush fees, and revision policies.
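To see how the per-unit models diverge on the same dataset, the arithmetic can be sketched in a few lines. Every rate and volume below is a made-up placeholder in cents, not a market price, and the function names are illustrative.

```python
def per_image_cost(num_images, rate_per_image):
    """Flat rate: every image costs the same."""
    return num_images * rate_per_image

def per_object_cost(object_counts, rate_per_object):
    """`object_counts` holds the number of labeled objects in each image."""
    return sum(object_counts) * rate_per_object

def tiered_cost(volumes, rates):
    """`volumes` and `rates` map annotation type -> unit count / unit rate."""
    return sum(volumes[kind] * rates[kind] for kind in volumes)

# Placeholder numbers only (cents): three images with 50, 5, and 12 objects.
object_counts = [50, 5, 12]
print(per_image_cost(len(object_counts), rate_per_image=40))
print(per_object_cost(object_counts, rate_per_object=4))
print(tiered_cost(
    {"bounding_box": 60, "polygon": 7},
    {"bounding_box": 3, "polygon": 15},
))
```

Running the same sample of images through each model a provider offers makes it easier to see which quote is sensitive to crowded scenes and which is not.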

When In-House Annotation Makes Sense

Outsourcing isn't always the answer. Some situations favor building internal annotation capabilities.

Highly sensitive data that can't leave the organization for legal, competitive, or ethical reasons might require in-house annotation. Healthcare data subject to HIPAA, classified government imagery, or proprietary product designs fall into this category.

Continuous, ongoing annotation needs with relatively stable volume justify the fixed costs of an in-house team. If annotation is a permanent operational requirement rather than project-based, the economics shift.

Projects requiring deep proprietary domain knowledge that's difficult to transfer externally sometimes work better in-house. When annotators need weeks of specialized training, building internal expertise makes more sense.

Real-time or extremely tight feedback loops between model development and annotation benefit from integrated teams. If machine learning engineers need to iterate on annotation schemas daily, in-house teams offer more agility.

That said, many organizations successfully use hybrid approaches—maintaining small in-house teams for sensitive or exploratory work while outsourcing high-volume production labeling.

Future Trends in Annotation Outsourcing

The annotation industry continues evolving as AI advances and demand grows.

Automation-assisted annotation is expanding. Tools now offer pre-labeling using existing models, with human annotators correcting and refining rather than labeling from scratch. This speeds up work and reduces costs while maintaining quality through human oversight.

Active learning strategies help prioritize which images need annotation. Instead of labeling entire datasets randomly, systems identify the most informative examples—those where the model is most uncertain or where labels would improve performance most.
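One common form of this is uncertainty sampling: rank unlabeled images by the entropy of the model's predicted class probabilities and send the highest-entropy ones to annotators first. The sketch below assumes the probabilities are already available as plain lists; the function names, file names, and budget are hypothetical, and entropy is only one of several possible uncertainty measures.

```python
import math

def entropy(probs):
    """Shannon entropy (natural log) of one predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def select_for_annotation(predictions, budget):
    """Return the `budget` image IDs with the most uncertain predictions.

    `predictions` maps image ID -> list of class probabilities.
    """
    ranked = sorted(predictions, key=lambda img: entropy(predictions[img]), reverse=True)
    return ranked[:budget]

# Hypothetical model outputs over three classes.
predictions = {
    "img_a.jpg": [0.98, 0.01, 0.01],  # confident, low value to label
    "img_b.jpg": [0.40, 0.35, 0.25],  # very uncertain
    "img_c.jpg": [0.70, 0.20, 0.10],  # somewhat uncertain
}
print(select_for_annotation(predictions, budget=2))
```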

Synthetic data generation reduces annotation needs for some applications. Computer graphics can create labeled training data for scenarios difficult or expensive to capture and annotate manually.

Organizations increasingly recognize annotation as a strategic function requiring formal governance, not just a commodity service. This drives more sophisticated vendor management and quality frameworks.

The annotation workforce is professionalizing. According to research on annotation work practices, professional annotation centers are employing more educated workers, often recent graduates, and developing career paths in annotation rather than treating it purely as low-skill labor.

Making Outsourcing Work

Image annotation outsourcing offers compelling advantages for most AI and machine learning projects. Cost savings, scalability, access to expertise, and faster time-to-market make it the practical choice for organizations focused on building models rather than managing annotation operations.

Success requires treating outsourcing as a partnership rather than a transaction. Clear communication, detailed guidelines, pilot testing, and continuous quality monitoring separate successful projects from problematic ones.

The annotation industry has matured significantly. Professional providers employ sophisticated quality control processes, experienced teams, and proven workflows. The market offers options for every budget and requirement—from low-cost crowdsourcing to premium specialized services.

Start small. Run pilots with multiple providers to evaluate quality, communication, and fit. Scale successful partnerships gradually while maintaining quality oversight. Build feedback loops that continuously improve guidelines and processes.

The quality of training data fundamentally determines model performance. Investing time in finding the right annotation partner and establishing robust quality processes pays dividends throughout the model lifecycle. Poor annotations create technical debt that compounds as models are deployed and iterated.

Ready to outsource your annotation project? Define requirements clearly, evaluate providers thoroughly, pilot cautiously, and scale confidently. The right partner transforms annotation from a bottleneck into a competitive advantage.
