Outsourcing AI engineers grants businesses access to specialized technical talent without long-term overhead, typically reducing development costs by 30-50%. Success depends on choosing the right engagement model (staff augmentation, dedicated teams, or project-based), vetting partners for technical depth and communication skills, and maintaining clear ownership over strategy and architecture while external teams execute implementation.

Building AI capabilities internally is getting harder. According to MIT Sloan Management Review research from 2019, while nine out of ten executives recognize AI as a business opportunity, 40% of organizations making significant AI investments do not report business gains from AI. The gap isn't just about technology—it's about access to the right engineering talent.

Most companies don't have benches stacked with machine learning engineers, computer vision specialists, or NLP experts. Hiring full-time AI talent means competing with tech giants offering million-dollar compensation packages. And even when companies manage to hire, they're locked into year-round headcount for projects that might only need intense engineering effort for 3-6 months.

Outsourcing AI engineers solves this by providing on-demand access to specialized skills. But it's not about finding the cheapest developers. The wrong partner can burn a budget on proof-of-concepts that never scale, create technical debt that cripples future development, or build models that don't align with actual business needs.

Here's what actually works when outsourcing AI engineering talent in 2026.

What AI Engineering Outsourcing Actually Covers

AI outsourcing isn't one thing. It spans everything from tactical data labeling to full-stack AI product development. Understanding the scope helps match the right engagement model to specific needs.

Data preparation and annotation represent the foundation layer. Engineers clean datasets, label training data, handle data augmentation, and build pipelines that feed models. This work is labor-intensive but essential—models trained on poorly prepared data fail in production regardless of algorithmic sophistication.

Model development sits at the core. External teams design architectures, select appropriate algorithms, train models, tune hyperparameters, and validate performance. For companies building custom solutions rather than using off-the-shelf APIs, this is where specialized expertise matters most.

MLOps and deployment turn experimental models into production systems. Engineers build serving infrastructure, implement monitoring, create retraining pipelines, optimize inference performance, and integrate models into existing applications. Successful deployment requires coordinating technical implementation with business processes.

End-to-end AI product development bundles everything together. External teams own the entire stack from problem definition through production deployment, handling architecture decisions, development, testing, and launch.

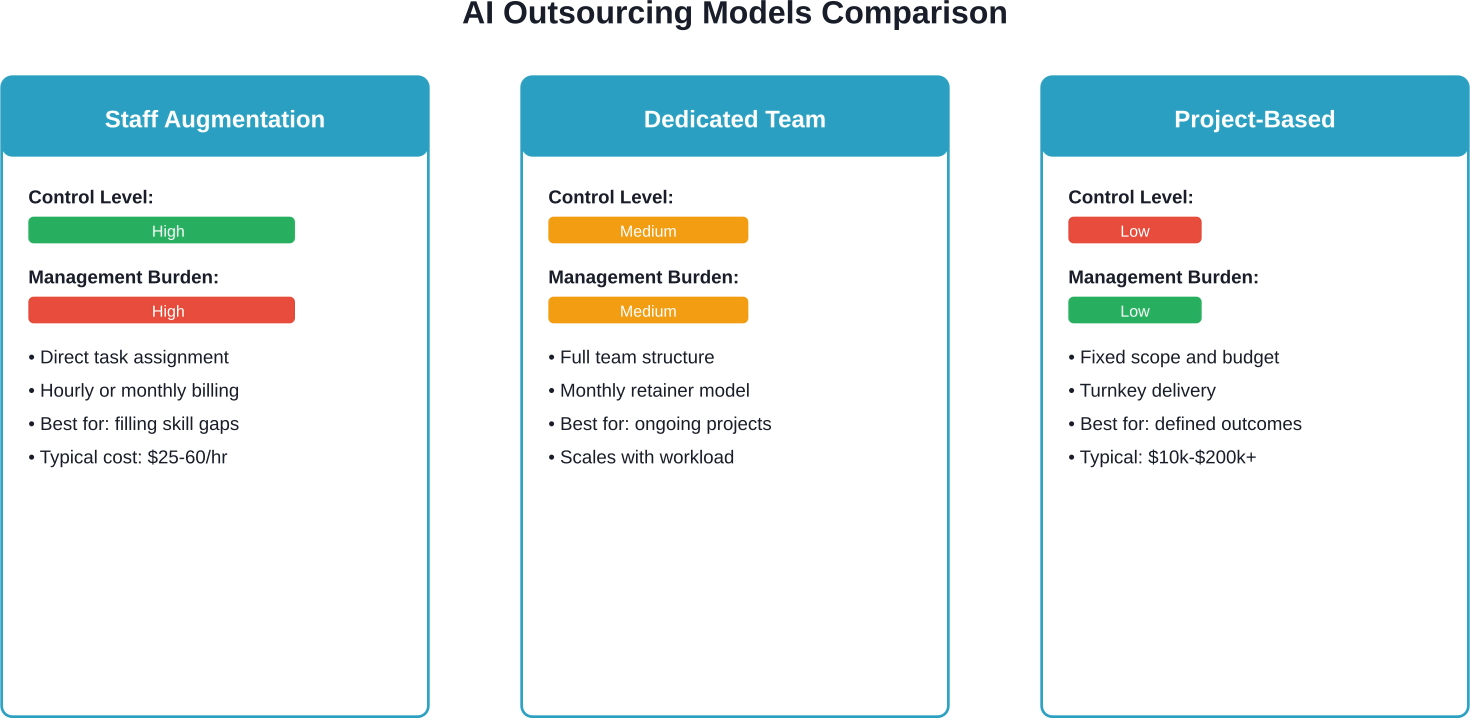

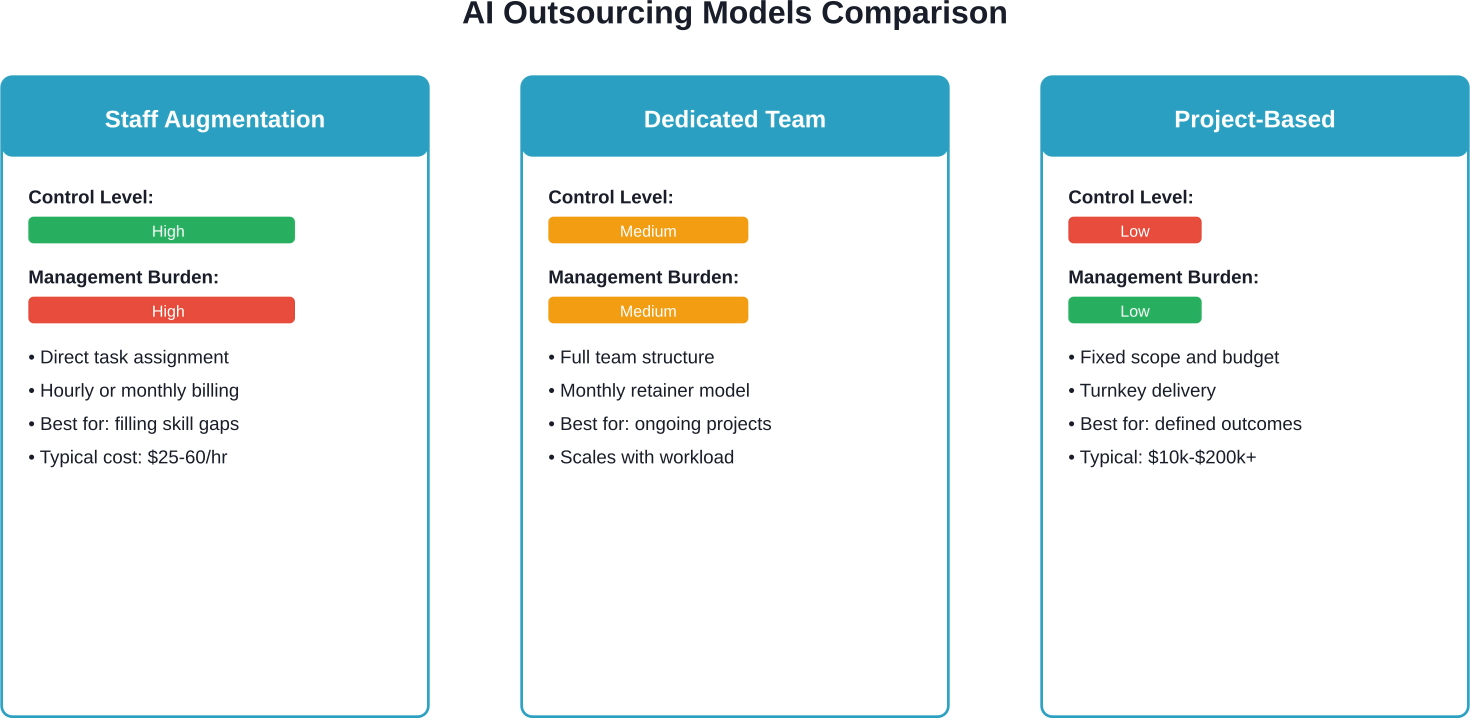

The Three Outsourcing Models That Actually Work

How teams are structured determines success more than individual developer skills. Three models dominate AI engineering outsourcing, each suited to different situations.

Staff Augmentation: Individual Engineers on Demand

Staff augmentation embeds external engineers directly into internal teams. These developers attend daily standups, use internal tools, and report to internal managers just like full-time employees.

This model works when teams need specific skills for defined periods. A company building computer vision capabilities might hire a CV specialist for six months to lead implementation, then transition to an internal engineer for maintenance. The external engineer fills the gap without forcing a permanent headcount increase.

Control is high: internal teams assign tasks, set priorities, and direct work. But the management burden is also high, because internal leads handle performance management, task allocation, and coordination. According to industry data, hourly rates for AI developers in the Indian market typically fall between $25 and $60.

The challenge? Finding engineers who integrate smoothly. Technical skills matter, but so do communication abilities and cultural fit. A brilliant ML engineer who can't collaborate effectively creates more problems than they solve.

Dedicated Teams: Complete Engineering Units

Dedicated teams provide complete engineering units—developers, data scientists, QA engineers, and technical leads—managed externally but aligned to internal projects. The team works exclusively on one client's projects under a monthly retainer structure.

This model suits ongoing development where scope evolves. Instead of defining every feature upfront, companies set strategic direction and let the dedicated team handle execution. The external provider manages hiring, performance, and team dynamics while internal stakeholders focus on product direction.

Control sits between staff augmentation and project-based work. Internal teams define what to build but not exactly how. Management burden drops because the external provider handles day-to-day coordination, though strategic oversight remains internal.

Dedicated teams scale more easily than staff augmentation. Need to add capacity during a critical sprint? The provider can add developers without internal teams managing recruitment. Projects slowing down? Scale back without severance or HR complications.

Project-Based: Fixed Scope, Fixed Price

Project-based outsourcing hands complete initiatives to external teams. Requirements are defined, budgets are set, and the external partner delivers a finished product. Internal teams review milestones and provide feedback, but don't manage daily execution.

This works for well-defined projects with clear success criteria. Building a recommendation engine with specified accuracy targets? A chatbot with defined conversation flows? Document classification with known categories? Project-based engagement can work.

According to industry cost data, simple proof-of-concept projects can start from tens of thousands of dollars, while enterprise-grade AI platforms can reach several hundred thousand. Outsourcing typically reduces total costs by 30-50% compared to in-house development.

The risk is scope creep. AI projects often uncover unexpected challenges during development. A fixed-price contract creates tension when reality diverges from initial specifications. The best project-based engagements include explicit mechanisms for handling scope changes without relationship-damaging disputes.

Where AI Outsourcing Creates Real Value

Not every AI initiative benefits equally from outsourcing. Some use cases align naturally with external teams, while others demand internal ownership.

Customer service automation sees heavy outsourcing. Building chatbots, implementing intent classification, creating knowledge base search systems—these projects have well-established patterns. External teams bring experience from dozens of similar implementations, avoiding common pitfalls that internal teams discover through expensive trial and error.

Predictive analytics for business operations benefits from external expertise. Demand forecasting, churn prediction, inventory optimization, and sales pipeline analysis follow similar modeling approaches across industries. Outsourced teams apply proven techniques without reinventing foundational work.

Document processing and OCR leverage specialized skills that most companies don't maintain internally. Invoice extraction, contract analysis, form processing, and document classification require deep expertise in computer vision and NLP. Outsourcing makes sense unless document processing is core business functionality.

Computer vision applications—from quality control inspection to security monitoring—often involve external teams for initial development. These projects require specialized knowledge of image preprocessing, model architectures, and optimization techniques that general software engineers typically lack.

But strategic AI that defines competitive advantage? That usually demands internal ownership. If an AI capability creates fundamental differentiation, keeping development in-house protects both intellectual property and strategic control. Outsource execution, but own the architecture and strategic direction.

Real Costs: What AI Engineering Outsourcing Actually Runs

Pricing varies dramatically based on engagement model, team location, project complexity, and required expertise. Understanding realistic cost ranges prevents budget surprises.

Location significantly impacts pricing. Eastern European developers typically charge more than Indian engineers but less than Latin American teams. Philippine talent often provides middle-ground pricing with strong English communication skills and cultural alignment to Western business practices.

Expertise level matters as much as location. A senior ML engineer with experience deploying production models at scale commands 2-3x the rate of a junior developer still learning frameworks. For complex projects, that premium pays for itself through faster development and fewer architectural mistakes.

Hidden costs deserve attention. Time zone differences create coordination overhead. A team in India working with stakeholders in California faces limited real-time overlap, slowing decision-making and feedback cycles. Latin American teams align directly with US time zones—a 10 am standup is 10 am for everyone—reducing coordination friction.
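
The coordination cost of time zones can be estimated before signing a contract. The sketch below is a rough illustration, not a scheduling tool: it assumes every team works 9 am to 5 pm local time and uses fixed winter UTC offsets, ignoring daylight saving time. The names `work_window` and `overlap_hours` are hypothetical.

```python
from datetime import date, datetime, time, timedelta, timezone

# Fixed UTC offsets, assumed for illustration; daylight saving time is ignored.
IST = timezone(timedelta(hours=5, minutes=30))  # India
PST = timezone(timedelta(hours=-8))             # California, winter
EST = timezone(timedelta(hours=-5))             # US East Coast, winter
COT = timezone(timedelta(hours=-5))             # Colombia


def work_window(day, tz, start_hour=9, end_hour=17):
    """Return the (start, end) of a local working day as aware datetimes."""
    start = datetime.combine(day, time(start_hour), tzinfo=tz)
    end = datetime.combine(day, time(end_hour), tzinfo=tz)
    return start, end


def overlap_hours(day, tz_a, tz_b):
    """Hours of team B's working time that fall inside team A's working day."""
    a_start, a_end = work_window(day, tz_a)
    total = timedelta()
    # Team B's previous, same, and next calendar day can each intersect A's window.
    for offset in (-1, 0, 1):
        b_start, b_end = work_window(day + timedelta(days=offset), tz_b)
        shared = min(a_end, b_end) - max(a_start, b_start)
        if shared > timedelta():
            total += shared
    return total.total_seconds() / 3600


day = date(2026, 1, 15)
print("California / India:", overlap_hours(day, PST, IST))
print("US East Coast / Colombia:", overlap_hours(day, EST, COT))
```

Under these assumed schedules, the California and India pairing shares no working hours at all, while the East Coast and Colombia pairing shares the full eight. Any real-time contact in the first case means someone works outside a standard day.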

According to industry data, North America accounts for approximately 40% of global outsourcing spending. With outsourcing, salaries and benefits become variable costs scaled to project needs rather than fixed headcount locked in year-round.

Vetting AI Engineering Partners: What Actually Matters

Technical capabilities are table stakes. Every outsourcing firm claims AI expertise. Separating real capability from marketing requires looking past portfolios and certifications.

Technical Depth Beyond Frameworks

Ask about specific challenges they've solved, not just frameworks they know. Any developer can list TensorFlow and PyTorch on a resume. Fewer can explain how they optimized inference latency for a recommendation system serving millions of users, or how they handled class imbalance in a fraud detection model with 1000:1 negative-to-positive ratios.
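
The class imbalance question is a useful probe because headline accuracy is nearly meaningless at a 1000:1 ratio. The sketch below uses a hypothetical dataset and a deliberately useless "model" that never flags fraud, to show the kind of answer a strong candidate should give unprompted.

```python
def evaluate(y_true, y_pred):
    """Return accuracy, precision, and recall for binary labels (1 = fraud)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall}


# Hypothetical data: 1,000 legitimate transactions for every fraudulent one.
y_true = [0] * 10_000 + [1] * 10
always_legit = [0] * len(y_true)  # a "model" that never flags anything

metrics = evaluate(y_true, always_legit)
print(f"accuracy={metrics['accuracy']:.4f} recall={metrics['recall']:.2f}")
```

The do-nothing model scores roughly 99.9% accuracy while catching zero fraud. Engineers with production experience tend to steer the conversation toward recall, precision, and the cost of each error type rather than accuracy.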

Request examples of models they've deployed to production. Many teams build impressive demos that never face real users. Production deployment reveals understanding of monitoring, retraining pipelines, performance optimization, and graceful degradation when models encounter edge cases.

Evaluate their approach to data quality. Models fail more often from data issues than algorithmic problems. Teams that ask detailed questions about data sources, labeling processes, and validation strategies understand what actually matters. Teams that jump straight to model selection haven't deployed enough systems to production.

Communication and Collaboration Skills

Technical brilliance without communication ability creates expensive failures. Technical teams and business stakeholders often speak different languages, creating situations where technically impressive systems may not solve actual problems.

Test communication during evaluation. Schedule technical discussions where engineers explain past projects. Strong teams communicate clearly, ask clarifying questions, and flag potential issues proactively. Weak teams provide vague answers, avoid direct questions, and wait for explicit instructions rather than offering guidance.

Assess proactive problem-solving. Present a hypothetical challenge similar to actual needs and evaluate their approach. Do they jump to solutions or ask questions to understand constraints? Do they identify potential risks or promise everything is simple? AI projects always uncover unexpected complexity—teams that acknowledge this upfront handle reality better than those that overpromise.

Process Maturity and DevOps Capabilities

Successful teams demonstrate mature development processes including version control for models and data, automated testing pipelines, and clear documentation practices.

Evaluate their MLOps maturity. Ask about model versioning, experiment tracking, automated retraining, and monitoring strategies. Teams with production experience have systematic approaches. Teams that treat AI development like traditional software lack the specialized practices that make ML systems reliable.
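
One concrete thing to ask for is how a model version is tied to the exact data and settings that produced it. The sketch below shows one simple approach, assuming small in-memory data: hash the training rows together with the hyperparameters. The `fingerprint` helper is hypothetical, and mature teams typically rely on dedicated experiment-tracking tooling instead.

```python
import hashlib
import json


def fingerprint(data_rows, params):
    """Derive a short, deterministic version id from training data and hyperparameters."""
    # sort_keys makes the id independent of dictionary key order.
    payload = json.dumps({"data": data_rows, "params": params}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


# Hypothetical training rows and hyperparameters.
rows = [[1.0, 2.0, 0], [3.0, 4.0, 1]]
v1 = fingerprint(rows, {"learning_rate": 0.01, "epochs": 10})
v2 = fingerprint(rows, {"epochs": 10, "learning_rate": 0.01})  # same settings, different order
v3 = fingerprint(rows, {"learning_rate": 0.02, "epochs": 10})  # one setting changed

print(v1 == v2)  # identical inputs give the same version id
print(v1 == v3)  # any change in data or settings gives a new id
```

A provider that cannot explain an equivalent of this link between model, data, and configuration will struggle to reproduce or roll back a deployed model.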

Check their deployment experience across environments. Cloud-native deployment, on-premise installation, edge deployment, and hybrid approaches each create unique challenges. Teams experienced with target deployment environments avoid costly architectural mistakes discovered late in development.

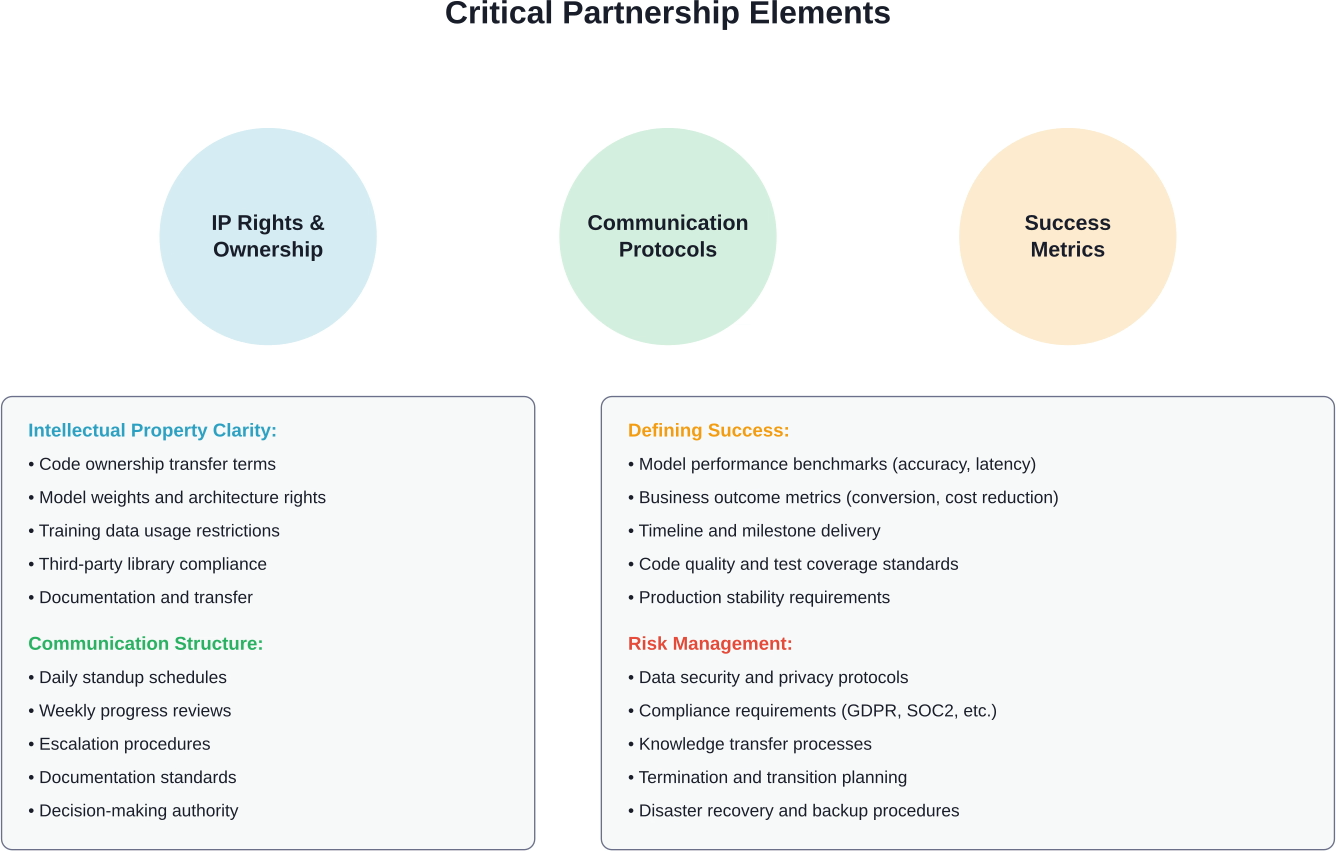

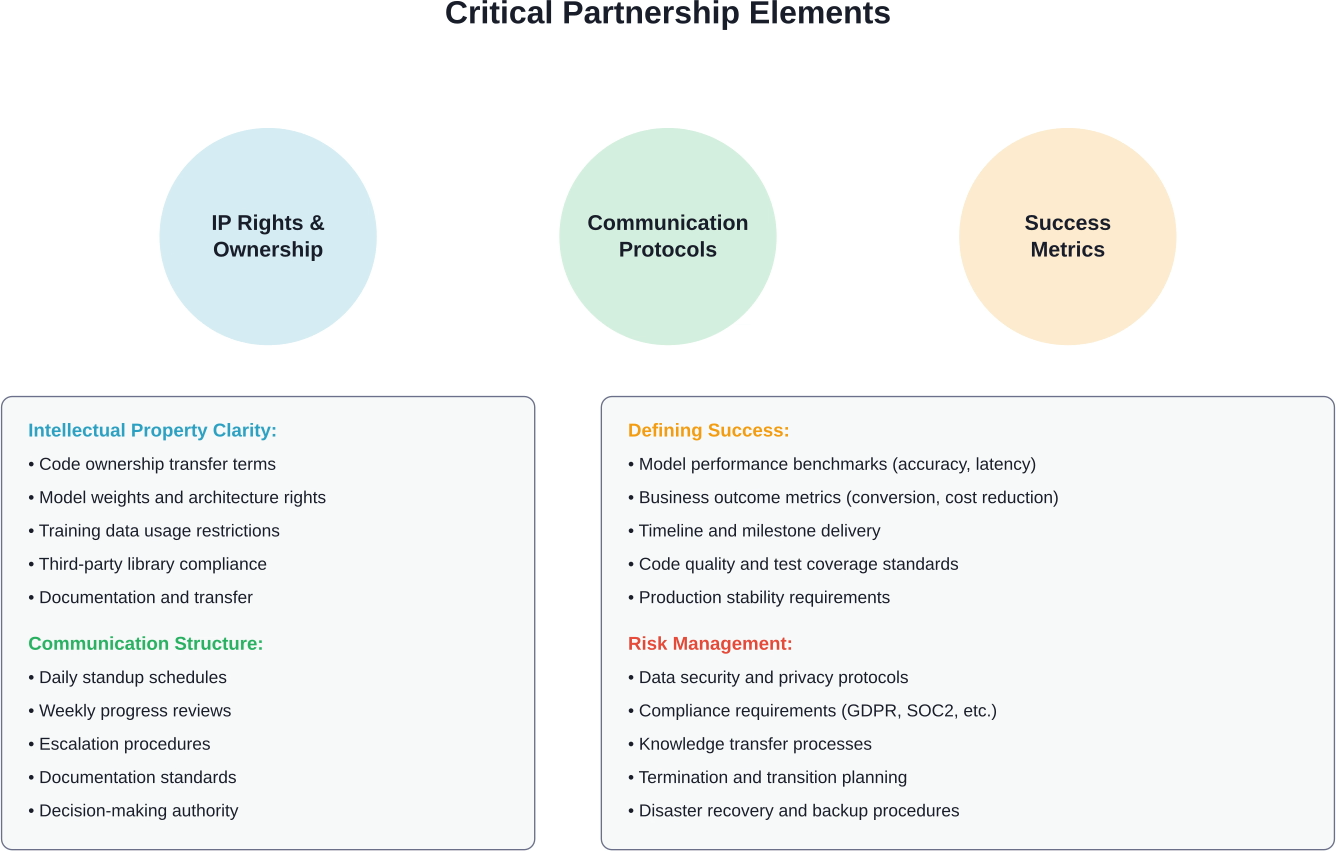

Getting the Partnership Structure Right

Even technically excellent teams fail without proper partnership structures. Clear agreements on ownership, communication, and success metrics prevent misalignment.

Intellectual Property and Code Ownership

Clarify IP ownership before starting work. Default assumptions vary—some contracts give clients full ownership of all code and models, others reserve rights to reuse components across projects, and some create shared ownership of novel techniques developed during engagement.

For most businesses, full ownership of code, models, and documentation is critical. The contract should explicitly transfer all IP rights upon payment. Watch for clauses that allow providers to retain rights to "general methodologies" or "reusable components"—these can create complications if trying to patent innovations or protect competitive advantages.

Address training data rights separately. If using client-provided data, confirm the provider can't retain or reuse that data for other projects. If the provider supplies training data, clarify usage rights and any ongoing licensing requirements.

Communication Cadence and Decision Authority

Establish regular communication rhythms. Daily standups keep development aligned with priorities. Weekly sprint reviews ensure stakeholders see progress and provide course corrections. Monthly strategic reviews evaluate whether the project direction still matches business needs.

Define decision-making authority explicitly. Who approves architectural changes? Who can adjust scope? Who handles trade-offs between accuracy and latency? Ambiguity creates delays and frustration as teams wait for approvals or make assumptions that later need reversal.

Create escalation paths for blockers. AI projects encounter unexpected challenges—data quality issues, performance bottlenecks, integration complications. Clear escalation procedures prevent small issues from becoming major delays.

Success Metrics Beyond Model Accuracy

Technical metrics like model accuracy matter, but business outcomes matter more. A recommendation engine with 95% accuracy that doesn't increase conversion rates has failed regardless of technical sophistication. Define success in business terms.

Specify performance requirements explicitly. Accuracy targets, inference latency limits, throughput requirements, resource consumption constraints—each impacts architectural decisions. Discovering performance requirements after development wastes effort rebuilding systems that can't meet production needs.
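
Writing requirements down as checkable values makes them harder to overlook. The sketch below shows how the targets listed above might be captured and tested at each milestone. The field names and every number are hypothetical placeholders, not recommended thresholds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceptanceCriteria:
    """Contractual targets; every number used below is a hypothetical placeholder."""
    min_accuracy: float
    max_p95_latency_ms: float
    min_throughput_rps: float
    max_memory_mb: float


def check(criteria, measured):
    """Compare measured results to the targets and list every miss."""
    failures = []
    if measured["accuracy"] < criteria.min_accuracy:
        failures.append(f"accuracy {measured['accuracy']} is below {criteria.min_accuracy}")
    if measured["p95_latency_ms"] > criteria.max_p95_latency_ms:
        failures.append(f"p95 latency {measured['p95_latency_ms']} ms exceeds {criteria.max_p95_latency_ms} ms")
    if measured["throughput_rps"] < criteria.min_throughput_rps:
        failures.append(f"throughput {measured['throughput_rps']} rps is below {criteria.min_throughput_rps} rps")
    if measured["memory_mb"] > criteria.max_memory_mb:
        failures.append(f"memory {measured['memory_mb']} MB exceeds {criteria.max_memory_mb} MB")
    return failures


criteria = AcceptanceCriteria(
    min_accuracy=0.92, max_p95_latency_ms=150, min_throughput_rps=200, max_memory_mb=2048
)
measured = {"accuracy": 0.94, "p95_latency_ms": 210, "throughput_rps": 260, "memory_mb": 1800}

failures = check(criteria, measured)
print(failures or "all criteria met")
```

In this example the model clears the accuracy target but misses the latency limit, which is exactly the kind of gap that is cheap to catch at a milestone review and expensive to discover after launch.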

Include operational metrics. Model monitoring capabilities, retraining pipeline functionality, debugging tools, and documentation quality all determine long-term success. A model that performs well initially but degrades over time without clear retraining processes creates ongoing problems.
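
A degradation check can be very small. The sketch below is a minimal illustration, assuming ground-truth labels eventually arrive for each prediction: it tracks rolling accuracy and flags when it falls too far below the accuracy measured at launch. The class name, window size, and tolerance are assumptions for the example.

```python
from collections import deque


class AccuracyMonitor:
    """Flag a model for retraining when rolling accuracy drops below baseline."""

    def __init__(self, baseline, tolerance=0.05, window=100):
        self.baseline = baseline      # accuracy measured at launch
        self.tolerance = tolerance    # acceptable drop before raising a flag
        self.outcomes = deque(maxlen=window)  # keeps only the most recent results

    def record(self, prediction, actual):
        self.outcomes.append(prediction == actual)

    def needs_retraining(self):
        # Wait for a full window so a few early errors do not trigger a flag.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        rolling = sum(self.outcomes) / len(self.outcomes)
        return rolling < self.baseline - self.tolerance


monitor = AccuracyMonitor(baseline=0.90, tolerance=0.05, window=10)
for correct in [True] * 9 + [False]:
    monitor.record(prediction=1, actual=1 if correct else 0)
print(monitor.needs_retraining())  # rolling accuracy still matches the baseline

for correct in [True] * 7 + [False] * 3:
    monitor.record(prediction=1, actual=1 if correct else 0)
print(monitor.needs_retraining())  # rolling accuracy has dropped to 0.7
```

Whatever form it takes, the contract should name who owns this check after handoff and what happens when it fires.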

Outsource AI Engineers Without the Turnover Problem

When you outsource AI engineering, continuity matters as much as skill. NeoWork builds dedicated remote teams that integrate into your workflows and stay long enough to understand your models, data pipelines, and product roadmap. With a 91% annualized teammate retention rate and a 3.2% candidate selectivity rate, the approach is selective hiring and long-term team stability, not volume staffing.

If you want outsourced AI engineers who stay, learn your system, and contribute beyond short contracts, connect with NeoWork and build a team designed for sustained delivery.

Common Failure Modes and How to Avoid Them

Most AI outsourcing failures follow predictable patterns. Understanding these helps structure engagements to avoid common pitfalls.

The Scope Creep Spiral

AI projects uncover unexpected complexity. Initial requirements seem clear until development reveals data quality issues, edge cases, or performance constraints that force architectural changes. Without clear processes for handling scope changes, projects balloon beyond budgets or create adversarial client-provider relationships.

Prevent this by building scope change mechanisms into contracts. Define core requirements that are truly fixed versus areas where discovery is expected. Create processes for evaluating change requests based on business value versus cost. Treat scope evolution as normal rather than exceptional.

Use phased approaches for uncertain projects. A discovery phase evaluates data quality, technical feasibility, and architectural options before committing to full development. This upfront investment prevents expensive mistakes and creates realistic scope definitions.

Communication Breakdowns

When engineers report in technical metrics and stakeholders think in business outcomes, a technically impressive system can still fail to solve the actual problem.

Bridge this through explicit translation. Technical leads should frame updates in business terms—not "we improved F1 score from 0.82 to 0.87" but "we reduced false positives by 30%, meaning customer service handles 40 fewer incorrect escalations per day."
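
The translation is simple arithmetic once confusion-matrix counts are available. The sketch below uses hypothetical daily counts, chosen only so that they reproduce the figures quoted above. Real counts would come from the team's evaluation logs.

```python
def f1_score(tp, fp, fn):
    """F1 from true positives, false positives, and false negatives."""
    return 2 * tp / (2 * tp + fp + fn)


# Hypothetical daily counts for an escalation classifier, before and after a model update.
before = {"tp": 400, "fp": 133, "fn": 43}
after = {"tp": 413, "fp": 93, "fn": 30}

fewer_escalations = before["fp"] - after["fp"]
fp_reduction = fewer_escalations / before["fp"]

# The technical framing.
print(f"F1: {f1_score(**before):.2f} -> {f1_score(**after):.2f}")
# The business framing of the same change.
print(f"False positives down {fp_reduction:.0%}: "
      f"{fewer_escalations} fewer incorrect escalations per day")
```

Both lines describe the same model update. Only the second tells a stakeholder what changed for the customer service team.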

Include business stakeholders in sprint reviews. Seeing working functionality regularly keeps everyone aligned and surfaces misunderstandings early when they're cheap to fix.

Knowledge Transfer Failures

External teams build systems, then hand them over. Without proper knowledge transfer, internal teams can't maintain, debug, or enhance what was delivered. The system becomes a black box that requires ongoing external support for even minor changes.

Build knowledge transfer into project plans from the start. Documentation, code comments, architecture diagrams, and debugging guides should be deliverables with the same priority as working code. Schedule training sessions where external teams walk internal engineers through system components.

Consider hybrid approaches where external teams build initial systems but internal engineers work alongside them, gradually taking ownership. This creates smoother transitions than abrupt handoffs.

Data Quality Surprises

Teams discover data quality issues after significant development investment. Labels are inconsistent, datasets have sampling biases, critical features are missing, or data volumes are insufficient for reliable models. These discoveries force expensive rework or project abandonment.

Invest in data assessment before development starts. External teams should analyze data quality, identify gaps, and flag risks upfront. This might delay project starts but prevents costlier delays later.
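
A first-pass data assessment does not need heavy tooling. The sketch below checks three of the issues named above (missing values, class balance, and inconsistent labels) on a hypothetical support-ticket dataset. The `audit` function and the field names `text` and `label` are assumptions for the example.

```python
from collections import Counter, defaultdict


def audit(records, label_key="label", text_key="text"):
    """Summarise missing values, class balance, and conflicting labels."""
    rows_with_missing = sum(
        1 for r in records if any(v is None or v == "" for v in r.values())
    )
    class_counts = Counter(r[label_key] for r in records if r.get(label_key))

    # The same input text carrying different labels signals inconsistent annotation.
    labels_by_text = defaultdict(set)
    for r in records:
        if r.get(text_key) and r.get(label_key):
            labels_by_text[r[text_key]].add(r[label_key])
    conflicts = sorted(t for t, labels in labels_by_text.items() if len(labels) > 1)

    return {
        "rows": len(records),
        "rows_with_missing": rows_with_missing,
        "class_counts": dict(class_counts),
        "conflicting_texts": conflicts,
    }


# Hypothetical sample of labeled support tickets.
records = [
    {"text": "invoice 1042 overdue", "label": "billing"},
    {"text": "reset my password", "label": "account"},
    {"text": "reset my password", "label": "billing"},  # conflicts with the row above
    {"text": "refund request", "label": None},          # missing label
    {"text": "update card details", "label": "billing"},
]

report = audit(records)
print(report)
```

A report like this, run on a real sample before the contract is signed, gives both sides a shared and concrete view of the data risk.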

For projects with uncertain data quality, structure initial phases around data exploration and validation. Confirm data can support planned models before committing to full development.

The Strategic Layer: What to Keep Internal

Successful outsourcing isn't about delegating everything—it's about strategic division of labor. Some elements should always remain internal regardless of external team quality.

Problem definition stays internal. External teams can implement solutions, but internal stakeholders must understand business problems, evaluate whether AI is the right approach, and define success criteria. Outsourcing this creates systems that solve the wrong problems brilliantly.

Data strategy remains internal. Decisions about what data to collect, how to label it, what quality standards to enforce, and how to govern usage all require deep business context that external teams lack. External teams can execute data strategy, but shouldn't define it.

Architectural authority stays internal for strategic systems. External teams can propose architectures and implement them, but final architectural decisions should involve internal technical leadership who understand long-term system evolution and integration requirements.

Product roadmap ownership is a non-negotiable internal responsibility. External teams can inform roadmaps with technical insights, but internal stakeholders must drive prioritization based on business strategy, competitive positioning, and market opportunities.

Research on AI outsourcing indicates that even when tasks are automated, maintaining strategic oversight and direction remains important. According to research, AI can generate in two to three hours code that previously took software engineers ten hours to write. Determining what code to write, and why, still requires human judgment.

2026 Trends Reshaping AI Outsourcing

The AI outsourcing landscape continues evolving. Several trends are reshaping how companies access external AI talent and structure partnerships.

Specialized Vertical Expertise

Generic AI development firms are losing ground to specialized providers with deep vertical expertise. Healthcare AI requires understanding HIPAA compliance, clinical workflows, and medical terminology. Financial services AI demands knowledge of regulatory requirements, fraud patterns, and risk management frameworks.

Companies increasingly prioritize providers with proven track records in their industry over general AI expertise. A team that has deployed fraud detection systems for banks brings practical knowledge that generic ML engineers lack, even if the latter have stronger academic credentials.

Hybrid Engagement Models

Pure staff augmentation, dedicated teams, and project-based engagements are blending into hybrid models. Companies might use dedicated teams for ongoing development but bring in specialized contractors for specific challenges like model optimization or compliance implementation.

These hybrid approaches provide flexibility—scaling teams up for intensive development periods, then down for maintenance phases, while maintaining continuity through core team members.

Energy and Infrastructure Considerations

According to analysis of AI infrastructure, the IEA forecasts that US data center electricity demand will reach around 420–426 TWh by 2030, more than double the 2024 level of roughly 183 TWh, and account for about 8–9% of total US electricity.

This impacts outsourcing decisions. Companies are evaluating not just development costs but also long-term operational costs including compute infrastructure and energy consumption. Providers that demonstrate expertise in model optimization, efficient architectures, and cost-effective deployment gain advantages.

Emphasis on Production Reliability

The bar for production AI systems is rising. According to MIT Sloan Management Review research on AI success factors, early AI winners align organizational and business strategies to build value while managing risk. Demo-quality models no longer suffice—systems must demonstrate production reliability, monitoring capabilities, and graceful degradation.

Outsourcing partners are differentiated by MLOps maturity. Teams that treat deployment, monitoring, and maintenance as first-class concerns rather than afterthoughts command premium pricing and win strategic engagements.

AI-Augmented Development Workflows

External AI development teams now use AI coding assistants extensively in their own workflows. Some developers report productivity gains from AI coding assistants, though quality and understanding remain considerations.

For clients, this creates both opportunities and risks. Teams using AI assistants effectively can deliver faster and more cost-effectively. But teams over-relying on AI-generated code without proper review create technical debt and maintenance challenges. Evaluating how providers use AI tools and what quality controls they apply becomes increasingly important.

Building the Business Case for AI Outsourcing

Convincing stakeholders to pursue outsourcing requires demonstrating clear value beyond cost savings. Several factors strengthen the business case.

Time-to-market advantages often justify outsourcing even when internal development is possible. External teams with experience in similar projects ship faster because they avoid the mistakes internal teams make while learning. In competitive markets where being first matters, this speed advantage drives value.

Risk mitigation through expertise reduces expensive failures. Internal teams experimenting with unfamiliar technologies make costly mistakes. External specialists bring battle-tested approaches that work, reducing the risk of building systems that can't scale or don't meet requirements.

Flexibility to scale engineering capacity without long-term commitments allows companies to pursue AI opportunities without permanent headcount increases. This matters particularly for initiatives with uncertain long-term resource needs.

Access to specialized skills that are difficult to hire full-time represents perhaps the strongest argument. Most companies can't justify hiring full-time computer vision specialists, NLP experts, or MLOps engineers for periodic projects. Outsourcing provides on-demand access to these skills.

Making the Build-vs-Buy-vs-Outsource Decision

Not every AI initiative needs custom development. Sometimes off-the-shelf solutions work. Sometimes internal development makes sense. Sometimes outsourcing is right. Deciding which path to take requires evaluating several factors.

When AI capabilities are genuinely differentiating and create sustainable competitive advantages, internal development makes sense despite higher costs and longer timelines. When capabilities are commoditized and off-the-shelf solutions exist that meet needs, buying beats building. When capabilities are important but not differentiating, require customization beyond what off-the-shelf offers, and demand expertise not present internally, outsourcing provides the middle path.
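
The rule above can be written as a short decision function. This is a simplified sketch: the three boolean inputs compress judgments that are rarely clear-cut in practice, and the final fallback covers a case the rule does not address, so it is labeled as an assumption.

```python
def recommend_path(differentiating, off_the_shelf_fits, expertise_in_house):
    """Map the three questions from the rule above to build, buy, or outsource."""
    if differentiating:
        # Genuine competitive advantage: accept higher cost and longer timelines.
        return "build"
    if off_the_shelf_fits:
        # Commoditized capability with an existing product that meets the need.
        return "buy"
    if not expertise_in_house:
        # Important, needs customization, and the skills are not on staff.
        return "outsource"
    # Assumption (not stated in the rule): with the skills already in-house,
    # customization work defaults to internal development.
    return "build"


print(recommend_path(differentiating=True, off_the_shelf_fits=False, expertise_in_house=False))
print(recommend_path(differentiating=False, off_the_shelf_fits=True, expertise_in_house=False))
print(recommend_path(differentiating=False, off_the_shelf_fits=False, expertise_in_house=False))
```

Answering the questions in this order matters: differentiation is checked first, so a strategic capability stays internal even when an off-the-shelf product exists.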

Regulatory and Compliance Considerations

AI outsourcing creates compliance obligations that vary by industry and jurisdiction. Addressing these upfront prevents costly problems.

Data protection regulations like GDPR, CCPA, and industry-specific rules like HIPAA restrict where data can be processed and who can access it. Contracts with outsourcing partners must explicitly address data handling, storage locations, access controls, and deletion procedures.

For regulated industries, provider compliance certifications matter. Healthcare applications may require HITRUST certification. Financial services might demand SOC 2 Type II audits. Government contracts often require FedRAMP authorization. Verifying certifications before engagement prevents discovering compliance gaps mid-project.

Export controls on AI technologies are tightening. Depending on application domains and deployment locations, certain AI capabilities may face export restrictions. Legal review of outsourcing arrangements helps ensure compliance.

Algorithmic accountability and bias regulations are emerging. Contracts should address who bears responsibility for ensuring models meet fairness requirements, how bias testing occurs, and what happens if deployed models create discriminatory outcomes.

Measuring Outsourcing Success

Defining success metrics upfront creates accountability and enables objective evaluation of outsourcing partnerships.

Technical performance metrics include model accuracy, precision, recall, F1 scores, inference latency, throughput, and resource utilization. These should be specified with concrete targets based on business requirements, not academic benchmarks.

Business outcome metrics connect technical work to value creation. Did the recommendation engine increase conversion rates? Did the churn prediction model reduce customer attrition? Did the demand forecasting system improve inventory efficiency? Tracking these demonstrates ROI.

Project execution metrics evaluate process quality. On-time delivery rates, budget adherence, communication responsiveness, and defect rates all indicate partnership health. Consistent misses suggest problems requiring intervention.

Knowledge transfer effectiveness can be measured through internal team capability growth. Can internal engineers now handle model retraining? Can they debug issues independently? Can they extend functionality without external help? Successful outsourcing builds internal capability, not dependency.

Long-term system health metrics assess sustainability. Model performance stability over time, system uptime, incident frequency, and maintenance burden all indicate whether delivered systems are production-grade or fragile prototypes requiring constant support.

When Not to Outsource AI Engineering

Outsourcing isn't always the answer. Certain situations favor internal development despite higher costs and longer timelines.

When AI creates core competitive differentiation, internal development protects strategic advantages. If recommendation algorithms define the customer experience and competitors can't easily replicate results, keeping development internal protects that moat. Outsourcing risks leaking insights to competitors through shared provider relationships.

When data is highly sensitive and regulations restrict external access, internal development may be the only compliant path. While proper contracts and controls can enable secure outsourcing in many cases, some data sensitivity levels make internal-only development necessary.

When teams are learning AI capabilities for long-term internal growth, outsourcing can impede learning. If the goal is building internal expertise for ongoing AI work, starting with internal development (even if slower) creates learning opportunities that outsourcing doesn't provide.

When requirements are highly uncertain and exploratory, the overhead of managing external teams may exceed benefits. Early-stage exploration often involves rapid pivots, frequent changes, and high communication overhead. Internal teams handle this chaos more easily than managed external relationships.

When projects are very small, outsourcing coordination costs may exceed development costs. Simple data labeling tasks or basic classification models might take longer to specify and coordinate than to just build internally.

Taking the First Step

Getting started with AI outsourcing doesn't require massive commitments. Small pilot projects test partnerships and build confidence before scaling to larger engagements.

Identify a contained, valuable project for initial engagement. Good candidates have clear success criteria, meaningful business value, manageable scope (2-4 months), and won't cause catastrophic damage if they fail. A customer service chatbot handling common questions, a demand forecasting model for a single product category, or a document classification system for one department all work as pilots.

Run a structured evaluation process for 3-5 providers. Share the same project brief, evaluate proposals against consistent criteria, and conduct technical interviews with actual engineers who would work on the project. Request references from similar past projects and speak with those clients about their experiences.

Start with a discovery phase before committing to full development. A 2-4 week engagement where the provider analyzes data quality, validates technical approach, and creates detailed implementation plans costs relatively little but surfaces potential issues and demonstrates how the partnership will function.

Define explicit evaluation criteria for the pilot. Beyond whether the project succeeds technically, assess communication quality, responsiveness, proactive problem identification, and how well the team handles unexpected challenges. These process factors predict success for larger future engagements.

Use pilot results to refine partnership structures. Apply lessons learned about communication cadence, documentation needs, knowledge transfer processes, and contract terms to subsequent engagements. Each project teaches what works in the specific context of the business and provider.

The landscape of AI outsourcing continues to mature. As industry standards on AI strategy development indicate, bringing clarity to this rapidly evolving field requires both technical expertise and structured processes. Companies that approach outsourcing strategically, keeping internal ownership of strategy while using external expertise for execution, access AI capabilities faster and more cost-effectively than those that try to build everything internally or that delegate everything to external teams without keeping strategic control.

The key isn't whether to outsource AI engineering. It's understanding which elements to outsource, how to structure partnerships for success, and how to maintain strategic direction while leveraging external execution capability. Get that balance right, and outsourcing becomes a competitive advantage rather than a risky cost-cutting measure.

Frequently Asked Questions

Topics

Outsourcing AI Engineers: The 2026 Practical Guide

Outsourcing AI engineers grants businesses access to specialized technical talent without long-term overhead, typically reducing development costs by 30-50%. Success depends on choosing the right engagement model (staff augmentation, dedicated teams, or project-based), vetting partners for technical depth and communication skills, and maintaining clear ownership over strategy and architecture while external teams execute implementation.

Building AI capabilities internally is getting harder. According to MIT Sloan Management Review research from 2019, while nine out of ten executives recognize AI as a business opportunity, 40% of organizations making significant AI investments do not report business gains from AI. The gap isn't just about technology—it's about access to the right engineering talent.

Most companies don't have benches stacked with machine learning engineers, computer vision specialists, or NLP experts. Hiring full-time AI talent means competing with tech giants offering million-dollar compensation packages. And even when companies manage to hire, they're locked into year-round headcount for projects that might only need intense engineering effort for 3-6 months.

Outsourcing AI engineers solves this by providing on-demand access to specialized skills. But it's not about finding the cheapest developers. The wrong partner can burn a budget on proof-of-concepts that never scale, create technical debt that cripples future development, or build models that don't align with actual business needs.

Here's what actually works when outsourcing AI engineering talent in 2026.

What AI Engineering Outsourcing Actually Covers

AI outsourcing isn't one thing. It spans everything from tactical data labeling to full-stack AI product development. Understanding the scope helps match the right engagement model to specific needs.

Data preparation and annotation represent the foundation layer. Engineers clean datasets, label training data, handle data augmentation, and build pipelines that feed models. This work is labor-intensive but essential—models trained on poorly prepared data fail in production regardless of algorithmic sophistication.

Model development sits at the core. External teams design architectures, select appropriate algorithms, train models, tune hyperparameters, and validate performance. For companies building custom solutions rather than using off-the-shelf APIs, this is where specialized expertise matters most.

MLOps and deployment turn experimental models into production systems. Engineers build serving infrastructure, implement monitoring, create retraining pipelines, optimize inference performance, and integrate models into existing applications. Successful deployment requires coordinating technical implementation with business processes.

End-to-end AI product development bundles everything together. External teams own the entire stack from problem definition through production deployment, handling architecture decisions, development, testing, and launch.

The Three Outsourcing Models That Actually Work

How teams are structured determines success more than individual developer skills. Three models dominate AI engineering outsourcing, each suited to different situations.

Staff Augmentation: Individual Engineers on Demand

Staff augmentation embeds external engineers directly into internal teams. These developers attend daily standups, use internal tools, and report to internal managers just like full-time employees.

This model works when teams need specific skills for defined periods. A company building computer vision capabilities might hire a CV specialist for six months to lead implementation, then transition to an internal engineer for maintenance. The external engineer fills the gap without forcing a permanent headcount increase.

Control is high—internal teams assign tasks, set priorities, and direct work. But the management burden is also high. Internal leads handle performance management, task allocation, and coordination. According to industry data, AI developer hourly engagement in the India market averages $25-60 per hour.

The challenge? Finding engineers who integrate smoothly. Technical skills matter, but so do communication abilities and cultural fit. A brilliant ML engineer who can't collaborate effectively creates more problems than they solve.

Dedicated Teams: Complete Engineering Units

Dedicated teams provide complete engineering units—developers, data scientists, QA engineers, and technical leads—managed externally but aligned to internal projects. The team works exclusively on one client's projects under a monthly retainer structure.

This model suits ongoing development where scope evolves. Instead of defining every feature upfront, companies set strategic direction and let the dedicated team handle execution. The external provider manages hiring, performance, and team dynamics while internal stakeholders focus on product direction.

Control sits between staff augmentation and project-based work. Internal teams define what to build but not exactly how. Management burden drops because the external provider handles day-to-day coordination, though strategic oversight remains internal.

Dedicated teams scale more easily than staff augmentation. Need to add capacity during a critical sprint? The provider can add developers without internal teams managing recruitment. Projects slowing down? Scale back without severance or HR complications.

Project-Based: Fixed Scope, Fixed Price

Project-based outsourcing hands complete initiatives to external teams. Requirements are defined, budgets are set, and the external partner delivers a finished product. Internal teams review milestones and provide feedback, but don't manage daily execution.

This works for well-defined projects with clear success criteria. Building a recommendation engine with specified accuracy targets? A chatbot with defined conversation flows? Document classification with known categories? Project-based engagement can work.

According to industry cost data, simple proof-of-concept projects can start from tens of thousands of dollars, while enterprise-grade AI platforms can reach several hundred thousand. Outsourcing typically reduces total costs by 30-50% compared to in-house development.

The risk is scope creep. AI projects often uncover unexpected challenges during development. A fixed-price contract creates tension when reality diverges from initial specifications. The best project-based engagements include explicit mechanisms for handling scope changes without relationship-damaging disputes.

Where AI Outsourcing Creates Real Value

Not every AI initiative benefits equally from outsourcing. Some use cases align naturally with external teams, while others demand internal ownership.

Customer service automation sees heavy outsourcing. Building chatbots, implementing intent classification, creating knowledge base search systems—these projects have well-established patterns. External teams bring experience from dozens of similar implementations, avoiding common pitfalls that internal teams discover through expensive trial and error.

Predictive analytics for business operations benefits from external expertise. Demand forecasting, churn prediction, inventory optimization, and sales pipeline analysis follow similar modeling approaches across industries. Outsourced teams apply proven techniques without reinventing foundational work.

Document processing and OCR leverage specialized skills that most companies don't maintain internally. Invoice extraction, contract analysis, form processing, and document classification require deep expertise in computer vision and NLP. Outsourcing makes sense unless document processing is core business functionality.

Computer vision applications—from quality control inspection to security monitoring—often involve external teams for initial development. These projects require specialized knowledge of image preprocessing, model architectures, and optimization techniques that general software engineers typically lack.

But strategic AI that defines competitive advantage? That usually demands internal ownership. If an AI capability creates fundamental differentiation, keeping development in-house protects both intellectual property and strategic control. Outsource execution, but own the architecture and strategic direction.

Real Costs: What AI Engineering Outsourcing Actually Runs

Pricing varies dramatically based on engagement model, team location, project complexity, and required expertise. Understanding realistic cost ranges prevents budget surprises.

Location significantly impacts pricing. Eastern European developers typically charge more than Indian engineers but less than Latin American teams. Philippine talent often provides middle-ground pricing with strong English communication skills and cultural alignment to Western business practices.

Expertise level matters as much as location. A senior ML engineer with experience deploying production models at scale commands 2-3x the rate of a junior developer still learning frameworks. For complex projects, that premium pays for itself through faster development and fewer architectural mistakes.

Hidden costs deserve attention. Time zone differences create coordination overhead. A team in India working with stakeholders in California faces limited real-time overlap, slowing decision-making and feedback cycles. Latin American teams align directly with US time zones—a 10 am standup is 10 am for everyone—reducing coordination friction.

According to industry data, North America accounts for approximately 40% of global outsourcing spendings. Salaries and benefits become variable costs scaled to project needs rather than fixed headcount locked in year-round.

Vetting AI Engineering Partners: What Actually Matters

Technical capabilities are table stakes. Every outsourcing firm claims AI expertise. Separating real capability from marketing requires looking past portfolios and certifications.

Technical Depth Beyond Frameworks

Ask about specific challenges they've solved, not just frameworks they know. Any developer can list TensorFlow and PyTorch on a resume. Fewer can explain how they optimized inference latency for a recommendation system serving millions of users, or how they handled class imbalance in a fraud detection model with 1000:1 negative-to-positive ratios.

Request examples of models they've deployed to production. Many teams build impressive demos that never face real users. Production deployment reveals understanding of monitoring, retraining pipelines, performance optimization, and graceful degradation when models encounter edge cases.

Evaluate their approach to data quality. Models fail more often from data issues than algorithmic problems. Teams that ask detailed questions about data sources, labeling processes, and validation strategies understand what actually matters. Teams that jump straight to model selection haven't deployed enough systems to production.

Communication and Collaboration Skills

Technical brilliance without communication ability creates expensive failures. Technical teams and business stakeholders often speak different languages, creating situations where technically impressive systems may not solve actual problems.

Test communication during evaluation. Schedule technical discussions where engineers explain past projects. Strong teams communicate clearly, ask clarifying questions, and flag potential issues proactively. Weak teams provide vague answers, avoid direct questions, and wait for explicit instructions rather than offering guidance.

Assess proactive problem-solving. Present a hypothetical challenge similar to actual needs and evaluate their approach. Do they jump to solutions or ask questions to understand constraints? Do they identify potential risks or promise everything is simple? AI projects always uncover unexpected complexity—teams that acknowledge this upfront handle reality better than those that overpromise.

Process Maturity and DevOps Capabilities

Successful teams demonstrate mature development processes including version control for models and data, automated testing pipelines, and clear documentation practices.

Evaluate their MLOps maturity. Ask about model versioning, experiment tracking, automated retraining, and monitoring strategies. Teams with production experience have systematic approaches. Teams that treat AI development like traditional software lack the specialized practices that make ML systems reliable.

Check their deployment experience across environments. Cloud-native deployment, on-premise installation, edge deployment, and hybrid approaches each create unique challenges. Teams experienced with target deployment environments avoid costly architectural mistakes discovered late in development.

Getting the Partnership Structure Right

Even technically excellent teams fail without proper partnership structures. Clear agreements on ownership, communication, and success metrics prevent misalignment.

Intellectual Property and Code Ownership

Clarify IP ownership before starting work. Default assumptions vary—some contracts give clients full ownership of all code and models, others reserve rights to reuse components across projects, and some create shared ownership of novel techniques developed during engagement.

For most businesses, full ownership of code, models, and documentation is critical. The contract should explicitly transfer all IP rights upon payment. Watch for clauses that allow providers to retain rights to "general methodologies" or "reusable components"—these can create complications if trying to patent innovations or protect competitive advantages.

Address training data rights separately. If using client-provided data, confirm the provider can't retain or reuse that data for other projects. If the provider supplies training data, clarify usage rights and any ongoing licensing requirements.

Communication Cadence and Decision Authority

Establish regular communication rhythms. Daily standups keep development aligned with priorities. Weekly sprint reviews ensure stakeholders see progress and provide course corrections. Monthly strategic reviews evaluate whether the project direction still matches business needs.

Define decision-making authority explicitly. Who approves architectural changes? Who can adjust scope? Who handles trade-offs between accuracy and latency? Ambiguity creates delays and frustration as teams wait for approvals or make assumptions that later need reversal.

Create escalation paths for blockers. AI projects encounter unexpected challenges—data quality issues, performance bottlenecks, integration complications. Clear escalation procedures prevent small issues from becoming major delays.

Success Metrics Beyond Model Accuracy

Technical metrics like model accuracy matter, but business outcomes matter more. A recommendation engine with 95% accuracy that doesn't increase conversion rates has failed regardless of technical sophistication. Define success in business terms.

Specify performance requirements explicitly. Accuracy targets, inference latency limits, throughput requirements, resource consumption constraints—each impacts architectural decisions. Discovering performance requirements after development wastes effort rebuilding systems that can't meet production needs.

Include operational metrics. Model monitoring capabilities, retraining pipeline functionality, debugging tools, and documentation quality all determine long-term success. A model that performs well initially but degrades over time without clear retraining processes creates ongoing problems.

Outsource AI Engineers Without the Turnover Problem

When you outsource AI engineering, continuity matters as much as skill. NeoWork builds dedicated remote teams that integrate into your workflows and stay long enough to understand your models, data pipelines, and product roadmap. With a 91% annualized teammate retention rate and a 3.2% candidate selectivity rate, the approach is selective hiring and long term team stability, not volume staffing.

If you want outsourced AI engineers who stay, learn your system, and contribute beyond short contracts, connect with NeoWork and build a team designed for sustained delivery.

Common Failure Modes and How to Avoid Them

Most AI outsourcing failures follow predictable patterns. Understanding these helps structure engagements to avoid common pitfalls.

The Scope Creep Spiral

AI projects uncover unexpected complexity. Initial requirements seem clear until development reveals data quality issues, edge cases, or performance constraints that force architectural changes. Without clear processes for handling scope changes, projects balloon beyond budgets or create adversarial client-provider relationships.

Prevent this by building scope change mechanisms into contracts. Define core requirements that are truly fixed versus areas where discovery is expected. Create processes for evaluating change requests based on business value versus cost. Treat scope evolution as normal rather than exceptional.

Use phased approaches for uncertain projects. A discovery phase evaluates data quality, technical feasibility, and architectural options before committing to full development. This upfront investment prevents expensive mistakes and creates realistic scope definitions.

Communication Breakdowns

Technical teams and business stakeholders often speak different languages, creating situations where technically impressive systems may not solve actual problems.

Bridge this through explicit translation. Technical leads should frame updates in business terms—not "we improved F1 score from 0.82 to 0.87" but "we reduced false positives by 30%, meaning customer service handles 40 fewer incorrect escalations per day."

Include business stakeholders in sprint reviews. Seeing working functionality regularly keeps everyone aligned and surfaces misunderstandings early when they're cheap to fix.

Knowledge Transfer Failures

External teams build systems, then hand them over. Without proper knowledge transfer, internal teams can't maintain, debug, or enhance what was delivered. The system becomes a black box that requires ongoing external support for even minor changes.

Build knowledge transfer into project plans from the start. Documentation, code comments, architecture diagrams, and debugging guides should be deliverables with the same priority as working code. Schedule training sessions where external teams walk internal engineers through system components.

Consider hybrid approaches where external teams build initial systems but internal engineers work alongside them, gradually taking ownership. This creates smoother transitions than abrupt handoffs.

Data Quality Surprises

Teams discover data quality issues after significant development investment. Labels are inconsistent, datasets have sampling biases, critical features are missing, or data volumes are insufficient for reliable models. These discoveries force expensive rework or project abandonment.

Invest in data assessment before development starts. External teams should analyze data quality, identify gaps, and flag risks upfront. This might delay project starts but prevents costlier delays later.

For projects with uncertain data quality, structure initial phases around data exploration and validation. Confirm data can support planned models before committing to full development.

The Strategic Layer: What to Keep Internal

Successful outsourcing isn't about delegating everything—it's about strategic division of labor. Some elements should always remain internal regardless of external team quality.

Problem definition stays internal. External teams can implement solutions, but internal stakeholders must understand business problems, evaluate whether AI is the right approach, and define success criteria. Outsourcing this creates systems that solve the wrong problems brilliantly.

Data strategy remains internal. Decisions about what data to collect, how to label it, what quality standards to enforce, and how to govern usage all require deep business context that external teams lack. External teams can execute data strategy, but shouldn't define it.

Architectural authority stays internal for strategic systems. External teams can propose architectures and implement them, but final architectural decisions should involve internal technical leadership who understand long-term system evolution and integration requirements.

Product roadmap ownership is non-negotiable internal responsibility. External teams can inform roadmaps with technical insights, but internal stakeholders must drive prioritization based on business strategy, competitive positioning, and market opportunities.

Research on AI outsourcing indicates that even when tasks are automated, maintaining strategic oversight and direction remains important. According to research, AI can generate code in two to three hours that previously took software engineers ten hours to write quality computer code—but determining what code to write and why still requires human judgment.

2026 Trends Reshaping AI Outsourcing

The AI outsourcing landscape continues evolving. Several trends are reshaping how companies access external AI talent and structure partnerships.

Specialized Vertical Expertise

Generic AI development firms are losing ground to specialized providers with deep vertical expertise. Healthcare AI requires understanding HIPAA compliance, clinical workflows, and medical terminology. Financial services AI demands knowledge of regulatory requirements, fraud patterns, and risk management frameworks.

Companies increasingly prioritize providers with proven track records in their industry over general AI expertise. A team that has deployed fraud detection systems for banks brings practical knowledge that generic ML engineers lack, even if the latter have stronger academic credentials.

Hybrid Engagement Models

Pure staff augmentation, dedicated teams, and project-based engagements are blending into hybrid models. Companies might use dedicated teams for ongoing development but bring in specialized contractors for specific challenges like model optimization or compliance implementation.

These hybrid approaches provide flexibility—scaling teams up for intensive development periods, then down for maintenance phases, while maintaining continuity through core team members.

Energy and Infrastructure Considerations

According to analysis on AI infrastructure, IEA forecasts US data center demand to reach around 420–426 TWh by 2030 (more than double from 2024 levels of ~183 TWh), accounting for ~8–9% of total US electricity.

This impacts outsourcing decisions. Companies are evaluating not just development costs but also long-term operational costs including compute infrastructure and energy consumption. Providers that demonstrate expertise in model optimization, efficient architectures, and cost-effective deployment gain advantages.

Emphasis on Production Reliability

The bar for production AI systems is rising. According to MIT Sloan Management Review research on AI success factors, early AI winners align organizational and business strategies to build value while managing risk. Demo-quality models no longer suffice—systems must demonstrate production reliability, monitoring capabilities, and graceful degradation.

Outsourcing partners are differentiated by MLOps maturity. Teams that treat deployment, monitoring, and maintenance as first-class concerns rather than afterthoughts command premium pricing and win strategic engagements.

AI-Augmented Development Workflows

External AI development teams now use AI coding assistants extensively in their own workflows. Some developers report productivity gains from AI coding assistants, though quality and understanding remain considerations.

For clients, this creates both opportunities and risks. Teams using AI assistants effectively can deliver faster and more cost-effectively. But teams over-relying on AI-generated code without proper review create technical debt and maintenance challenges. Evaluating how providers use AI tools and what quality controls they apply becomes increasingly important.

Building the Business Case for AI Outsourcing

Convincing stakeholders to pursue outsourcing requires demonstrating clear value beyond cost savings. Several factors strengthen the business case.

Time-to-market advantages often justify outsourcing even when internal development is possible. External teams with experience in similar projects ship faster by avoiding mistakes internal teams make learning. For competitive markets where being first matters, this speed premium drives value.

Risk mitigation through expertise reduces expensive failures. Internal teams experimenting with unfamiliar technologies make costly mistakes. External specialists bring battle-tested approaches that work, reducing the risk of building systems that can't scale or don't meet requirements.

Flexibility to scale engineering capacity without long-term commitments allows companies to pursue AI opportunities without permanent headcount increases. This matters particularly for initiatives with uncertain long-term resource needs.

Access to specialized skills that are difficult to hire full-time represents perhaps the strongest argument. Most companies can't justify hiring full-time computer vision specialists, NLP experts, or MLOps engineers for periodic projects. Outsourcing provides on-demand access to these skills.

Making the Build-vs-Buy-vs-Outsource Decision

Not every AI needs custom development. Sometimes off-the-shelf solutions work. Sometimes internal development makes sense. Sometimes outsourcing is right. Deciding which path to take requires evaluating several factors.

When AI capabilities are genuinely differentiating and create sustainable competitive advantages, internal development makes sense despite higher costs and longer timelines. When capabilities are commoditized and off-the-shelf solutions exist that meet needs, buying beats building. When capabilities are important but not differentiating, require customization beyond what off-the-shelf offers, and demand expertise not present internally, outsourcing provides the middle path.

Regulatory and Compliance Considerations

AI outsourcing creates compliance obligations that vary by industry and jurisdiction. Addressing these upfront prevents costly problems.

Data protection regulations like GDPR, CCPA, and industry-specific rules like HIPAA restrict where data can be processed and who can access it. Contracts with outsourcing partners must explicitly address data handling, storage locations, access controls, and deletion procedures.

For regulated industries, provider compliance certifications matter. Healthcare applications may require HITRUST certification. Financial services might demand SOC2 Type II audits. Government contracts often require FedRAMP authorization. Verifying certifications before engagement prevents discovering compliance gaps mid-project.

Export controls on AI technologies are tightening. Depending on application domains and deployment locations, certain AI capabilities may face export restrictions. Legal review of outsourcing arrangements helps ensure compliance.

Algorithmic accountability and bias regulations are emerging. Contracts should address who bears responsibility for ensuring models meet fairness requirements, how bias testing occurs, and what happens if deployed models create discriminatory outcomes.

Measuring Outsourcing Success

Defining success metrics upfront creates accountability and enables objective evaluation of outsourcing partnerships.

Technical performance metrics include model accuracy, precision, recall, F1 scores, inference latency, throughput, and resource utilization. These should be specified with concrete targets based on business requirements, not academic benchmarks.

Business outcome metrics connect technical work to value creation. Did the recommendation engine increase conversion rates? Did the churn prediction model reduce customer attrition? Did the demand forecasting system improve inventory efficiency? Tracking these demonstrates ROI.

Project execution metrics evaluate process quality. On-time delivery rates, budget adherence, communication responsiveness, and defect rates all indicate partnership health. Consistent misses suggest problems requiring intervention.

Knowledge transfer effectiveness can be measured through internal team capability growth. Can internal engineers now handle model retraining? Can they debug issues independently? Can they extend functionality without external help? Successful outsourcing builds internal capability, not dependency.

Long-term system health metrics assess sustainability. Model performance stability over time, system uptime, incident frequency, and maintenance burden all indicate whether delivered systems are production-grade or fragile prototypes requiring constant support.

When Not to Outsource AI Engineering

Outsourcing isn't always the answer. Certain situations favor internal development despite higher costs and longer timelines.

When AI creates core competitive differentiation, internal development protects strategic advantages. If recommendation algorithms define the customer experience and competitors can't easily replicate results, keeping development internal protects that moat. Outsourcing risks leaking insights to competitors through shared provider relationships.

When data is highly sensitive and regulations restrict external access, internal development may be the only compliant path. While proper contracts and controls can enable secure outsourcing in many cases, some data sensitivity levels make internal-only development necessary.

When teams are learning AI capabilities for long-term internal growth, outsourcing can impede learning. If the goal is building internal expertise for ongoing AI work, starting with internal development (even if slower) creates learning opportunities that outsourcing doesn't provide.

When requirements are highly uncertain and exploratory, the overhead of managing external teams may exceed benefits. Early-stage exploration often involves rapid pivots, frequent changes, and high communication overhead. Internal teams handle this chaos more easily than managed external relationships.

When projects are very small, outsourcing coordination costs may exceed development costs. Simple data labeling tasks or basic classification models might take longer to specify and coordinate than to just build internally.

Taking the First Step

Getting started with AI outsourcing doesn't require massive commitments. Small pilot projects test partnerships and build confidence before scaling to larger engagements.

Identify a contained, valuable project for the initial engagement. Good candidates have clear success criteria, meaningful business value, a manageable scope (2-4 months), and limited downside if they fail. A customer service chatbot handling common questions, a demand forecasting model for a single product category, or a document classification system for one department all work as pilots.

Run a structured evaluation process for 3-5 providers. Share the same project brief, evaluate proposals against consistent criteria, and conduct technical interviews with actual engineers who would work on the project. Request references from similar past projects and speak with those clients about their experiences.
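Evaluating proposals against consistent criteria can be as simple as a weighted scoring matrix. The following is a minimal sketch under stated assumptions: the criteria names, the weights, the 1-5 scale, and the provider scores are all hypothetical, and each business would set its own.

```python
# Hypothetical criteria and weights (weights sum to 1.0).
WEIGHTS = {
    "technical_depth": 0.40,
    "communication": 0.25,
    "references": 0.20,
    "cost": 0.15,
}


def weighted_score(scores, weights=WEIGHTS):
    """Combine 1-5 criterion scores into a single weighted score."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[c] * scores[c] for c in weights)


# Made-up scores from reviewing the same project brief with three providers.
providers = {
    "Provider A": {"technical_depth": 4, "communication": 3, "references": 5, "cost": 2},
    "Provider B": {"technical_depth": 3, "communication": 5, "references": 4, "cost": 4},
    "Provider C": {"technical_depth": 5, "communication": 2, "references": 3, "cost": 3},
}

ranking = sorted(providers, key=lambda name: weighted_score(providers[name]), reverse=True)
for name in ranking:
    print(f"{name}: {weighted_score(providers[name]):.2f}")
```

Agreeing on the weights before reading any proposal keeps the comparison honest. The ranking is a starting point for discussion, not a substitute for the technical interviews and reference calls.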

Start with a discovery phase before committing to full development. In a 2-4 week engagement, the provider analyzes data quality, validates the technical approach, and creates a detailed implementation plan. This costs relatively little, surfaces potential issues early, and demonstrates how the partnership will function.

Define explicit evaluation criteria for the pilot. Beyond whether the project succeeds technically, assess communication quality, responsiveness, proactive problem identification, and how well the team handles unexpected challenges. These process factors predict success for larger future engagements.

Use pilot results to refine partnership structures. Apply lessons learned about communication cadence, documentation needs, knowledge transfer processes, and contract terms to subsequent engagements. Each project teaches what works in the specific context of the business and provider.

The AI outsourcing landscape continues to mature. As industry standards on AI strategy development indicate, bringing clarity to this rapidly evolving field requires both technical expertise and structured processes. Companies that approach outsourcing strategically, keeping internal ownership of strategy while using external expertise for execution, access AI capabilities faster and more cost-effectively than those that try to build everything internally or delegate everything to external teams without retaining strategic control.

The key isn't whether to outsource AI engineering. It's understanding which elements to outsource, how to structure partnerships for success, and how to maintain strategic direction while leveraging external execution capability. Get that balance right, and outsourcing becomes a competitive advantage rather than a risky cost-cutting measure.
