Semantic segmentation outsourcing involves hiring specialized vendors to label image and video data at the pixel level for computer vision projects. This guide covers when to outsource vs. build in-house, how to choose the right annotation partner, quality management strategies, and practical steps to launch and scale your segmentation projects cost-effectively.

Training computer vision models requires massive amounts of labeled data. When each pixel in thousands of images needs classification, the annotation workload becomes overwhelming for internal teams.

Semantic segmentation outsourcing solves this problem by leveraging specialized annotation providers who handle the labor-intensive pixel-level labeling at scale. But choosing the wrong partner or managing quality poorly can derail entire machine learning initiatives.

This guide walks through everything needed to outsource semantic segmentation successfully—from understanding what makes segmentation unique to selecting vendors, managing quality, and optimizing costs.

What Is Semantic Segmentation Outsourcing

Semantic segmentation assigns every pixel in an image to an object class. If an image shows a classroom containing a teacher, students, a blackboard, chairs, tables, walls, a ceiling, a floor, and windows, each pixel in the image is assigned to one of these classes.

This differs from other annotation tasks. Bounding boxes only draw rectangles around objects. Instance segmentation separates individual objects, so two chairs receive two distinct labels. Semantic segmentation treats all objects of the same class as one unified category.
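To make the distinction concrete, here is a minimal Python sketch on a toy label grid. The class IDs (0 for background, 1 for chair) and the grid itself are invented for illustration.

```python
# Toy masks; the class IDs are illustrative assumptions (0 = background, 1 = chair).
# Semantic mask: both chairs share the same class ID.
semantic_mask = [
    [0, 1, 1, 0, 1, 1],
    [0, 1, 1, 0, 1, 1],
    [0, 0, 0, 0, 0, 0],
]

# Instance mask for the same image: each chair gets its own ID.
instance_mask = [
    [0, 1, 1, 0, 2, 2],
    [0, 1, 1, 0, 2, 2],
    [0, 0, 0, 0, 0, 0],
]


def pixel_counts(mask):
    """Count how many pixels carry each label."""
    counts = {}
    for row in mask:
        for label in row:
            counts[label] = counts.get(label, 0) + 1
    return counts


semantic_counts = pixel_counts(semantic_mask)  # one "chair" entry
instance_counts = pixel_counts(instance_mask)  # one entry per chair
```

The semantic mask reports a single chair region, while the instance mask splits the same pixels into two objects.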

Outsourcing this work means contracting external annotation companies, crowdsourcing platforms, or managed service providers to perform the pixel-level labeling. Teams provide raw images, annotation guidelines, and quality requirements. The vendor delivers labeled datasets ready for model training.

Why Outsourcing Makes Sense for Segmentation Projects

Semantic segmentation annotation is uniquely time-consuming. Annotators must trace precise boundaries around irregular shapes at pixel resolution. A single complex image can take 20-30 minutes to annotate properly.

A preprint on arXiv reports that over the past two years, approximately 20% of exhibitors at major computer vision conferences were annotation providers, which highlights how critical specialized vendors have become for real-world applications.

The economics favor outsourcing for most teams. Building annotation capacity in-house requires recruiting, training, managing annotators, and maintaining QA systems. For project-based work or variable annotation volumes, paying for external capacity as needed proves more efficient than carrying fixed overhead.

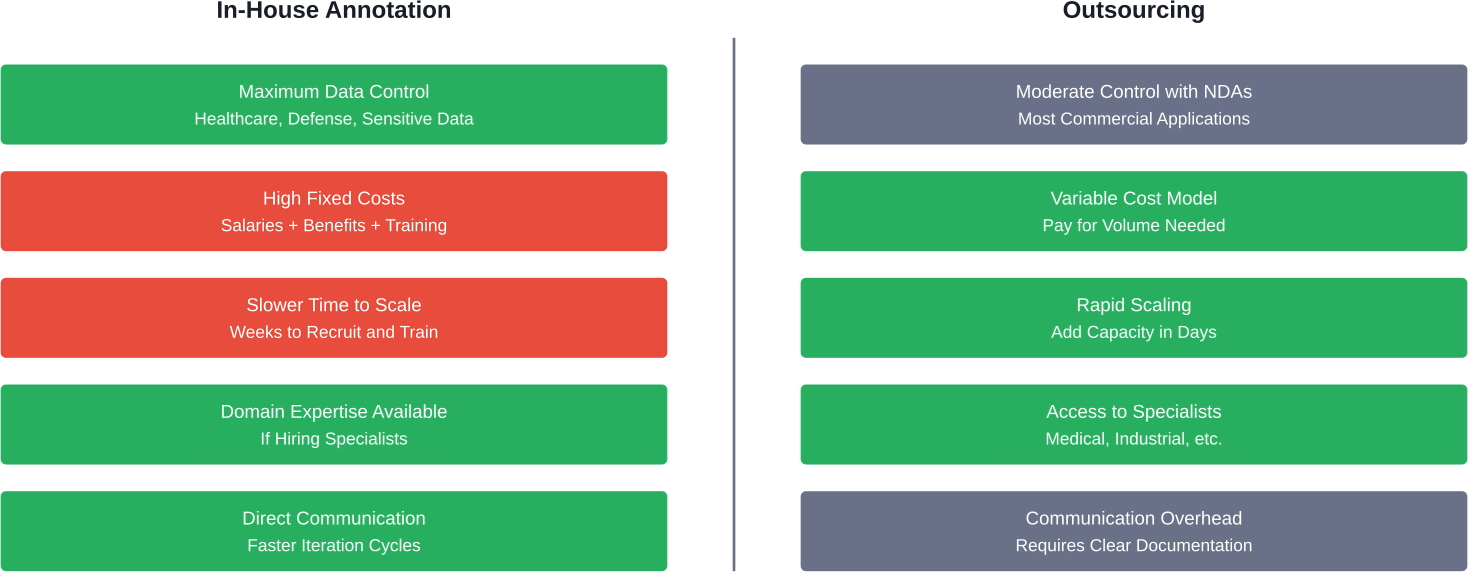

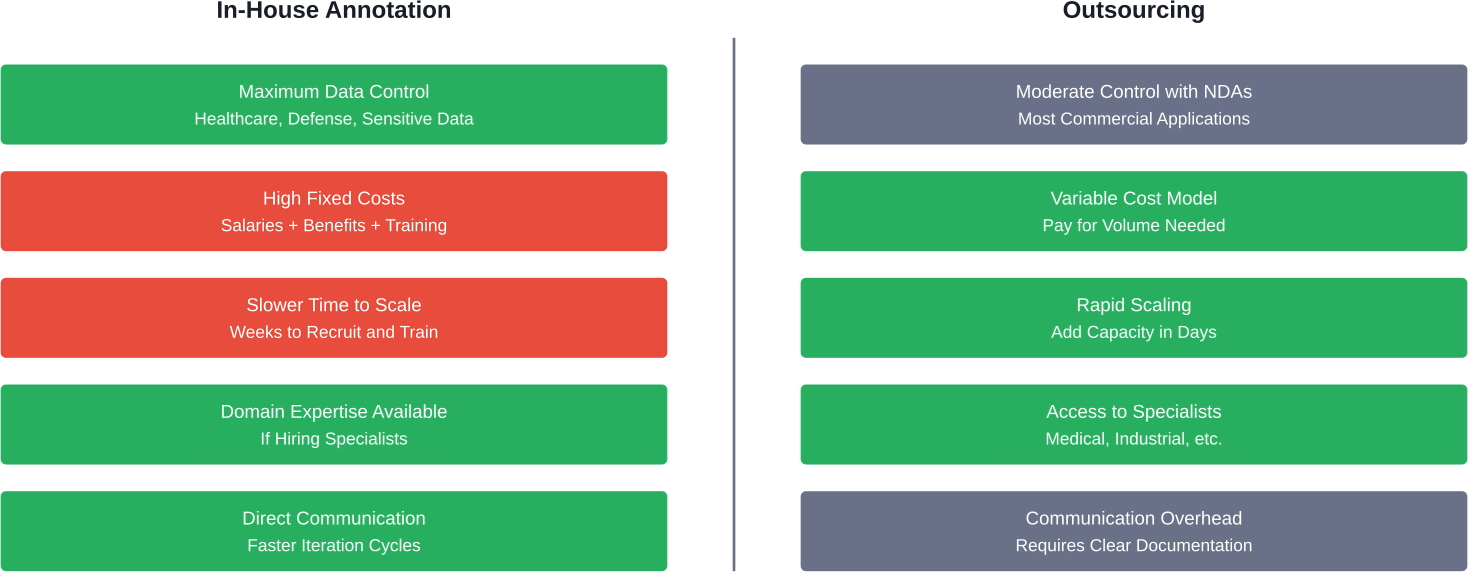

Outsourcing vs. In-House Annotation: Making the Decision

Not every situation calls for outsourcing. The decision depends on project requirements, budget, timeline, and data sensitivity.

When In-House Annotation Makes Sense

Build internal annotation capacity when data security is paramount. Healthcare organizations handling protected health information, defense contractors working with classified imagery, or companies with proprietary trade secrets often can't risk external data sharing.

In-house teams also excel when annotation requirements change frequently. Direct communication with annotators speeds up iteration cycles when refining object categories or boundary definitions.

Long-term projects with steady annotation volumes may justify the investment. Once trained, internal annotators develop deep domain knowledge that improves quality over time.

The Case for Outsourcing

Outsourcing wins for projects with variable or unpredictable annotation needs. Paying for capacity as needed avoids carrying idle resources during slow periods.

Speed to market favors external vendors. Annotation companies maintain trained workforces ready to scale. Spinning up 50 annotators takes days instead of months.

Cost efficiency improves dramatically at scale. Professional annotation services leverage specialized tools, quality assurance systems, and process optimization that would be expensive to replicate internally.

Studies on the global annotation workforce indicate that while demographics vary by region, entry-level annotators in major hubs typically have secondary education or vocational training, with female participation rates varying between 35-45% depending on the country.

Types of Semantic Segmentation Outsourcing Providers

The annotation market includes several distinct provider types, each with different strengths.

Dedicated Annotation Companies

These specialized firms focus exclusively on data labeling. They maintain trained annotation workforces, proprietary QA systems, and often develop custom tooling for complex segmentation tasks.

Quality tends to be high because annotation companies employ internal QA mechanisms and structured training programs. Account managers provide single points of contact for project management.

The tradeoff is cost. Professional annotation services charge premium rates but deliver consistent quality at scale.

Crowdsourcing Platforms

Crowdsourcing distributes annotation tasks across large networks of distributed workers. Platforms handle task distribution, payment, and basic quality controls.

A preprint on arXiv notes that crowdsourcing platforms usually do not employ internal QA mechanisms comparable to those of annotation companies. Quality depends heavily on task design and redundancy (having multiple workers label the same data).

Crowdsourcing works best for simpler segmentation where objects have clear boundaries. Complex medical imaging or nuanced industrial inspection typically requires more specialized expertise.

Managed Service Providers

Full-service providers handle everything from data strategy through model deployment. They combine annotation services with machine learning expertise, helping design labeling schemas and validate model performance.

This end-to-end approach costs more but reduces internal resource requirements. Teams lacking computer vision expertise benefit most from managed services.

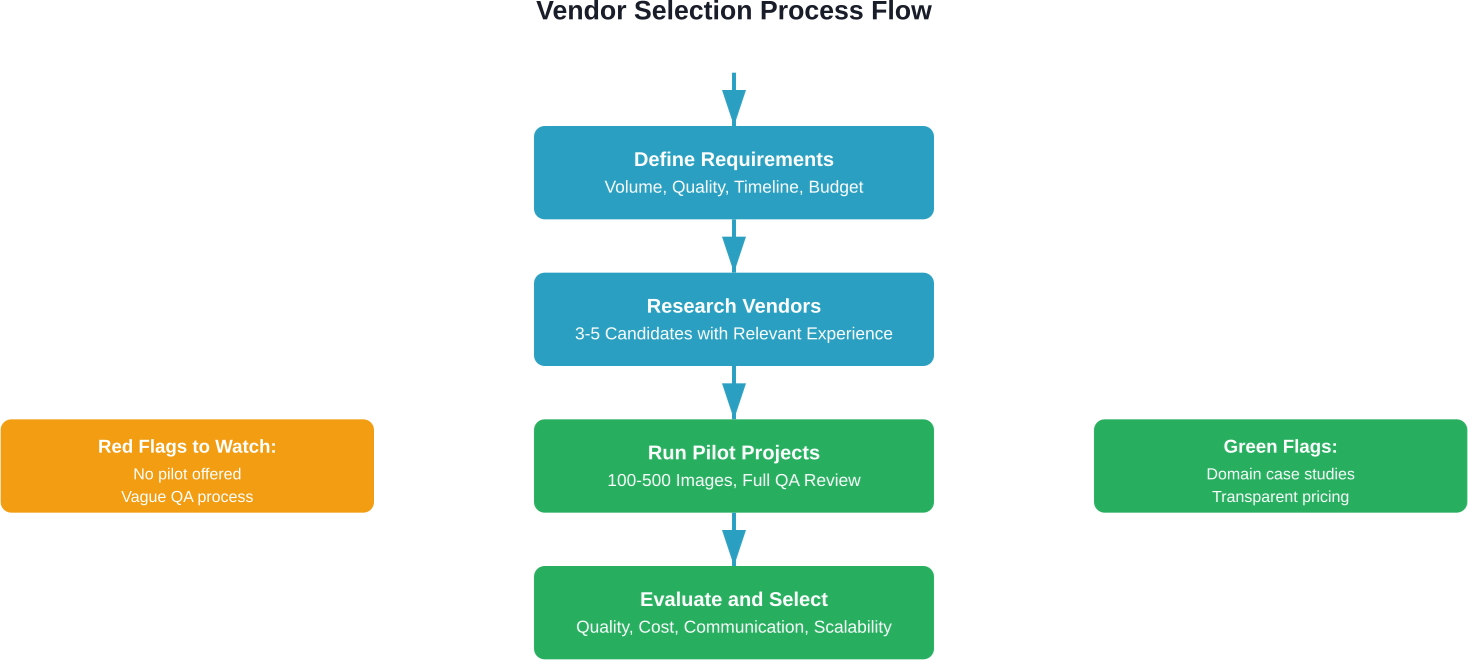

Choosing the Right Semantic Segmentation Vendor

Vendor selection determines project success more than any other factor. The right partner delivers quality data on time and budget. The wrong one wastes months and resources.

Technical Capabilities to Evaluate

Start with tooling. Quality segmentation requires specialized annotation software supporting polygon drawing, magic wand selection, and interpolation across video frames. Ask vendors what platforms they use—CVAT, Labelbox, V7, or proprietary systems.

Verify the vendor handles your data types. Image segmentation differs from video annotation. Video data annotation involves tracking objects across frames, handling occlusion, and maintaining consistency over time.

Check whether they support your file formats and can integrate with your ML pipeline. Exporting annotations in COCO format, PASCAL VOC, or custom JSON schemas should be straightforward.
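As a rough idea of what a COCO-style export looks like, the sketch below builds one polygon annotation in Python. The top-level keys (`images`, `categories`, `annotations`) and the annotation fields follow the COCO annotation layout; the file name, IDs, and polygon are hypothetical, and a real vendor export may carry extra fields.

```python
import json


def polygon_area(points):
    """Shoelace formula for a simple polygon given as [(x, y), ...]."""
    total = 0.0
    for i, (x1, y1) in enumerate(points):
        x2, y2 = points[(i + 1) % len(points)]
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def to_coco_annotation(ann_id, image_id, category_id, points):
    """Build one COCO-style annotation dict from a polygon."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    flat = [coord for point in points for coord in point]  # [x1, y1, x2, y2, ...]
    return {
        "id": ann_id,
        "image_id": image_id,
        "category_id": category_id,
        "segmentation": [flat],
        "bbox": [min(xs), min(ys), max(xs) - min(xs), max(ys) - min(ys)],  # x, y, w, h
        "area": polygon_area(points),
        "iscrowd": 0,
    }


# Hypothetical image and category, for illustration only.
dataset = {
    "images": [{"id": 1, "file_name": "classroom_001.jpg", "width": 640, "height": 480}],
    "categories": [{"id": 1, "name": "chair"}],
    "annotations": [to_coco_annotation(1, 1, 1, [(10, 10), (60, 10), (60, 40), (10, 40)])],
}
coco_json = json.dumps(dataset, indent=2)
```

Asking a vendor for a small sample export early and loading it into your own pipeline is a quick way to confirm the format really matches.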

Quality Assurance Systems

Quality management separates professional annotation companies from amateur operations. Strong vendors implement multi-layer QA:

- First-pass annotation by trained labelers

- Peer review to catch obvious errors

- Expert review for edge cases and complex scenarios

- Automated validation that checks for technical errors such as overlapping polygons or missed regions

Ask vendors about their QA metrics. Agreement scores, error rates per image, and revision cycles indicate process maturity.

IEEE research on quality management of datasets for medical artificial intelligence emphasizes the importance of structured QA practices, particularly for high-stakes applications.

Domain Expertise and Training

Generic annotation skills don't transfer to specialized domains. Medical image segmentation requires understanding anatomy. Autonomous vehicle datasets need annotators who recognize edge cases in traffic scenarios.

Evaluate how vendors train annotators for your domain. Look for structured onboarding programs, certification processes, and ongoing feedback mechanisms.

A preprint on arXiv reports that, depending on project size, annotation centers may employ specialists with domain knowledge rather than only general labelers.

Security and Compliance

Data security matters even for non-sensitive projects. Vendors should offer secure data transfer protocols, encrypted storage, and access controls limiting who sees your data.

For regulated industries, verify the relevant compliance evidence: HIPAA compliance for healthcare data, SOC 2 reports for general security controls, and ISO 27001 certification for information security management.

Understand where annotation happens geographically. Some companies require that data stay in specific jurisdictions for regulatory reasons.

Pricing Models and Cost Structure

Annotation vendors use several pricing approaches. Per-image pricing works for consistent complexity—each image costs a fixed amount regardless of annotation time. Per-pixel pricing scales with segmentation complexity. Hourly rates give vendors flexibility but make budgeting harder.
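The budgeting difference between fixed and hourly pricing is easy to see with a little arithmetic. The Python sketch below uses the 20-30 minutes per complex image mentioned earlier; the prices and hourly rate are hypothetical placeholders, not market rates.

```python
def per_image_cost(num_images, price_per_image):
    """Fixed price per image, independent of annotation time."""
    return num_images * price_per_image


def hourly_cost(num_images, minutes_per_image, hourly_rate):
    """Cost when the vendor bills for annotation time."""
    hours = num_images * minutes_per_image / 60
    return hours * hourly_rate


# Hypothetical numbers for illustration only.
images = 1000
fixed = per_image_cost(images, price_per_image=4.00)
fast = hourly_cost(images, minutes_per_image=20, hourly_rate=10.00)
slow = hourly_cost(images, minutes_per_image=30, hourly_rate=10.00)
```

With these assumed numbers the hourly bill is cheaper than the fixed quote if annotators average 20 minutes per image and more expensive if they average 30, which is why hourly pricing is harder to budget.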

Request detailed quotes including all costs. Some vendors charge separately for project management, QA reviews, or format conversions.

Volume discounts significantly reduce per-image costs. Negotiate pricing tiers based on total project size.

Get the Right Team for Your Semantic Segmentation Project

Semantic segmentation requires engineers, data annotators, and validation specialists who understand both computer vision and your domain. Many teams slow down because of turnover or poor hiring fit. NeoWork helps you build a dedicated, long-term remote team for AI and data work. Their 91% annualized retention rate means teammates stay through long development cycles, and their 3.2% candidate selectivity rate means you spend time only with vetted professionals.

If you need reliable resources that can keep your semantic segmentation work moving without constant rehiring, contact NeoWork to start building a team that matches your technical needs and timeline.

Managing Quality in Outsourced Segmentation Projects

Quality problems in training data directly impact model performance. Garbage in, garbage out applies brutally to computer vision.

Setting Clear Annotation Guidelines

Ambiguity kills quality. Annotators need crystal-clear instructions about what to label and how to handle edge cases.

Document guidelines thoroughly. Include visual examples showing correct and incorrect annotations. Address common edge cases—how to handle partial occlusion, reflections, shadows, or ambiguous boundaries.

Specify technical requirements. Should annotators trace exact edges or smooth curves? How should they handle objects touching frame boundaries? What level of detail is expected for small objects?

Implementing Gold Standard Datasets

Gold standard datasets provide objective quality benchmarks. Expert annotators label a subset of images with maximum care. These become ground truth for evaluating vendor output.

Mix gold standard images into vendor batches without identifying them. Measure vendor accuracy against known correct labels. This catches quality drift before it affects large volumes.
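A minimal Python sketch of this check follows. It assumes masks are stored as nested lists of class IDs keyed by image ID; the function names and the 0.95 threshold are illustrative choices, not a standard.

```python
def pixel_accuracy(vendor_mask, gold_mask):
    """Fraction of pixels where the vendor label matches the gold label."""
    total = 0
    correct = 0
    for vendor_row, gold_row in zip(vendor_mask, gold_mask):
        for v, g in zip(vendor_row, gold_row):
            total += 1
            correct += (v == g)
    return correct / total


def score_batch(vendor_labels, gold_labels, threshold=0.95):
    """Score only the hidden gold images inside a vendor batch.

    Returns the mean accuracy and whether it meets the (assumed) threshold.
    """
    scores = {
        image_id: pixel_accuracy(vendor_labels[image_id], gold)
        for image_id, gold in gold_labels.items()
    }
    mean_score = sum(scores.values()) / len(scores)
    return mean_score, mean_score >= threshold


# Hypothetical batch: img_07 is a hidden gold image, img_08 is regular work.
gold = {"img_07": [[0, 1], [1, 1]]}
vendor = {"img_07": [[0, 1], [1, 0]], "img_08": [[0, 0], [1, 1]]}
mean_score, passed = score_batch(vendor, gold)
```

Plain pixel accuracy can hide errors on small classes, so a per-class metric such as IoU may be a better fit for imbalanced data.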

Refresh gold standards periodically. Edge cases discovered during annotation should be added to maintain relevance.

Multi-Layer Quality Review

Single-pass annotation produces error rates too high for most applications. Layered review improves quality:

- Automated validation catches technical errors. Scripts check for unlabeled regions, overlapping polygons, invalid class assignments, or inconsistent object boundaries.

- Peer review by other annotators identifies obvious mistakes faster than expert review. Quality control research indicates that peer validation significantly improves annotation accuracy.

- Expert review focuses on complex edge cases and domain-specific accuracy. Medical imaging or specialized industrial applications require subject matter experts validating annotations.
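The automated layer can start as a short script. The Python sketch below checks a raster mask for unlabeled pixels, unknown class IDs, and size mismatches. The sentinel value 255 for "unlabeled" is an assumption that depends on the export format, and overlap checks for polygon exports are not shown.

```python
UNLABELED = 255  # assumed sentinel for "no label"; depends on the export format


def validate_mask(mask, valid_classes, expected_width, expected_height):
    """Return a list of human-readable problems found in one mask."""
    errors = []
    if len(mask) != expected_height or any(len(row) != expected_width for row in mask):
        errors.append("mask size does not match image size")
    unlabeled = sum(row.count(UNLABELED) for row in mask)
    if unlabeled:
        errors.append(f"{unlabeled} unlabeled pixel(s)")
    found = {label for row in mask for label in row}
    invalid = sorted(found - set(valid_classes) - {UNLABELED})
    if invalid:
        errors.append(f"invalid class IDs: {invalid}")
    return errors


# Hypothetical 3x2 mask with one unlabeled pixel and one unknown class (7).
problems = validate_mask(
    [[0, 1, 255], [0, 7, 1]],
    valid_classes={0, 1, 2},
    expected_width=3,
    expected_height=2,
)
```

Running a check like this on every delivery catches mechanical errors before any human reviewer spends time on the batch.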

Measuring and Tracking Quality Metrics

Track key quality indicators throughout projects. Inter-annotator agreement, measured for example as pixel-level agreement or IoU between two annotators' masks of the same image, should exceed 90% for simple segmentation, though complex domains may see lower scores.

Monitor revision rates. How often do QA reviewers reject or modify annotations? Rising revision rates indicate training gaps or guideline ambiguities.

Measure actual model performance on validation sets. Ultimately, annotation quality shows up in model metrics like IoU (Intersection over Union) or pixel accuracy.
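For reference, IoU for a class is the number of pixels labeled with that class in both masks divided by the number labeled with it in either mask. A minimal Python sketch, assuming masks are nested lists of class IDs:

```python
def class_iou(pred, truth, class_id):
    """Intersection over Union for one class; None if the class is absent from both masks."""
    intersection = 0
    union = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            in_pred = p == class_id
            in_truth = t == class_id
            intersection += in_pred and in_truth
            union += in_pred or in_truth
    return intersection / union if union else None


def mean_iou(pred, truth, class_ids):
    """Average IoU over the classes that appear in at least one mask."""
    scores = [class_iou(pred, truth, c) for c in class_ids]
    scores = [s for s in scores if s is not None]
    return sum(scores) / len(scores)


# Toy masks for illustration.
truth = [[0, 0, 1, 1], [0, 0, 1, 1]]
pred = [[0, 1, 1, 1], [0, 0, 1, 1]]
iou_class_1 = class_iou(pred, truth, 1)  # 4 shared pixels / 5 in either mask
miou = mean_iou(pred, truth, [0, 1])
```

The same function can compare two annotators' masks of one image, which gives a simple inter-annotator agreement score.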

Practical Steps to Launch Segmentation Outsourcing

Knowing what to look for matters less than executing a structured launch process.

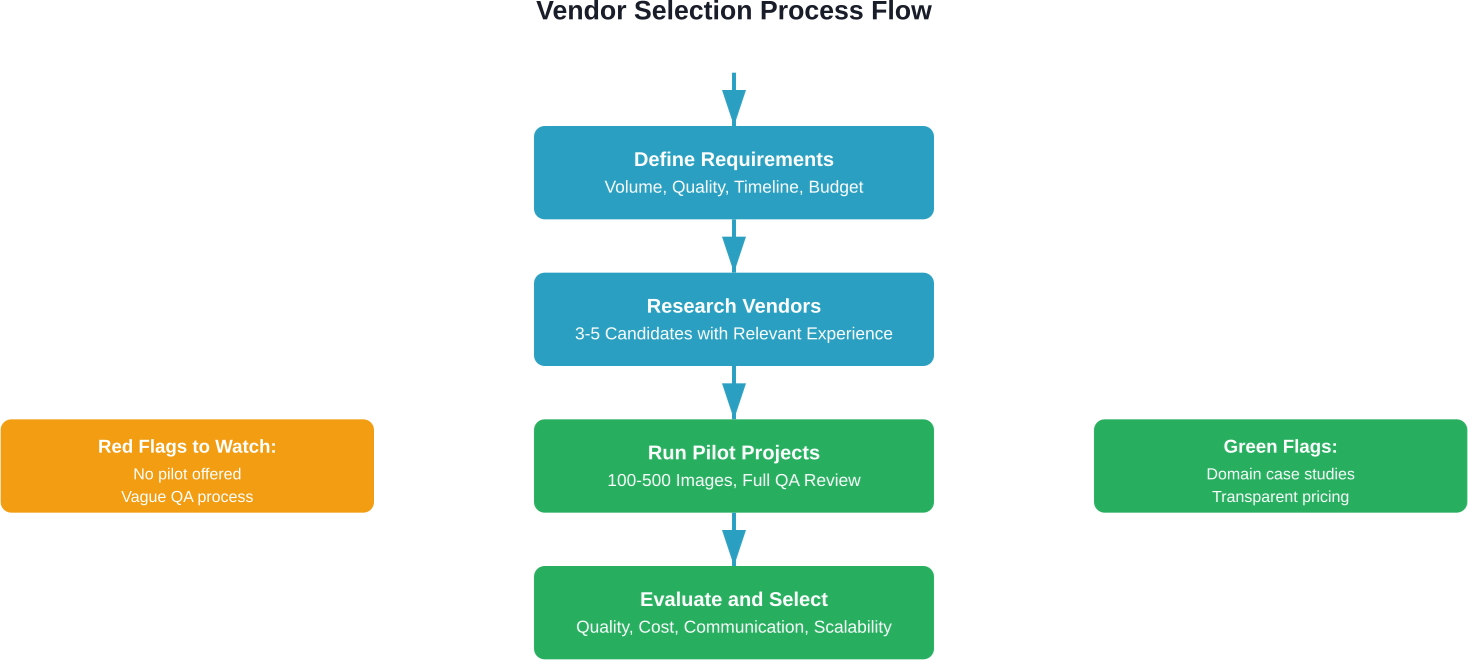

Phase 1: Preparation

Start by curating a representative sample dataset. Select 200-500 images covering the full range of scenarios, lighting conditions, object types, and edge cases your model will encounter.

Write comprehensive annotation guidelines. Include object definitions, boundary rules, edge case handling, and quality criteria. Add visual examples liberally.

Define success metrics. What accuracy level is acceptable? What timeline and budget constraints exist?

Phase 2: Vendor Evaluation

Reach out to 3-5 candidate vendors. Share your sample dataset and guidelines. Request proposals including pricing, timeline, and QA approach.

Run pilot projects with the top 2-3 vendors. Assign the same 100-200 images to each. This enables direct quality comparison.

Evaluate pilot results rigorously. Check annotation accuracy against your gold standards. Assess how well vendors handled edge cases. Review communication responsiveness.

Phase 3: Scaling Production

Start production with modest volumes. Even the best pilot doesn't reveal all potential issues. Begin with 500-1000 images while monitoring quality closely.

Establish regular communication cadences. Weekly calls with vendor project managers catch problems early. Create shared documentation for tracking edge cases and guideline updates.

Scale gradually as quality stabilizes. Double annotation volumes every 2-3 weeks if quality metrics remain solid.
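To see how long such a ramp takes, the doubling rule can be turned into a schedule. This Python sketch assumes quality stays acceptable at every step; the starting volume, target volume, and step length are example inputs.

```python
def ramp_schedule(start_volume, target_volume, weeks_per_step=2):
    """List (week, batch_volume) pairs, doubling volume until the target is reached."""
    schedule = []
    week, volume = 0, start_volume
    while volume < target_volume:
        schedule.append((week, volume))
        week += weeks_per_step
        volume *= 2
    schedule.append((week, target_volume))  # cap the final step at the target
    return schedule


# Example: start at 500 images per batch, aim for 10,000, double every 2 weeks.
plan = ramp_schedule(500, 10_000, weeks_per_step=2)
```

In practice a step is repeated rather than doubled whenever quality metrics slip, so the real ramp is usually longer than the computed one.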

Phase 4: Continuous Improvement

Analyze systematic errors. If multiple annotators make the same mistakes, guidelines need clarification or training needs enhancement.

Update documentation based on real-world challenges. Edge cases discovered during annotation should be added to guidelines with resolution instructions.

Provide regular feedback to vendors. Share quality metrics, highlight excellent work, and discuss areas needing improvement.

Cost Optimization Strategies

Annotation costs can balloon quickly at scale. Smart strategies reduce spending without sacrificing quality.

Active Learning Reduces Annotation Volume

Active learning uses a partially trained model to identify which images most need annotation. The model pre-labels easy examples automatically, and humans focus on the edge cases where the model is uncertain.

This approach can potentially reduce annotation costs by 40-60%. The tradeoff is added technical complexity and longer project timelines.
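One common way to rank images is entropy-based uncertainty sampling, sketched below in Python. It assumes the model outputs a probability vector per pixel; the data layout and function names are hypothetical, and other uncertainty measures are equally valid.

```python
import math


def pixel_entropy(probs):
    """Shannon entropy of one pixel's class probabilities."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def image_uncertainty(pixel_probs):
    """Mean per-pixel entropy; higher means the model is less sure."""
    return sum(pixel_entropy(p) for p in pixel_probs) / len(pixel_probs)


def select_for_annotation(predictions, budget):
    """Pick the `budget` most uncertain images for human annotation."""
    ranked = sorted(
        predictions,
        key=lambda image_id: image_uncertainty(predictions[image_id]),
        reverse=True,
    )
    return ranked[:budget]


# Hypothetical two-class predictions with two pixels per image.
predictions = {
    "a": [[0.99, 0.01], [0.98, 0.02]],  # confident
    "b": [[0.50, 0.50], [0.60, 0.40]],  # very uncertain
    "c": [[0.90, 0.10], [0.70, 0.30]],  # somewhat uncertain
}
to_label = select_for_annotation(predictions, budget=2)
```

Images that are not selected can keep their model-generated labels for spot-check review instead of full annotation.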

Pre-Annotation Improves Efficiency

Use existing models or simple algorithms to create rough annotations. Annotators correct and refine rather than starting from scratch.

Pre-annotation works best when rough approximations are decent—existing models in the same domain or simple algorithms like color-based segmentation for high-contrast objects.
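As an example of the simple-algorithm end of that spectrum, the Python sketch below produces a rough foreground mask by brightness thresholding. It assumes the image is a nested list of (r, g, b) tuples with a bright object on a dark background; the threshold value is arbitrary.

```python
def threshold_preannotate(image, threshold=128, foreground=1, background=0):
    """Label each pixel as foreground when its mean RGB brightness meets the threshold."""
    mask = []
    for row in image:
        mask.append([
            foreground if (r + g + b) / 3 >= threshold else background
            for r, g, b in row
        ])
    return mask


# Toy 2x2 image: bright pixels on the left, dark pixels on the right.
image = [
    [(250, 250, 250), (10, 10, 10)],
    [(200, 180, 220), (30, 40, 20)],
]
rough_mask = threshold_preannotate(image)
```

Annotators would then correct the rough mask, which only saves time if the threshold output is mostly right.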

Tiered Quality for Different Data Subsets

Not all training data requires perfect annotation. Consider using different quality tiers:

- High-quality annotation for validation and test sets, where accuracy is critical

- Medium-quality annotation for the bulk of training data, where some noise is acceptable

- Lower-quality crowdsourced annotation for supplementary data that increases dataset diversity

This approach balances cost with model performance requirements.

Volume Commitments Unlock Discounts

Vendors offer significant discounts for volume commitments. Committing to 50,000 images might reduce per-image costs by 30-40% compared to small batches.
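A tiered price list makes the effect of a commitment easy to compute. The tiers in this Python sketch are hypothetical and were chosen so that the top tier sits inside the 30-40% range mentioned above.

```python
def price_per_image(num_images, tiers):
    """Return the price of the highest tier whose minimum volume is met.

    tiers is a list of (minimum_volume, price_per_image) pairs.
    """
    eligible = [(minimum, price) for minimum, price in tiers if num_images >= minimum]
    return max(eligible)[1]


# Hypothetical tiers for illustration only.
tiers = [(0, 5.00), (10_000, 4.00), (50_000, 3.25)]

small_batch_rate = price_per_image(2_000, tiers)
committed_rate = price_per_image(50_000, tiers)
committed_total = 50_000 * committed_rate
discount = 1 - committed_rate / small_batch_rate
```

With these assumed tiers, committing to 50,000 images cuts the per-image price by 35% compared with small batches.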

The risk is overcommitting before validating model performance. Start with pilot volumes, then negotiate long-term pricing once confident in the approach.

Common Pitfalls and How to Avoid Them

Even experienced teams stumble when outsourcing segmentation annotation. These problems show up repeatedly.

Insufficient Guidelines Lead to Inconsistency

Ambiguous instructions produce inconsistent annotations. Different annotators interpret edge cases differently, introducing noise into training data.

The fix is ruthless specificity. Document every edge case. When annotators ask questions, update guidelines immediately with the answer.

Skipping Pilot Projects

Jumping straight to full production without testing vendors is expensive gambling. Quality problems discovered after annotating 10,000 images mean rework and delays.

Always run pilots. The small upfront investment prevents vastly larger downstream costs.

Weak Communication Channels

Annotation projects involve constant micro-decisions about edge cases and guideline interpretations. Slow communication creates bottlenecks and quality drift.

Establish fast communication channels. Shared Slack channels, regular video calls, and responsive project managers keep things moving.

Neglecting Validation Set Quality

Teams sometimes outsource training data annotation but handle test sets internally to control quality. This makes sense—but test sets still need the same rigor.

Apply equal or higher quality standards to validation and test data. Model evaluation depends on these golden datasets being correct.

Video Segmentation: Special Considerations

Video annotation multiplies complexity compared to static images. Objects move, get occluded, change appearance, and interact across frames.

Video data annotation requires tracking objects across frames while maintaining consistent boundaries. When a car passes behind a tree, annotators must handle the occlusion correctly and re-identify the same vehicle when it emerges.

Interpolation features help—annotators label keyframes and software interpolates intermediate frames. But quality checking interpolated frames remains essential. Motion blur, rapid direction changes, or occlusion often break interpolation algorithms.
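The simplest form of keyframe interpolation moves each polygon vertex in a straight line between two labeled frames. The Python sketch below shows that idea under the assumption that both keyframe polygons have the same number of vertices in the same order; annotation tools may use more sophisticated tracking.

```python
def interpolate_polygon(start_points, end_points, start_frame, end_frame, frame):
    """Linearly interpolate polygon vertices between two keyframes."""
    if len(start_points) != len(end_points):
        raise ValueError("keyframe polygons must have the same number of vertices")
    t = (frame - start_frame) / (end_frame - start_frame)  # 0.0 at start, 1.0 at end
    return [
        (x0 + t * (x1 - x0), y0 + t * (y1 - y0))
        for (x0, y0), (x1, y1) in zip(start_points, end_points)
    ]


# Hypothetical keyframes: a triangle shifts 20 pixels to the right over 10 frames.
key_0 = [(0, 0), (10, 0), (10, 10)]
key_10 = [(20, 0), (30, 0), (30, 10)]
frame_5 = interpolate_polygon(key_0, key_10, start_frame=0, end_frame=10, frame=5)
```

Straight-line motion is exactly the assumption that motion blur, rapid direction changes, and occlusion violate, which is why interpolated frames still need review.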

Video annotation often costs 1.5-2.5x more per frame than static images, even when using temporal interpolation, and the total effort per second of video is significantly higher.

Industry-Specific Applications

Semantic segmentation use cases span industries, each with unique requirements.

Autonomous Vehicles

Self-driving systems need pixel-perfect segmentation of roads, vehicles, pedestrians, traffic signs, and obstacles. Edge cases like unusual weather conditions, construction zones, or rare object types require extensive annotation coverage.

Safety criticality demands extremely high accuracy. Small annotation errors in pedestrian detection could have life-or-death consequences.

Medical Imaging

Radiological image segmentation identifies tumors, organs, lesions, or anatomical structures. Medical annotation requires domain expertise—general annotators can't distinguish normal from pathological tissue.

Healthcare applications face strict regulatory requirements. For US patient data, HIPAA compliance, data encryption, and audit trails are mandatory.

Agricultural Technology

Precision agriculture uses segmentation to identify crop types, detect diseases, assess plant health, and optimize resource application. Drone imagery and satellite data require handling large-scale datasets.

Retail and E-commerce

Product segmentation enables virtual try-on, automated background removal, and visual search. Fashion applications need accurate clothing item segmentation from diverse images.

Looking Forward: The Future of Segmentation Outsourcing

The annotation industry continues evolving rapidly. Several trends are reshaping how teams approach segmentation outsourcing.

AI-assisted annotation tools improve efficiency. Models pre-annotate images with increasing accuracy, reducing human annotation time. The human role shifts toward quality review and edge case handling rather than pixel-by-pixel labeling.

Specialized domain vendors emerge for verticals like medical imaging, agricultural technology, and industrial inspection. Deep domain expertise delivers higher quality than generalist annotators.

Pricing models shift toward outcome-based contracts where vendors are paid for model performance rather than annotation volume. This aligns incentives around quality and efficiency rather than just throughput.

But the fundamental challenge remains—training computer vision models requires massive amounts of accurately labeled data. Outsourcing will continue as the pragmatic solution for most teams lacking internal annotation infrastructure.

Conclusion: Making Outsourcing Work

Semantic segmentation outsourcing succeeds when teams approach it strategically rather than transactionally. The lowest-cost vendor rarely delivers the best value. Quality, communication, and scalability matter more than shaving pennies per image.

Start with clear requirements. Understand exactly what quality level, timeline, and budget constraints define success. Invest time in comprehensive annotation guidelines—ambiguity multiplies costs through rework.

Run pilot projects before committing to large volumes. Testing vendors with real data reveals far more than proposals and sales conversations. The investment in pilots prevents expensive mistakes at scale.

Treat vendors as partners rather than commodity suppliers. Regular communication, feedback, and collaborative problem-solving produce better outcomes than purely transactional relationships.

The computer vision models powering autonomous vehicles, medical diagnostics, and industrial automation depend entirely on training data quality. Getting annotation right is getting the foundation of AI right.

Ready to outsource your semantic segmentation project? Start by curating a representative sample dataset and drafting initial annotation guidelines. Then reach out to 3-5 potential vendors with relevant domain experience. The time invested in proper vendor selection pays dividends throughout your project lifecycle.

Semantic Segmentation Outsourcing Guide 2026

Semantic segmentation outsourcing involves hiring specialized vendors to label image and video data at the pixel level for computer vision projects. This guide covers when to outsource vs. build in-house, how to choose the right annotation partner, quality management strategies, and practical steps to launch and scale your segmentation projects cost-effectively.

Training computer vision models requires massive amounts of labeled data. When each pixel in thousands of images needs classification, the annotation workload becomes overwhelming for internal teams.

Semantic segmentation outsourcing solves this problem by leveraging specialized annotation providers who handle the labor-intensive pixel-level labeling at scale. But choosing the wrong partner or managing quality poorly can derail entire machine learning initiatives.

This guide walks through everything needed to outsource semantic segmentation successfully—from understanding what makes segmentation unique to selecting vendors, managing quality, and optimizing costs.

What Is Semantic Segmentation Outsourcing

Semantic segmentation is the identification of each pixel present in an image by object type. If an image shows a classroom containing a teacher, students, blackboard, chairs, tables, walls, ceiling, floor and windows, each pixel in the image gets allocated to one of these objects.

This differs from simpler annotation tasks. Bounding boxes just draw rectangles around objects. Instance segmentation identifies individual objects separately. Semantic segmentation treats all objects of the same class as one unified category.

Outsourcing this work means contracting external annotation companies, crowdsourcing platforms, or managed service providers to perform the pixel-level labeling. Teams provide raw images, annotation guidelines, and quality requirements. The vendor delivers labeled datasets ready for model training.

Why Outsourcing Makes Sense for Segmentation Projects

Semantic segmentation annotation is uniquely time-consuming. Annotators must trace precise boundaries around irregular shapes at pixel resolution. A single complex image can take 20-30 minutes to annotate properly.

Research from arXiv shows that over the past two years, approximately 20% of exhibitors at major computer vision conferences were annotation providers—highlighting how critical specialized vendors have become for real-world applications.

The economics favor outsourcing for most teams. Building annotation capacity in-house requires recruiting, training, managing annotators, and maintaining QA systems. For project-based work or variable annotation volumes, paying for external capacity as needed proves more efficient than carrying fixed overhead.

Outsourcing vs. In-House Annotation: Making the Decision

Not every situation calls for outsourcing. The decision depends on project requirements, budget, timeline, and data sensitivity.

When In-House Annotation Makes Sense

Build internal annotation capacity when data security is paramount. Healthcare organizations handling protected health information, defense contractors working with classified imagery, or companies with proprietary trade secrets often can't risk external data sharing.

In-house teams also excel when annotation requirements change frequently. Direct communication with annotators speeds up iteration cycles when refining object categories or boundary definitions.

Long-term projects with steady annotation volumes may justify the investment. Once trained, internal annotators develop deep domain knowledge that improves quality over time.

The Case for Outsourcing

Outsourcing wins for projects with variable or unpredictable annotation needs. Paying for capacity as needed avoids carrying idle resources during slow periods.

Speed to market favors external vendors. Annotation companies maintain trained workforces ready to scale. Spinning up 50 annotators takes days instead of months.

Cost efficiency improves dramatically at scale. Professional annotation services leverage specialized tools, quality assurance systems, and process optimization that would be expensive to replicate internally.

Studies on the global annotation workforce indicate that while demographics vary by region, entry-level annotators in major hubs typically have secondary education or vocational training, with female participation rates varying between 35-45% depending on the country.

Types of Semantic Segmentation Outsourcing Providers

The annotation market includes several distinct provider types, each with different strengths.

Dedicated Annotation Companies

These specialized firms focus exclusively on data labeling. They maintain trained annotation workforces, proprietary QA systems, and often develop custom tooling for complex segmentation tasks.

Quality tends to be high because annotation companies employ internal QA mechanics and structured training programs. Account managers provide single points of contact for project management.

The tradeoff is cost. Professional annotation services charge premium rates but deliver consistent quality at scale.

Crowdsourcing Platforms

Crowdsourcing distributes annotation tasks across large networks of distributed workers. Platforms handle task distribution, payment, and basic quality controls.

Research from arXiv notes that crowdsourcing platforms usually do not employ internal QA mechanics similar to annotation companies. Quality depends heavily on task design and redundancy (having multiple workers label the same data).

Crowdsourcing works best for simpler segmentation where objects have clear boundaries. Complex medical imaging or nuanced industrial inspection typically requires more specialized expertise.

Managed Service Providers

Full-service providers handle everything from data strategy through model deployment. They combine annotation services with machine learning expertise, helping design labeling schemas and validate model performance.

This end-to-end approach costs more but reduces internal resource requirements. Teams lacking computer vision expertise benefit most from managed services.

Choosing the Right Semantic Segmentation Vendor

Vendor selection determines project success more than any other factor. The right partner delivers quality data on time and budget. The wrong one wastes months and resources.

Technical Capabilities to Evaluate

Start with tooling. Quality segmentation requires specialized annotation software supporting polygon drawing, magic wand selection, and interpolation across video frames. Ask vendors what platforms they use—CVAT, Labelbox, V7, or proprietary systems.

Verify the vendor handles your data types. Image segmentation differs from video annotation. Video data annotation involves tracking objects across frames, handling occlusion, and maintaining consistency over time.

Check whether they support your file formats and can integrate with your ML pipeline. Exporting annotations in COCO format, PASCAL VOC, or custom JSON schemas should be straightforward.

Quality Assurance Systems

Quality management separates professional annotation companies from amateur operations. Strong vendors implement multi-layer QA:

First-pass annotation by trained labelers, followed by peer review catching obvious errors, then expert review for edge cases and complex scenarios, and finally automated validation checking for technical errors like overlapping polygons or missed regions.

Ask vendors about their QA metrics. Agreement scores, error rates per image, and revision cycles indicate process maturity.

IEEE research on quality management of datasets for medical artificial intelligence emphasizes the importance of structured QA practices, particularly for high-stakes applications.

Domain Expertise and Training

Generic annotation skills don't transfer to specialized domains. Medical image segmentation requires understanding anatomy. Autonomous vehicle datasets need annotators who recognize edge cases in traffic scenarios.

Evaluate how vendors train annotators for your domain. Look for structured onboarding programs, certification processes, and ongoing feedback mechanisms.

Research from arXiv shows that depending on project size, annotation centers may employ specialists with domain knowledge rather than just general labelers.

Security and Compliance

Data security matters even for non-sensitive projects. Vendors should offer secure data transfer protocols, encrypted storage, and access controls limiting who sees your data.

For regulated industries, verify compliance certifications. HIPAA for healthcare data, SOC 2 for general security, ISO 27001 for information security management.

Understand where annotation happens geographically. Some companies require data stays in specific jurisdictions for regulatory reasons.

Pricing Models and Cost Structure

Annotation vendors use several pricing approaches. Per-image pricing works for consistent complexity—each image costs a fixed amount regardless of annotation time. Per-pixel pricing scales with segmentation complexity. Hourly rates give vendors flexibility but make budgeting harder.

Request detailed quotes including all costs. Some vendors charge separately for project management, QA reviews, or format conversions.

Volume discounts significantly reduce per-image costs. Negotiate pricing tiers based on total project size.

Get the Right Team for Your Semantic Segmentation Project

Semantic segmentation requires engineers, data annotators, and validation specialists who understand both computer vision and your domain. Many teams slow down because of turnover or poor hiring fit. NeoWork helps you build a dedicated, long-term remote team for AI and data work. Their 91% annualized retention rate means teammates stay through long development cycles, and their 3.2% candidate selectivity rate means you spend time only with vetted professionals.

If you need reliable resources that can keep your semantic segmentation work moving without constant rehiring, contact NeoWork to start building a team that matches your technical needs and timeline.

Managing Quality in Outsourced Segmentation Projects

Quality problems in training data directly impact model performance. Garbage in, garbage out applies brutally to computer vision.

Setting Clear Annotation Guidelines

Ambiguity kills quality. Annotators need crystal-clear instructions about what to label and how to handle edge cases.

Document guidelines thoroughly. Include visual examples showing correct and incorrect annotations. Address common edge cases—how to handle partial occlusion, reflections, shadows, or ambiguous boundaries.

Specify technical requirements. Should annotators trace exact edges or smooth curves? How to handle objects touching frame boundaries? What level of detail for small objects?

Implementing Gold Standard Datasets

Gold standard datasets provide objective quality benchmarks. Expert annotators label a subset of images with maximum care. These become ground truth for evaluating vendor output.

Mix gold standard images into vendor batches without identifying them. Measure vendor accuracy against known correct labels. This catches quality drift before it affects large volumes.

Refresh gold standards periodically. Edge cases discovered during annotation should be added to maintain relevance.
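A minimal Python sketch of the blind-mixing idea described above. The function names, the 5% gold fraction, and the representation of masks as nested lists of class IDs are all assumptions for illustration, not part of any vendor's tooling.

```python
import random

def build_batch(production_ids, gold_ids, gold_fraction=0.05, seed=0):
    """Mix hidden gold images into a production batch and shuffle the result.

    gold_fraction is a hypothetical default; pick a value that fits your budget.
    """
    rng = random.Random(seed)
    n_gold = 0
    if gold_ids:
        # Inject at least one gold image, but never more than are available.
        n_gold = min(len(gold_ids), max(1, round(len(production_ids) * gold_fraction)))
    hidden = rng.sample(list(gold_ids), n_gold)
    batch = list(production_ids) + hidden
    rng.shuffle(batch)  # the vendor should not be able to spot gold items by position
    return batch, set(hidden)

def gold_accuracy(vendor_masks, gold_masks, hidden_ids):
    """Fraction of pixels on the hidden gold images where the vendor label matches gold."""
    correct = total = 0
    for image_id in hidden_ids:
        for v_row, g_row in zip(vendor_masks[image_id], gold_masks[image_id]):
            for v, g in zip(v_row, g_row):
                correct += (v == g)
                total += 1
    return correct / total if total else 0.0
```

Tracking `gold_accuracy` per batch over time is one way to spot quality drift early; a per-class breakdown would be a natural extension.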

Multi-Layer Quality Review

Single-pass annotation produces error rates too high for most applications. Layered review improves quality:

- Automated validation catches technical errors. Scripts check for unlabeled regions, overlapping polygons, invalid class assignments, or inconsistent object boundaries.

- Peer review by other annotators identifies obvious mistakes faster than expert review. Quality control research indicates that peer validation significantly improves annotation accuracy.

- Expert review focuses on complex edge cases and domain-specific accuracy. Medical imaging or specialized industrial applications require subject matter experts validating annotations.
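The automated layer can be as simple as a script run on every delivered mask. The sketch below assumes masks arrive as rasters (nested lists of integer class IDs) and that 255 marks unlabeled pixels; both are assumptions, so adapt them to your export format. Overlapping-polygon checks apply to polygon exports and are not covered here, since a raster mask holds only one class per pixel.

```python
def validate_mask(mask, valid_classes, unlabeled=255):
    """Return a list of human-readable problems found in one label mask.

    mask: list of rows, each a list of integer class IDs (one per pixel).
    unlabeled: hypothetical sentinel value for pixels the annotator skipped.
    """
    if not mask or not mask[0]:
        return ["mask is empty"]

    problems = []
    width = len(mask[0])
    if any(len(row) != width for row in mask):
        problems.append("rows have inconsistent widths")

    # Count pixels that were never assigned a class.
    unlabeled_count = sum(row.count(unlabeled) for row in mask)
    if unlabeled_count:
        problems.append(f"{unlabeled_count} unlabeled pixels")

    # Any class ID outside the agreed label set is an invalid assignment.
    invalid = {p for row in mask for p in row} - set(valid_classes) - {unlabeled}
    if invalid:
        problems.append(f"invalid class IDs: {sorted(invalid)}")
    return problems
```

An empty list means the mask passed these checks; it says nothing about whether the boundaries are actually correct, which is what the peer and expert layers are for.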

Measuring and Tracking Quality Metrics

Track key quality indicators throughout projects. Agreement between annotators (inter-annotator agreement) should exceed 90% for simple segmentation, though complex domains may see lower scores.
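One way to compute agreement between two annotators who labeled the same image is shown below. Raw pixel agreement is the simplest measure; Cohen's kappa is included as a commonly used chance-corrected alternative, which matters when one class (such as background) dominates the image. The function names and the nested-list mask format are assumptions for this sketch.

```python
from collections import Counter

def _flatten(mask):
    return [p for row in mask for p in row]

def pixel_agreement(mask_a, mask_b):
    """Fraction of pixels where two annotators assigned the same class."""
    flat_a, flat_b = _flatten(mask_a), _flatten(mask_b)
    if not flat_a or len(flat_a) != len(flat_b):
        raise ValueError("masks must be non-empty and the same size")
    return sum(a == b for a, b in zip(flat_a, flat_b)) / len(flat_a)

def cohens_kappa(mask_a, mask_b):
    """Agreement corrected for the agreement expected by chance."""
    flat_a, flat_b = _flatten(mask_a), _flatten(mask_b)
    observed = pixel_agreement(mask_a, mask_b)
    n = len(flat_a)
    count_a, count_b = Counter(flat_a), Counter(flat_b)
    # Chance agreement: probability both annotators pick the same class independently.
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical class everywhere
    return (observed - expected) / (1 - expected)
```

For the toy masks `[[0, 0], [1, 1]]` and `[[0, 0], [1, 0]]`, pixel agreement is 0.75 while kappa is 0.5, which illustrates how much of the raw score chance can account for.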

Monitor revision rates. How often do QA reviewers reject or modify annotations? Rising revision rates indicate training gaps or guideline ambiguities.
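A small sketch of revision-rate tracking, assuming you log weekly counts of reviewed and revised annotations. The 2-percentage-point tolerance is an arbitrary illustrative threshold, not a recommended standard.

```python
def revision_rate(reviewed, revised):
    """Share of reviewed annotations that QA rejected or modified."""
    return revised / reviewed if reviewed else 0.0

def rising_weeks(weekly_counts, tolerance=0.02):
    """Return indexes of weeks whose revision rate rose by more than `tolerance`
    compared with the previous week.

    weekly_counts: list of (reviewed, revised) tuples, one per week.
    """
    rates = [revision_rate(reviewed, revised) for reviewed, revised in weekly_counts]
    return [i for i in range(1, len(rates)) if rates[i] - rates[i - 1] > tolerance]
```

A flagged week is a prompt to look at the guidelines and recent annotator onboarding, not proof of a problem on its own.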

Measure actual model performance on validation sets. Ultimately, annotation quality shows up in model metrics like IoU (Intersection over Union) or pixel accuracy.
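For reference, IoU for a class is the number of pixels that both masks assign to that class divided by the number of pixels that either mask assigns to it. The sketch below computes it per class on nested-list masks; the function names are illustrative, and production code would typically use an array library instead of Python loops.

```python
def per_class_iou(pred, truth, classes):
    """Intersection over Union for each class; None when the class is absent from both masks."""
    ious = {}
    for c in classes:
        intersection = union = 0
        for p_row, t_row in zip(pred, truth):
            for p, t in zip(p_row, t_row):
                in_pred, in_truth = (p == c), (t == c)
                intersection += in_pred and in_truth
                union += in_pred or in_truth
        ious[c] = intersection / union if union else None
    return ious

def mean_iou(ious):
    """Average IoU over the classes that actually appear."""
    present = [v for v in ious.values() if v is not None]
    return sum(present) / len(present) if present else 0.0
```

Skipping absent classes in the mean is a design choice made here for simplicity; some benchmarks handle them differently, so match whatever convention your evaluation uses.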

Practical Steps to Launch Segmentation Outsourcing

Knowing what to look for matters less than executing a structured launch process.

Phase 1: Preparation

Start by curating a representative sample dataset. Select 200-500 images covering the full range of scenarios, lighting conditions, object types, and edge cases your model will encounter.

Write comprehensive annotation guidelines. Include object definitions, boundary rules, edge case handling, and quality criteria. Add visual examples liberally.

Define success metrics. What accuracy level is acceptable? What timeline and budget constraints exist?

Phase 2: Vendor Evaluation

Reach out to 3-5 candidate vendors. Share your sample dataset and guidelines. Request proposals including pricing, timeline, and QA approach.

Run pilot projects with the top 2-3 vendors. Assign the same 100-200 images to each. This enables direct quality comparison.

Evaluate pilot results rigorously. Check annotation accuracy against your gold standards. Assess how well vendors handled edge cases. Review communication responsiveness.

Phase 3: Scaling Production

Start production with modest volumes. Even the best pilot doesn't reveal all potential issues. Begin with 500-1000 images while monitoring quality closely.

Establish regular communication cadences. Weekly calls with vendor project managers catch problems early. Create shared documentation for tracking edge cases and guideline updates.

Scale gradually as quality stabilizes. Double annotation volumes every 2-3 weeks if quality metrics remain solid.
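To see what that doubling cadence implies for a timeline, here is a small planning sketch. The starting volume, target volume, and two-week step are inputs you would choose; the function name is hypothetical, and the schedule assumes quality holds at every step.

```python
def scaling_schedule(start_volume, target_volume, weeks_per_step=2):
    """Return (week, batch_size) pairs, doubling each step until the target is reached."""
    if start_volume <= 0:
        raise ValueError("start_volume must be positive")
    schedule = []
    week, volume = 0, start_volume
    while volume < target_volume:
        schedule.append((week, volume))
        volume *= 2
        week += weeks_per_step
    schedule.append((week, target_volume))  # cap the final step at the target
    return schedule
```

Starting at 500 images and doubling every two weeks reaches an 8,000-image batch at week 8. Any step where metrics slip should pause the doubling, which this simple schedule does not model.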

Phase 4: Continuous Improvement

Analyze systematic errors. If multiple annotators make the same mistakes, guidelines need clarification or training needs enhancement.
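One way to surface systematic errors is to count which class confusions recur across annotators on the same gold image. The sketch below assumes nested-list masks and a dictionary keyed by annotator ID; those structures and the function names are assumptions for illustration.

```python
from collections import Counter

def confusion_pairs(vendor_mask, gold_mask):
    """Count (gold_class, vendor_class) pairs for every mislabeled pixel."""
    pairs = Counter()
    for v_row, g_row in zip(vendor_mask, gold_mask):
        for v, g in zip(v_row, g_row):
            if v != g:
                pairs[(g, v)] += 1
    return pairs

def shared_confusions(masks_by_annotator, gold_mask, min_annotators=2):
    """Return confusions made by at least `min_annotators` different annotators."""
    seen_by = Counter()
    for mask in masks_by_annotator.values():
        # Count each confusion once per annotator, regardless of pixel count.
        for pair in confusion_pairs(mask, gold_mask):
            seen_by[pair] += 1
    return sorted(pair for pair, n in seen_by.items() if n >= min_annotators)
```

A confusion that shows up for several annotators (for example, sidewalk labeled as road) points to a guideline gap, while one that is unique to a single annotator points to individual training.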

Update documentation based on real-world challenges. Edge cases discovered during annotation should be added to guidelines with resolution instructions.

Provide regular feedback to vendors. Share quality metrics, highlight excellent work, and discuss areas needing improvement.

Cost Optimization Strategies

Annotation costs can balloon quickly at scale. Smart strategies reduce spending without sacrificing quality.

Active Learning Reduces Annotation Volume

Active learning uses partially-trained models to identify which images most need annotation. The model annotates easy examples automatically. Humans focus on edge cases where the model is uncertain.

This approach can potentially reduce annotation costs by 40-60%. The tradeoff is added technical complexity and longer project timelines.
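A common way to find the images where the model is uncertain is entropy-based uncertainty sampling, sketched below. This is one selection strategy among several, not necessarily the one a given vendor or tool uses. It assumes the model outputs a probability distribution over classes for each pixel; the data layout and function names are hypothetical.

```python
import math

def pixel_entropy(probs):
    """Shannon entropy of one pixel's class probabilities (higher means less certain)."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def image_uncertainty(prob_map):
    """Mean per-pixel entropy. prob_map: list of per-pixel probability lists."""
    return sum(pixel_entropy(p) for p in prob_map) / len(prob_map)

def select_for_annotation(prob_maps, budget):
    """Return the `budget` image IDs the model is least certain about."""
    ranked = sorted(
        prob_maps,
        key=lambda image_id: image_uncertainty(prob_maps[image_id]),
        reverse=True,
    )
    return ranked[:budget]
```

Images that fall below the cut are candidates for model-generated labels with light human spot checks, while the selected ones go to annotators first.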

Pre-Annotation Improves Efficiency

Use existing models or simple algorithms to create rough annotations. Annotators correct and refine rather than starting from scratch.

Pre-annotation works best when rough approximations are decent—existing models in the same domain or simple algorithms like color-based segmentation for high-contrast objects.
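As an illustration of the "simple algorithm" case, the sketch below pre-labels a grayscale image with a fixed intensity threshold. It is a deliberately crude stand-in for color-based segmentation, assuming a bright object on a dark background; the threshold of 128 and the class IDs are arbitrary.

```python
def threshold_preannotate(gray_image, threshold=128, foreground=1, background=0):
    """Create a rough mask: pixels at or above `threshold` become foreground.

    gray_image: list of rows of 0-255 intensity values.
    """
    return [
        [foreground if px >= threshold else background for px in row]
        for row in gray_image
    ]
```

The output is only a starting point for annotators to correct. If they spend longer fixing the rough mask than they would drawing from scratch, the pre-annotation step is not paying for itself.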

Tiered Quality for Different Data Subsets

Not all training data requires perfect annotation. Consider using different quality tiers:

- High-quality annotation for validation and test sets where accuracy is critical.

- Medium-quality annotation for the bulk of training data where some noise is acceptable.

- Lower-quality crowdsourced annotation for supplementary data that increases dataset diversity.

This approach balances cost with model performance requirements.

Volume Commitments Unlock Discounts

Vendors offer significant discounts for volume commitments. Committing to 50,000 images might reduce per-image costs by 30-40% compared to small batches.

The risk is overcommitting before validating model performance. Start with pilot volumes, then negotiate long-term pricing once confident in the approach.
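The overcommitment risk can be put in numbers with a simple break-even calculation. The sketch assumes a commitment is paid in full whether or not every image is used, and the $2.00 base price in the usage note is purely hypothetical.

```python
def committed_cost(n_committed, base_price, discount):
    """Total cost of a volume commitment at a fractional discount (e.g. 0.30 for 30%)."""
    return n_committed * base_price * (1 - discount)

def break_even_volume(n_committed, discount):
    """Number of images you must actually need before the commitment
    beats paying the undiscounted per-image price as you go."""
    return n_committed * (1 - discount)

def commitment_is_cheaper(n_needed, n_committed, base_price, discount):
    """Compare paying full price for n_needed images against the full commitment."""
    return committed_cost(n_committed, base_price, discount) < n_needed * base_price
```

With a 50,000-image commitment at a 30% discount, the break-even point is about 35,000 images: if the project ends up needing fewer than that, the undiscounted small-batch price would have been cheaper. At a hypothetical $2.00 base price, the commitment costs roughly $70,000 either way.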

Common Pitfalls and How to Avoid Them

Even experienced teams stumble when outsourcing segmentation annotation. These problems show up repeatedly.

Insufficient Guidelines Lead to Inconsistency

Ambiguous instructions produce inconsistent annotations. Different annotators interpret edge cases differently, introducing noise into training data.

The fix is ruthless specificity. Document every edge case. When annotators ask questions, update guidelines immediately with the answer.

Skipping Pilot Projects

Jumping straight to full production without testing vendors is expensive gambling. Quality problems discovered after annotating 10,000 images mean rework and delays.

Always run pilots. The small upfront investment prevents vastly larger downstream costs.

Weak Communication Channels

Annotation projects involve constant micro-decisions about edge cases and guideline interpretations. Slow communication creates bottlenecks and quality drift.

Establish fast communication channels. Shared Slack channels, regular video calls, and responsive project managers keep things moving.

Neglecting Validation Set Quality

Teams sometimes outsource training data annotation but handle test sets internally to control quality. This makes sense—but test sets still need the same rigor.

Apply equal or higher quality standards to validation and test data. Model evaluation depends on these golden datasets being correct.

Video Segmentation: Special Considerations

Video annotation multiplies complexity compared to static images. Objects move, get occluded, change appearance, and interact across frames.

Video data annotation requires tracking objects across frames while maintaining consistent boundaries. When a car passes behind a tree, annotators must handle the occlusion correctly and re-identify the same vehicle when it emerges.

Interpolation features help—annotators label keyframes and software interpolates intermediate frames. But quality checking interpolated frames remains essential. Motion blur, rapid direction changes, or occlusion often break interpolation algorithms.
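The simplest form of keyframe interpolation moves each polygon vertex in a straight line between two labeled frames, as sketched below. Real annotation tools may use more sophisticated tracking; this linear version is an assumption chosen to show why fast or non-linear motion breaks interpolated labels.

```python
def interpolate_polygon(poly_start, poly_end, frame_start, frame_end, frame):
    """Linearly interpolate polygon vertices between two keyframes.

    poly_start / poly_end: lists of (x, y) vertices in matching order.
    """
    if len(poly_start) != len(poly_end):
        raise ValueError("keyframe polygons must have the same number of vertices")
    if frame_start >= frame_end or not frame_start <= frame <= frame_end:
        raise ValueError("frame must lie between two distinct keyframes")
    # t runs from 0.0 at the first keyframe to 1.0 at the second.
    t = (frame - frame_start) / (frame_end - frame_start)
    return [
        (x0 + t * (x1 - x0), y0 + t * (y1 - y0))
        for (x0, y0), (x1, y1) in zip(poly_start, poly_end)
    ]
```

Because every vertex is assumed to travel at constant speed in a straight line, an object that turns, accelerates, or disappears behind something between keyframes will drift away from the interpolated outline. Reviewers should sample intermediate frames, and annotators should add keyframes wherever motion changes.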

Video annotation often costs 1.5-2.5x more per frame than static images when using temporal interpolation, though the total effort per second of video is significantly higher.

Industry-Specific Applications

Semantic segmentation use cases span industries, each with unique requirements.

Autonomous Vehicles

Self-driving systems need pixel-perfect segmentation of roads, vehicles, pedestrians, traffic signs, and obstacles. Edge cases like unusual weather conditions, construction zones, or rare object types require extensive annotation coverage.

Safety criticality demands extremely high accuracy. Small annotation errors in pedestrian detection could have life-or-death consequences.

Medical Imaging

Radiological image segmentation identifies tumors, organs, lesions, or anatomical structures. Medical annotation requires domain expertise—general annotators can't distinguish normal from pathological tissue.

Healthcare applications face strict regulatory requirements. HIPAA compliance, data encryption, and audit trails are mandatory.

Agricultural Technology

Precision agriculture uses segmentation to identify crop types, detect diseases, assess plant health, and optimize resource application. Drone imagery and satellite data require handling large-scale datasets.

Retail and E-commerce

Product segmentation enables virtual try-on, automated background removal, and visual search. Fashion applications need accurate clothing item segmentation from diverse images.

Looking Forward: The Future of Segmentation Outsourcing

The annotation industry continues evolving rapidly. Several trends are reshaping how teams approach segmentation outsourcing.

AI-assisted annotation tools improve efficiency. Models pre-annotate images with increasing accuracy, reducing human annotation time. The human role shifts toward quality review and edge case handling rather than pixel-by-pixel labeling.

Specialized domain vendors emerge for verticals like medical imaging, agricultural technology, and industrial inspection. Deep domain expertise delivers higher quality than generalist annotators.

Pricing models shift toward outcome-based contracts where vendors are paid for model performance rather than annotation volume. This aligns incentives around quality and efficiency rather than just throughput.

But the fundamental challenge remains—training computer vision models requires massive amounts of accurately labeled data. Outsourcing will continue as the pragmatic solution for most teams lacking internal annotation infrastructure.

Conclusion: Making Outsourcing Work

Semantic segmentation outsourcing succeeds when teams approach it strategically rather than transactionally. The lowest-cost vendor rarely delivers the best value. Quality, communication, and scalability matter more than shaving pennies per image.

Start with clear requirements. Understand exactly what quality level, timeline, and budget constraints define success. Invest time in comprehensive annotation guidelines—ambiguity multiplies costs through rework.

Run pilot projects before committing to large volumes. Testing vendors with real data reveals far more than proposals and sales conversations. The investment in pilots prevents expensive mistakes at scale.

Treat vendors as partners rather than commodity suppliers. Regular communication, feedback, and collaborative problem-solving produce better outcomes than purely transactional relationships.

The computer vision models powering autonomous vehicles, medical diagnostics, and industrial automation depend entirely on training data quality. Getting annotation right is getting the foundation of AI right.

Ready to outsource your semantic segmentation project? Start by curating a representative sample dataset and drafting initial annotation guidelines. Then reach out to 3-5 potential vendors with relevant domain experience. The time invested in proper vendor selection pays dividends throughout your project lifecycle.
