Text Annotation Outsourcing Guide (2026)

Text annotation outsourcing involves partnering with specialized vendors to label datasets for AI and machine learning projects. Companies outsource to access expert annotators, scale quickly, reduce costs significantly, and maintain quality through multi-layer review processes, while retaining control via SLAs and quality protocols.

Building accurate AI models demands one critical ingredient that most teams underestimate: properly annotated training data. But here's the thing—managing annotation projects in-house quickly becomes resource-intensive, complex, and expensive.

Text annotation outsourcing has become the go-to strategy for companies that need to scale machine learning models without derailing their roadmap. The right partner brings domain expertise, established quality protocols, and flexible capacity that adapts to project demands.

This guide breaks down everything teams need to know about outsourcing text annotation services, from vendor evaluation to quality assurance frameworks.

What Text Annotation Services Actually Do

Text annotation is the process of labeling textual data so machine learning models can understand patterns, context, and meaning. Think of it as teaching an AI to read between the lines.

Annotation types vary based on the model's purpose. Sentiment analysis requires labeling text as positive, negative, or neutral. Named entity recognition involves tagging people, organizations, locations, and dates within documents. Intent classification labels user queries by what action they're requesting.
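To make these annotation types concrete, here is a minimal sketch of how a single labeled record might be represented. The class names, field names, label set, and sample sentence are all illustrative assumptions, not a format any particular vendor uses.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class EntitySpan:
    start: int  # character offset where the entity begins (inclusive)
    end: int    # character offset where the entity ends (exclusive)
    label: str  # hypothetical label set: PERSON, ORG, LOCATION, DATE


@dataclass
class AnnotatedText:
    text: str
    sentiment: Optional[str] = None  # "positive", "negative", or "neutral"
    intent: Optional[str] = None     # e.g. "cancel_subscription"
    entities: List[EntitySpan] = field(default_factory=list)

    def entity_texts(self) -> List[Tuple[str, str]]:
        """Return (surface text, label) pairs for every tagged span."""
        return [(self.text[e.start:e.end], e.label) for e in self.entities]


def span(text: str, phrase: str, label: str) -> EntitySpan:
    """Build a span by locating the first occurrence of phrase in text."""
    start = text.index(phrase)
    return EntitySpan(start, start + len(phrase), label)


sentence = "Maria Lopez joined Acme Corp in Berlin on 3 March 2024."
record = AnnotatedText(
    text=sentence,
    sentiment="neutral",
    entities=[
        span(sentence, "Maria Lopez", "PERSON"),
        span(sentence, "Acme Corp", "ORG"),
        span(sentence, "Berlin", "LOCATION"),
        span(sentence, "3 March 2024", "DATE"),
    ],
)
print(record.entity_texts())
```

Storing entities as character offsets rather than copied strings keeps the labels tied to the exact position in the source text, which matters when the same word appears more than once.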

Other common annotation tasks include text categorization, relationship extraction, part-of-speech tagging, and semantic annotation. Each task demands different expertise levels and quality control mechanisms.

Specialized providers handle these tasks at scale. Many vendors advertise accuracy targets of up to 99%, pursued through multiple review layers and established QA protocols. Their domain experts can start work on day one, without lengthy onboarding or training cycles.

Why Companies Choose to Outsource Annotation

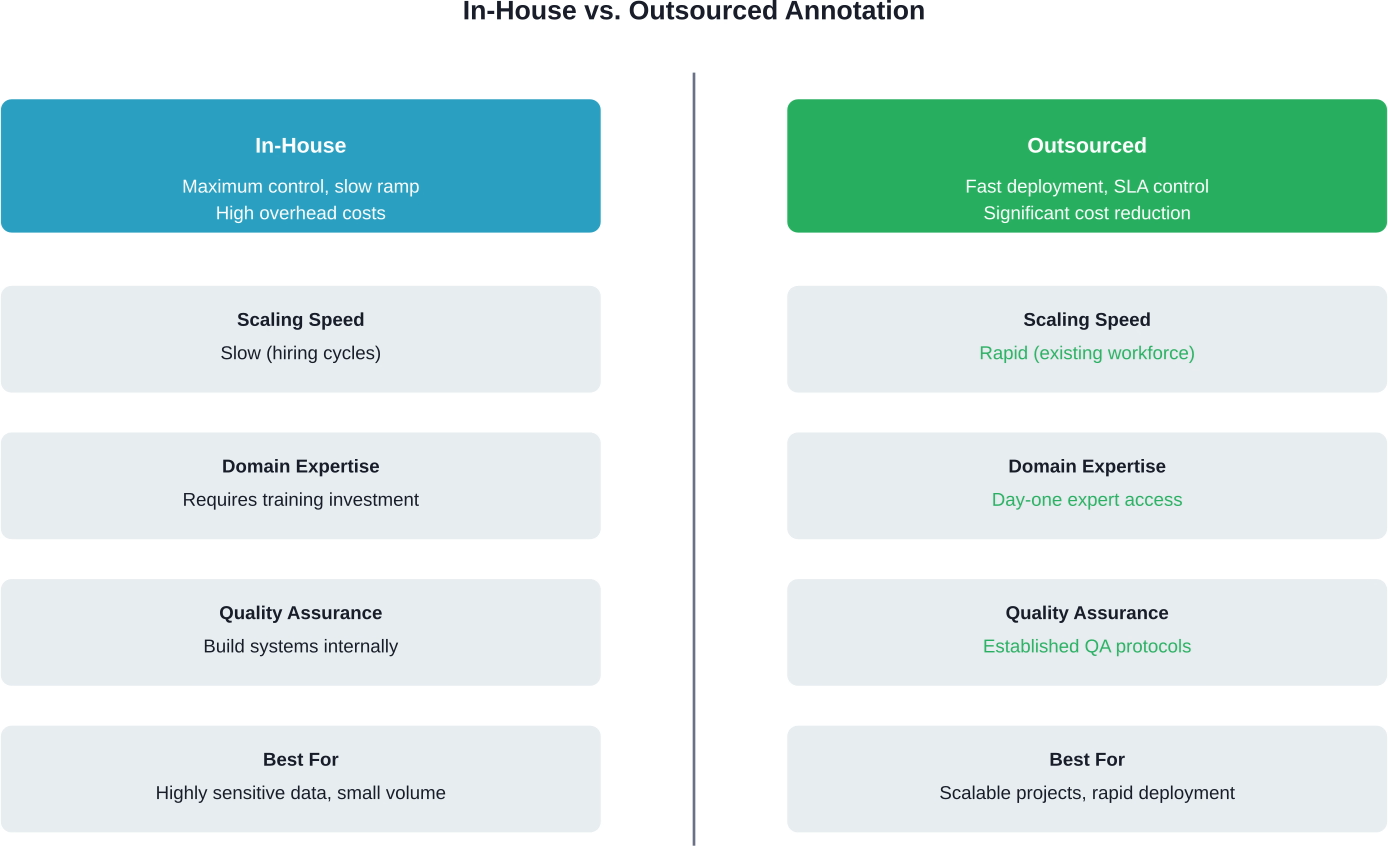

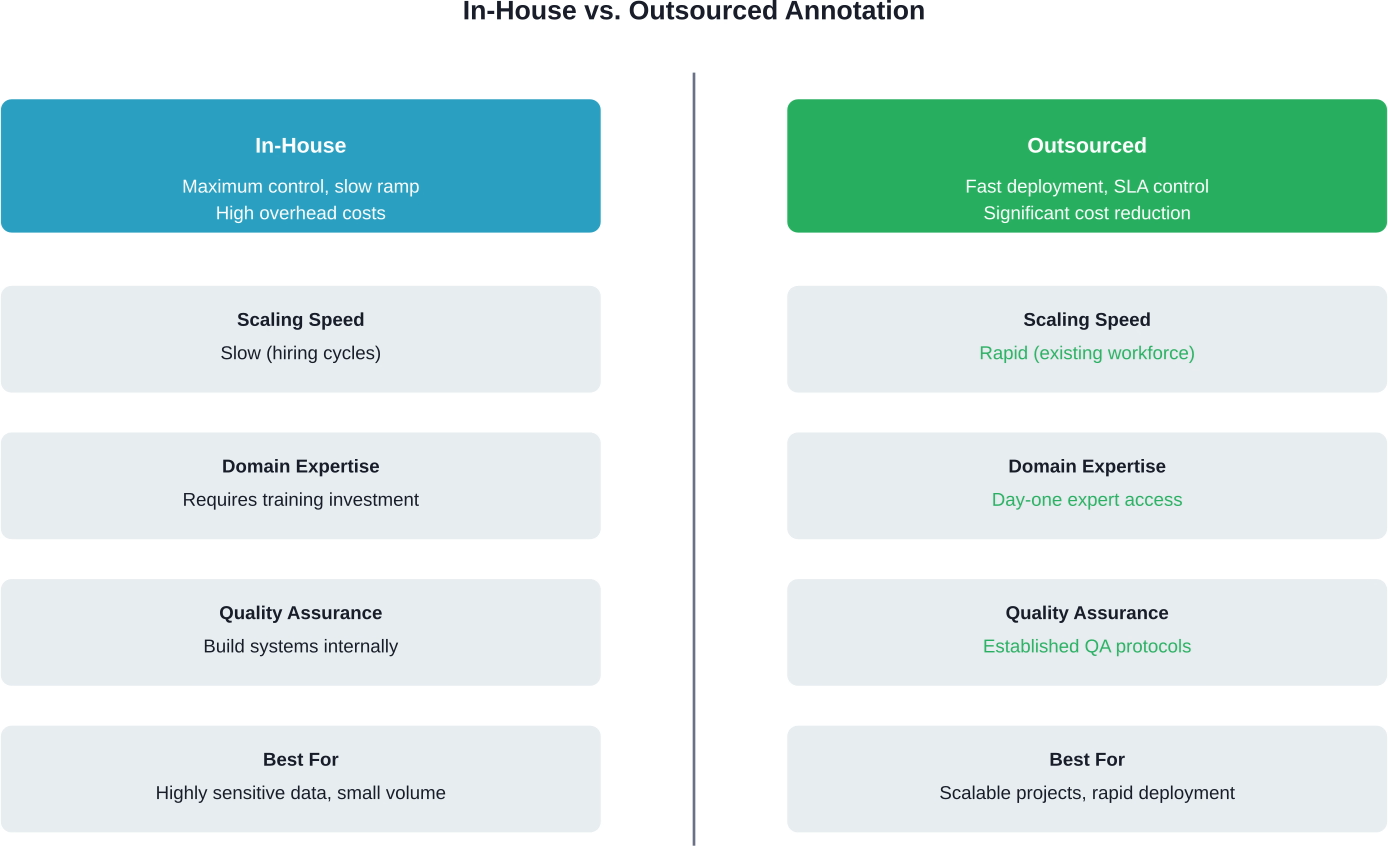

The strategic advantages of outsourcing become clear when examining the alternative. Building an in-house annotation team means recruiting, training, managing workflow, implementing quality controls, and maintaining infrastructure.

Cost reduction ranks among the top benefits. Outsourcing eliminates overhead associated with full-time employees—benefits, workspace, equipment, and ongoing training. Vendors typically offer faster turnarounds than in-house approaches.

Scalability solves another critical challenge. Machine learning projects rarely maintain consistent annotation needs. Teams might need 10 annotators this month and 100 the next. Outsourced providers absorb that variability without forcing companies to hire temporary staff or maintain unused capacity.

Access to expertise matters more than teams initially realize. Quality annotation requires deep understanding of linguistic nuances, domain-specific terminology, and edge cases. Established vendors have already solved these problems across hundreds of projects.

How Annotation Outsourcing Actually Works

The process starts with project scoping and guideline creation. Vendors work with client teams to define labeling rules, edge case handling, and quality targets. Clear guidelines prevent inconsistent annotations that derail model training.

Guideline-centered annotation methodologies focus on documenting the annotation process itself—capturing decisions, ambiguities, and resolution patterns. This creates institutional knowledge that improves consistency across large annotation teams.

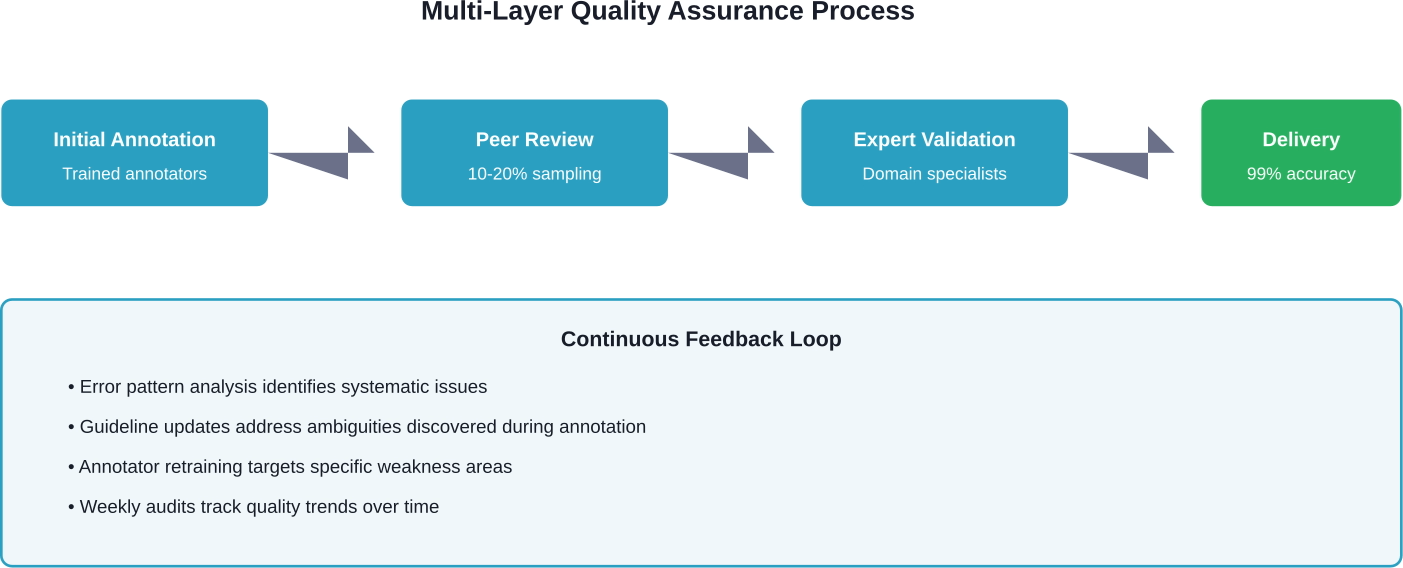

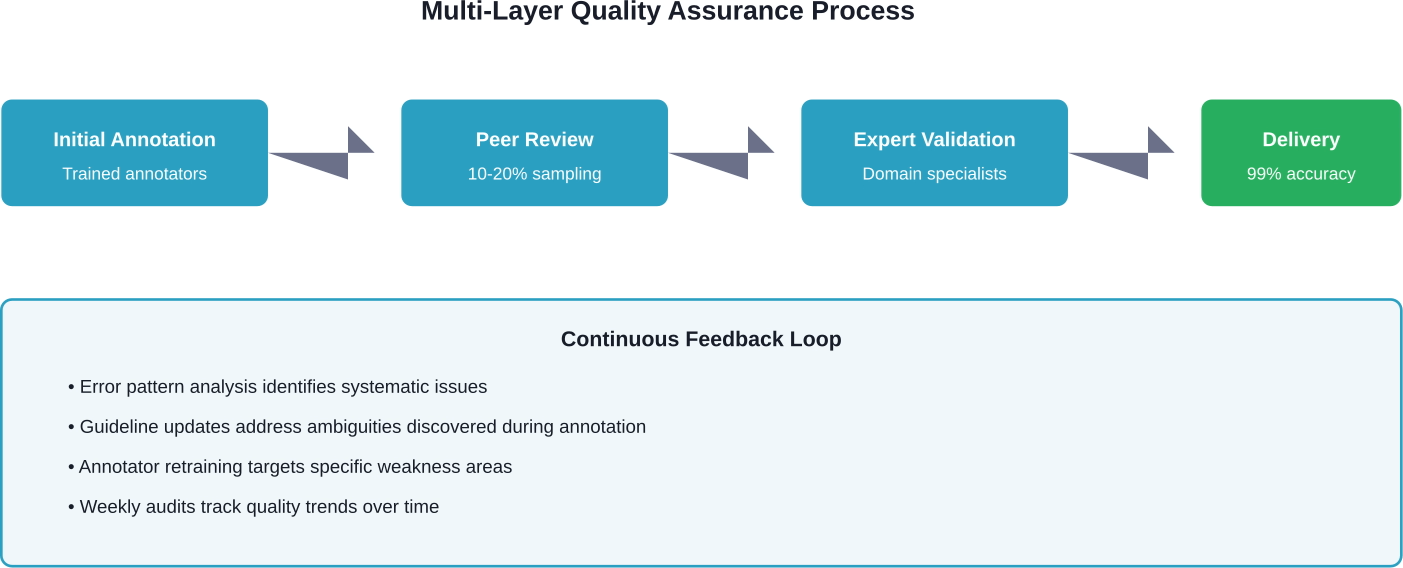

Quality assurance happens in multiple layers. Protocols often specify a review cadence, such as a weekly audit of a 10% sample, along with closed feedback loops for continuous improvement.

Workflow typically follows this pattern: raw data intake, task assignment to specialized annotators, initial labeling, peer review, expert validation, and final delivery. Each stage includes checkpoints that catch errors before they propagate through thousands of records.

But wait. What separates mediocre vendors from exceptional ones? The answer lies in how they handle edge cases, ambiguous examples, and evolving guidelines mid-project.

Scale With Structured Text Annotation Outsourcing

Text annotation demands attention to context, tone, and edge cases. NeoWork offers remote teams experienced in NLP labeling, classification, and evaluation tasks. Their 91% annualized teammate retention rate and 3.2% candidate selectivity rate help ensure long-term guideline adherence and minimal turnover. This reduces retraining cycles and improves model performance over time.

Ready to Improve Your NLP Data Quality?

Talk with NeoWork to:

- assemble a vetted text annotation team

- implement structured QA workflows

- scale multilingual or domain-specific projects

👉 Reach out to NeoWork to structure your text annotation outsourcing.

Security Considerations Teams Can't Ignore

Handing over training data to external vendors creates legitimate security concerns. Text datasets often contain proprietary information, customer communications, or sensitive business intelligence.

Strong vendors implement multi-layered security protocols. Data encryption during transfer and storage forms the baseline. Access controls ensure annotators only see data relevant to their assigned tasks. Audit trails track who accessed what data and when.

Non-disclosure agreements and data processing agreements establish legal protections. For regulated industries like healthcare or finance, vendors need to demonstrate compliance with the relevant frameworks, such as HIPAA, SOC 2, and GDPR.

Some projects require on-premise annotation or private cloud deployment. These models keep data within company infrastructure while still leveraging external annotation expertise. The tradeoff involves higher setup costs and longer deployment timelines.

Data Retention and Deletion Policies

Clear policies around data retention matter enormously. Contracts should specify exactly when and how vendors delete client data after project completion.

Automated deletion protocols work better than manual processes. Some vendors maintain zero-retention policies, where annotated data transfers back to clients immediately with no copies retained on vendor systems.
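As a rough illustration of what an automated check could look like on the client side, the sketch below flags projects whose contractual deletion deadline has passed. The record fields (`name`, `completed_on`, `retention_days`, `deleted`) and the sample projects are hypothetical; a real contract would define its own terms.

```python
from datetime import date, timedelta


def deletion_deadline(completed_on: date, retention_days: int) -> date:
    """Date by which the vendor must have deleted the client data."""
    return completed_on + timedelta(days=retention_days)


def overdue_for_deletion(projects, today: date):
    """Return names of projects past their deadline and not yet confirmed deleted."""
    return [
        p["name"]
        for p in projects
        if not p["deleted"]
        and today > deletion_deadline(p["completed_on"], p["retention_days"])
    ]


# Hypothetical project register maintained by the client.
projects = [
    {"name": "support-tickets", "completed_on": date(2025, 1, 10),
     "retention_days": 30, "deleted": False},
    {"name": "contracts", "completed_on": date(2025, 2, 20),
     "retention_days": 30, "deleted": False},
    {"name": "reviews", "completed_on": date(2025, 1, 1),
     "retention_days": 0, "deleted": True},  # zero-retention, already confirmed
]

print(overdue_for_deletion(projects, today=date(2025, 3, 1)))
```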

Choosing the Right Annotation Partner

Vendor selection determines project success more than any other factor. The market includes dozens of providers with vastly different capabilities, workforce models, and quality standards.

Delivery models vary significantly. Some vendors maintain full-time employed annotators. Others use crowd-sourced models with independent contractors. Hybrid approaches combine employed quality reviewers with flexible annotator pools.

Each model has tradeoffs. Employed workforces offer more stability and institutional knowledge but cost more and scale more slowly. Crowd models scale rapidly but introduce consistency challenges. Look for vendors whose workforce model matches the project's requirements.

Domain expertise becomes critical for specialized annotation. Medical text annotation requires annotators who understand clinical terminology. Legal document annotation needs familiarity with legal concepts and citation formats. Financial sentiment analysis benefits from annotators who grasp market dynamics.

Testing Before Committing

Pilot projects reveal vendor capabilities better than any sales presentation. Start with a small, well-defined annotation task with ground truth data.

Evaluate the results quantitatively—accuracy rates, consistency scores, turnaround times. But also assess the qualitative experience. How responsive was the team? How well did they handle questions and edge cases? Did they proactively identify guideline ambiguities?

Successful pilots typically involve 1,000-5,000 records: large enough to test workflows and quality systems, small enough to limit risk.

Quality Assurance Frameworks That Actually Work

Quality assurance can't be an afterthought. The best annotation vendors build QA into every workflow stage rather than treating it as a final inspection step.

Multi-annotator consensus provides one validation approach. The same data gets labeled by multiple independent annotators. Agreement rates reveal which examples are straightforward and which need guideline clarification.
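A minimal sketch of consensus labeling, assuming each item has been labeled by several annotators and that simple majority voting is acceptable. The 0.8 agreement threshold and the sample labels are illustrative assumptions.

```python
from collections import Counter


def consensus(labels):
    """Return (majority_label, agreement_rate) for one item's labels."""
    counts = Counter(labels)
    top_label, top_count = counts.most_common(1)[0]
    return top_label, top_count / len(labels)


def flag_for_review(items, min_agreement=0.8):
    """Return ids of items whose agreement rate falls below the threshold.

    items maps an item id to the list of labels it received.
    """
    return [
        item_id
        for item_id, labels in items.items()
        if consensus(labels)[1] < min_agreement
    ]


# Hypothetical labels from three independent annotators per item.
items = {
    "a": ["positive", "positive", "positive"],
    "b": ["positive", "negative", "neutral"],
    "c": ["negative", "negative", "neutral"],
}
print(flag_for_review(items))
```

Items that fall below the threshold are good candidates for expert adjudication, and recurring patterns among them often point to a gap in the guidelines.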

Expert review layers catch errors that peer review misses. Senior annotators or subject matter experts review a percentage of all annotations—often 10-20% of total volume. The specific percentage depends on task complexity and risk tolerance.

Statistical sampling enables quality monitoring without reviewing every record. Random sampling catches systematic errors. Stratified sampling ensures rare categories get adequate review attention.
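The sketch below shows one way to draw a stratified review sample so that rare labels are not crowded out by common ones. The 10% rate, the per-label floor of five records, and the `label` field name are assumptions chosen for illustration.

```python
import random
from collections import defaultdict


def stratified_sample(records, rate=0.1, min_per_label=5, seed=0):
    """Sample each label group separately, with a floor for rare labels."""
    rng = random.Random(seed)  # fixed seed so audits are reproducible
    by_label = defaultdict(list)
    for rec in records:
        by_label[rec["label"]].append(rec)

    sample = []
    for group in by_label.values():
        # Take rate * group size, but at least min_per_label,
        # and never more than the group actually contains.
        k = min(len(group), max(min_per_label, round(len(group) * rate)))
        sample.extend(rng.sample(group, k))
    return sample


# Hypothetical batch: one common label and one rare label.
records = [{"id": i, "label": "common"} for i in range(1000)]
records += [{"id": 1000 + i, "label": "rare"} for i in range(8)]

review = stratified_sample(records)
print(sum(r["label"] == "common" for r in review),
      sum(r["label"] == "rare" for r in review))
```

A purely random 10% draw from this batch would contain fewer than one rare record on average, which is why the per-label floor matters.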

Measuring Annotation Quality

Inter-annotator agreement quantifies consistency between annotators. Cohen's kappa (for two annotators) and Fleiss' kappa (for more than two) are statistical measures that correct for the agreement expected by chance.
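For two annotators, Cohen's kappa is (p_o - p_e) / (1 - p_e), where p_o is the observed agreement rate and p_e is the agreement expected by chance from each annotator's label frequencies. A small pure-Python sketch follows; the function name and sample labels are illustrative.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items in order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)

    # Observed agreement: share of items where both annotators match.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Chance agreement: product of each annotator's label proportions,
    # summed over labels.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)

    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


annotator_1 = ["pos", "pos", "neg", "neg"]
annotator_2 = ["pos", "neg", "neg", "neg"]
print(cohens_kappa(annotator_1, annotator_2))
```

Here the annotators agree on 3 of 4 items (p_o = 0.75), chance agreement is 0.5, and kappa comes out at 0.5. Raw agreement alone would overstate consistency.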

Accuracy against gold standard data offers another metric. Maintaining a set of expert-labeled examples allows ongoing validation of annotator performance. When accuracy drops below thresholds, it triggers retraining or guideline clarification.
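A threshold check of this kind might be sketched as follows. The 95% threshold, the annotator ids, and the data layout (item id mapped to label) are assumptions for illustration only.

```python
def gold_accuracy(annotations, gold):
    """Accuracy over the gold items this annotator actually labeled.

    Returns None when the annotator has labeled no gold items.
    """
    scored = [item_id for item_id in gold if item_id in annotations]
    if not scored:
        return None
    correct = sum(annotations[item_id] == gold[item_id] for item_id in scored)
    return correct / len(scored)


def needs_retraining(annotator_work, gold, threshold=0.95):
    """Return {annotator: accuracy} for annotators below the threshold."""
    flagged = {}
    for annotator, annotations in annotator_work.items():
        accuracy = gold_accuracy(annotations, gold)
        if accuracy is not None and accuracy < threshold:
            flagged[annotator] = accuracy
    return flagged


# Hypothetical expert-labeled gold set and two annotators' work.
gold = {1: "pos", 2: "neg", 3: "neu", 4: "pos"}
annotator_work = {
    "ann_a": {1: "pos", 2: "neg", 3: "neu", 4: "pos"},
    "ann_b": {1: "pos", 2: "pos", 3: "neu", 4: "neg"},
}
print(needs_retraining(annotator_work, gold))
```

In practice, gold items are usually mixed into the regular task queue so annotators cannot tell which records are being scored.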

Cost Structures and Pricing Models

Annotation pricing varies based on task complexity, volume, turnaround requirements, and quality standards. Simple classification tasks cost less per record than complex relationship extraction or semantic annotation.

Per-record pricing works for well-defined, consistent tasks. Vendors quote a fixed price per annotated item—per document, per sentence, per entity tagged. This model offers predictable budgeting but can create misaligned incentives around quality.

Hourly pricing makes sense for complex or exploratory projects where task requirements evolve. Teams pay for annotator time rather than output volume. This aligns incentives around quality but requires careful scope management.

Hybrid models combine base fees with volume pricing. Monthly minimums guarantee vendor capacity while per-record fees above the minimum create flexibility for volume fluctuations.
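To see how a hybrid model behaves as volume changes, here is a small worked sketch. It assumes the monthly minimum covers a fixed number of included records and that each record beyond that is billed at a flat fee. All figures are made up for illustration, and amounts are kept in integer cents to avoid rounding surprises.

```python
def hybrid_invoice_cents(records, monthly_minimum_cents,
                         included_records, per_record_fee_cents):
    """Monthly charge: the minimum, plus a fee for each record over the allowance."""
    overage = max(0, records - included_records)
    return monthly_minimum_cents + overage * per_record_fee_cents


# Hypothetical contract: $2,000 minimum covering 10,000 records,
# then $0.15 per additional record.
for volume in (8_000, 10_000, 14_000):
    cents = hybrid_invoice_cents(volume, 200_000, 10_000, 15)
    print(volume, f"${cents / 100:,.2f}")
```

Below the allowance the effective per-record cost rises as volume drops, which is the price paid for guaranteed capacity.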

Real talk: the cheapest vendor rarely delivers the best value. Low prices often indicate undertrained annotators, minimal quality controls, or inadequate security measures. The cost of fixing bad annotations and retraining models far exceeds the savings from budget vendors.

Managing Outsourced Annotation Projects

Successful outsourcing requires active management, not passive delegation. Clear communication channels between internal teams and vendor teams prevent misunderstandings that compound over thousands of records.

Guideline documentation deserves significant upfront investment. Comprehensive guidelines that address edge cases, provide examples, and explain the reasoning behind labeling decisions improve consistency dramatically.

Regular sync meetings keep projects on track. Weekly calls during active annotation phases allow teams to discuss challenging examples, refine guidelines, and address quality concerns before they affect large data volumes.

Scope reassessment should happen quarterly. Regular scope reviews keep annotation requirements aligned with evolving model needs.

Handling Guideline Changes Mid-Project

Annotation requirements evolve as teams learn more about their data and model behavior. The challenge lies in updating guidelines without invalidating already-completed work.

Version control for guidelines creates clear audit trails. Each guideline version gets tagged with a date and change summary. Annotated data includes metadata indicating which guideline version governed the annotation.
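One possible shape for this metadata is sketched below. The class names, version strings, and change summaries are hypothetical; the point is only that each annotation carries the guideline version it was made under, so work done under older rules can be found later.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class GuidelineVersion:
    version: str    # e.g. "1.1"
    released: date
    summary: str    # short description of what changed


@dataclass
class Annotation:
    item_id: str
    label: str
    guideline_version: str  # version in force when the label was assigned


def annotated_under_older_version(annotations, current_version):
    """Return ids of annotations made under any version other than the current one."""
    return [a.item_id for a in annotations
            if a.guideline_version != current_version]


# Hypothetical guideline history and annotations.
history = [
    GuidelineVersion("1.0", date(2025, 1, 6), "Initial sentiment guidelines"),
    GuidelineVersion("1.1", date(2025, 2, 3), "Sarcasm is now labeled negative"),
]
annotations = [
    Annotation("doc-1", "positive", "1.0"),
    Annotation("doc-2", "negative", "1.1"),
    Annotation("doc-3", "neutral", "1.0"),
]

current = history[-1].version
print(annotated_under_older_version(annotations, current))
```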

Retroactive correction depends on the scope of changes. Minor clarifications might not warrant re-annotation. Major category redefinitions often require revisiting completed work, at least for a validation sample.

Industries Leveraging Annotation Outsourcing

Healthcare applications rely heavily on annotated text for clinical decision support, medical coding automation, and patient record analysis. HIPAA-compliant vendors enable healthcare organizations to outsource while maintaining regulatory compliance.

Financial services use annotation for sentiment analysis of market communications, automated document processing, and fraud detection systems. Annotators label transaction descriptions, analyst reports, and customer communications to train models that identify patterns and anomalies.

E-commerce platforms need annotation for product categorization, review sentiment analysis, and customer service automation. Properly labeled product data improves search relevance and recommendation engines.

Legal tech applications include contract analysis, case law research automation, and e-discovery. Legal domain expertise matters enormously—annotators must understand legal terminology, document structures, and citation formats.

Common Pitfalls and How to Avoid Them

Insufficient guideline detail creates the most common quality problems. Annotators facing ambiguous examples make inconsistent decisions without clear guidance. The solution involves comprehensive guidelines developed iteratively with annotator feedback.

Inadequate quality monitoring allows errors to propagate. Teams that only review final deliverables miss opportunities to correct systematic problems early. Continuous quality sampling catches issues when they're still manageable.

Poor communication between client teams and annotation teams leads to misaligned expectations. Regular check-ins and accessible escalation paths prevent small misunderstandings from becoming project-derailing problems.

Vendor lock-in happens when proprietary tools or formats make switching vendors difficult. Insisting on standard data formats and maintaining independent copies of annotation guidelines preserves flexibility.
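As one example of a tool-neutral format, annotations can be exchanged as JSON Lines: one JSON object per line, readable with any standard JSON library. The sketch below is an assumption about what such an export might look like, and the field names are illustrative.

```python
import json


def to_jsonl(records):
    """Serialize records as JSON Lines (one JSON object per line)."""
    return "\n".join(
        json.dumps(rec, ensure_ascii=False, sort_keys=True) for rec in records
    )


def from_jsonl(payload):
    """Parse JSON Lines back into a list of dicts, skipping blank lines."""
    return [json.loads(line) for line in payload.splitlines() if line.strip()]


# Hypothetical export of two annotated records.
records = [
    {"id": "doc-1", "text": "Great service!", "label": "positive"},
    {"id": "doc-2", "text": "Café was closed.", "label": "negative"},
]

payload = to_jsonl(records)
print(payload)
assert from_jsonl(payload) == records  # round trip loses nothing
```

Asking a vendor to deliver in a plain format like this, alongside whatever their own platform produces, keeps the data usable if the relationship ends.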

Moving Forward with Annotation Outsourcing

Text annotation outsourcing transforms from a tactical necessity into a strategic advantage when approached systematically. The right partner brings more than labor—they contribute expertise, established processes, and institutional knowledge that accelerates AI development.

Success requires active partnership rather than hands-off delegation. Clear guidelines, continuous quality monitoring, open communication, and regular scope reviews keep projects aligned with evolving model requirements.

Start with pilot projects that test vendor capabilities on real data. Evaluate both quantitative metrics and qualitative factors like responsiveness and problem-solving ability. Scale relationships gradually as trust and performance track records develop.

The annotation landscape continues evolving. New techniques combine human annotation with machine learning assistance. Quality frameworks become more sophisticated. Security standards tighten in response to data privacy concerns.

Organizations that master annotation outsourcing gain sustainable competitive advantages in AI development. They deploy models faster, achieve higher accuracy, and scale more efficiently than competitors struggling with in-house annotation challenges.

Ready to start outsourcing your text annotation projects? Define clear success metrics, document detailed guidelines, and evaluate vendors based on demonstrated expertise rather than cost alone. The investment in finding the right partner pays dividends throughout your AI roadmap.
