
Video annotation outsourcing involves hiring specialized third-party providers to label and tag objects, actions, and events in video data for training AI models. This guide covers when to outsource vs. build in-house, how to select the right annotation partner, key pricing models, quality assurance practices, and common challenges teams face when scaling video annotation projects.

Building AI models that understand video requires massive amounts of labeled data. Most teams hit a wall around the same point—when annotation tasks grow beyond what internal engineers can handle without sacrificing their actual product work.

That's when outsourcing becomes less of a nice-to-have and more of a strategic necessity. But the decision to outsource video annotation isn't just about cost savings. It's about maintaining quality at scale, managing complex workflows, and finding partners who understand the nuances of your specific computer vision tasks.

This guide walks through everything teams need to know about video annotation outsourcing: the fundamentals, when it makes sense, how to evaluate providers, and what to watch for as projects scale.

What Video Annotation Actually Involves

Video annotation is the process of labeling objects, actions, events, and attributes within video frames to create training data for machine learning models. Unlike static image annotation, video introduces temporal dimensions—tracking how objects move, interact, and change across time.

The process breaks down video into individual frames, then applies various annotation techniques depending on the use case. For autonomous vehicle training, that might mean drawing bounding boxes around pedestrians, vehicles, and traffic signs across thousands of sequential frames. For action recognition models, it involves tagging specific behaviors or events as they occur.

Here's the thing, though: video annotation is significantly more resource-intensive than image annotation. A minute of video at 30 frames per second contains 1,800 individual frames, and the same minute at 60 fps contains 3,600. Annotating five minutes of footage at 30 fps means 9,000 frames requiring human attention.
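The arithmetic is simple enough to script when sizing a project. A minimal sketch, assuming every clip has a constant frame rate (variable-frame-rate footage would need per-file metadata); `frame_count` is a hypothetical helper name:

```python
def frame_count(duration_seconds: float, fps: float) -> int:
    """Frames in a clip, assuming a constant frame rate."""
    return round(duration_seconds * fps)


# Total frames across a batch of clips (durations in seconds), all at 30 fps.
clip_durations = [60, 60, 300]
total = sum(frame_count(d, fps=30) for d in clip_durations)
print(total)  # 12600
```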

Common Video Annotation Techniques

Different machine learning applications require different annotation approaches. Bounding boxes remain the most common method, drawing rectangles around objects of interest in each frame. They're fast to draw, cheap to store and process, and work well for object detection tasks.

Polygon annotation offers more precision by tracing irregular object boundaries. This matters for applications needing exact shape information, like medical imaging or detailed scene segmentation.

Semantic segmentation takes it further, classifying every pixel in a frame. Each pixel gets assigned to a specific category: road, sidewalk, building, sky. It's the most labor-intensive of these techniques but necessary for applications requiring complete scene understanding.

Keypoint annotation marks specific points of interest, often used for pose estimation or facial landmark detection. Think of tracking joint positions for human motion analysis or monitoring facial expressions.

Then there's temporal annotation—tagging events or activities that occur across time ranges rather than in single frames. This powers applications like sports analytics, surveillance systems, and content moderation.
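One way to see how these techniques differ is to look at what each one stores. The sketch below uses hypothetical Python dataclasses, not any particular tool's schema; real export formats such as COCO define their own fields.

```python
from dataclasses import dataclass


@dataclass
class BoundingBox:
    frame: int
    label: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def area(self) -> float:
        return max(0.0, self.x_max - self.x_min) * max(0.0, self.y_max - self.y_min)


@dataclass
class Polygon:
    frame: int
    label: str
    points: list[tuple[float, float]]  # vertices in drawing order

    def area(self) -> float:
        # Shoelace formula; assumes a simple (non-self-intersecting) polygon.
        total = 0.0
        for (x1, y1), (x2, y2) in zip(self.points, self.points[1:] + self.points[:1]):
            total += x1 * y2 - x2 * y1
        return abs(total) / 2


@dataclass
class SegmentationMask:
    frame: int
    class_names: list[str]  # index -> class, e.g. ["road", "sidewalk", "sky"]
    mask: list[list[int]]   # one class index per pixel, row by row


@dataclass
class Keypoints:
    frame: int
    label: str
    points: dict[str, tuple[float, float]]  # e.g. {"left_knee": (x, y)}


@dataclass
class TemporalEvent:
    label: str
    start_frame: int
    end_frame: int  # inclusive

    def length(self) -> int:
        return self.end_frame - self.start_frame + 1
```

Boxes, polygons, masks, and keypoints all attach to a single frame, while a temporal event spans a range of frames. That is the practical difference between frame-level and temporal annotation.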

When Outsourcing Makes More Sense Than In-House Work

The decision to outsource video annotation comes down to resource allocation. Small pilot projects with a few hundred clips can run in-house with minimal disruption. But scaling challenges appear quickly.

A common rule of thumb: consider outsourcing when the team is labeling less than 25% of the data it collects, or when annotation consumes more than 30% of the AI budget.

Real talk: if engineers are spending afternoons drawing bounding boxes instead of improving model architecture, something's wrong with the resource allocation.

Cost Economics of Outsourcing

The cost argument for outsourcing looks straightforward but deserves careful analysis. Industry sources commonly cite 50 to 70% reductions in cost per annotation through outsourcing, though actual savings depend heavily on project complexity and provider selection.

In-house annotation means paying engineer salaries for repetitive labeling work. For teams in high-cost regions like the US or Western Europe, this quickly becomes expensive. One source noted that clients from the US and Western Europe reported savings of approximately 80% after outsourcing to providers in lower-cost regions.

But cost comparisons shouldn't stop at hourly rates. Factor in the time engineers spend developing annotation tools, managing workflows, handling quality control, and dealing with annotator turnover. Specialized providers already have this infrastructure built.
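A rough way to make that comparison concrete is to compute a fully loaded cost per labeled frame on each side. The sketch below is illustrative only: every rate and overhead figure is a hypothetical placeholder to replace with your own measurements, not market data.

```python
def in_house_cost_per_frame(hourly_cost: float, frames_per_hour: float,
                            overhead_hours_per_1k: float) -> float:
    """Engineer labeling time plus tooling, QA, and workflow overhead per frame."""
    labeling = hourly_cost / frames_per_hour
    overhead = hourly_cost * overhead_hours_per_1k / 1000
    return labeling + overhead


def outsourced_cost_per_frame(vendor_price: float, hourly_cost: float,
                              mgmt_hours_per_1k: float) -> float:
    """Vendor price plus internal time spent managing and spot-checking deliveries."""
    return vendor_price + hourly_cost * mgmt_hours_per_1k / 1000


# Hypothetical inputs; substitute your own rates and pilot measurements.
in_house = in_house_cost_per_frame(hourly_cost=100, frames_per_hour=50, overhead_hours_per_1k=5)
outsourced = outsourced_cost_per_frame(vendor_price=0.60, hourly_cost=100, mgmt_hours_per_1k=2)
savings = 1 - outsourced / in_house
print(f"in-house ${in_house:.2f}, outsourced ${outsourced:.2f}, savings {savings:.0%}")
# in-house $2.50, outsourced $0.80, savings 68%
```

Keeping internal management time in the outsourced figure matters: vendor price alone overstates the savings.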

Quality and Expertise Considerations

Professional annotation providers employ teams trained specifically for labeling work. They understand edge cases, maintain consistency across large datasets, and follow detailed annotation guidelines more reliably than engineers doing this work part-time.

Stanford research examining data annotation ethics notes that despite being indispensable to AI development, annotation work requires significant skill that's often underestimated. Quality annotation isn't just clicking boxes—it involves judgment calls, domain understanding, and attention to detail sustained over hours.

Providers also bring experience across multiple projects and industries. They've seen common annotation challenges before and developed solutions. That accumulated knowledge matters when unusual edge cases appear in data.

Speed and Scalability

Scaling in-house annotation means hiring, training, and managing additional annotators. That process takes weeks or months. Outsourcing providers can ramp teams up or down within days based on project needs.

One case study from an established video annotation provider describes assembling a complete annotation team in four days for an immunology research project. The team annotated more than 10,000 images at a pace of 5-6 videos per week and reached a 98% quality rate.

This flexibility matters for teams with variable annotation needs or tight deadlines. Projects don't wait for hiring pipelines to complete.

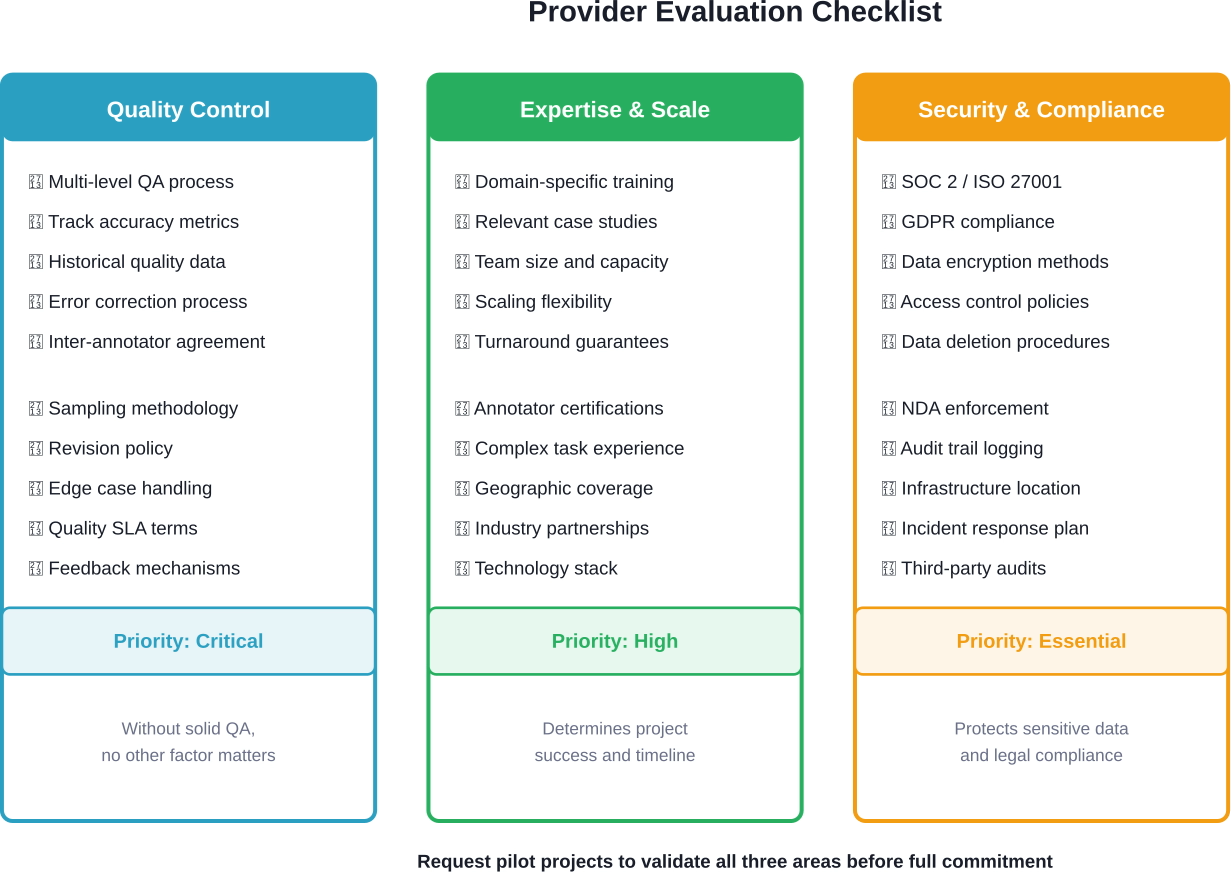

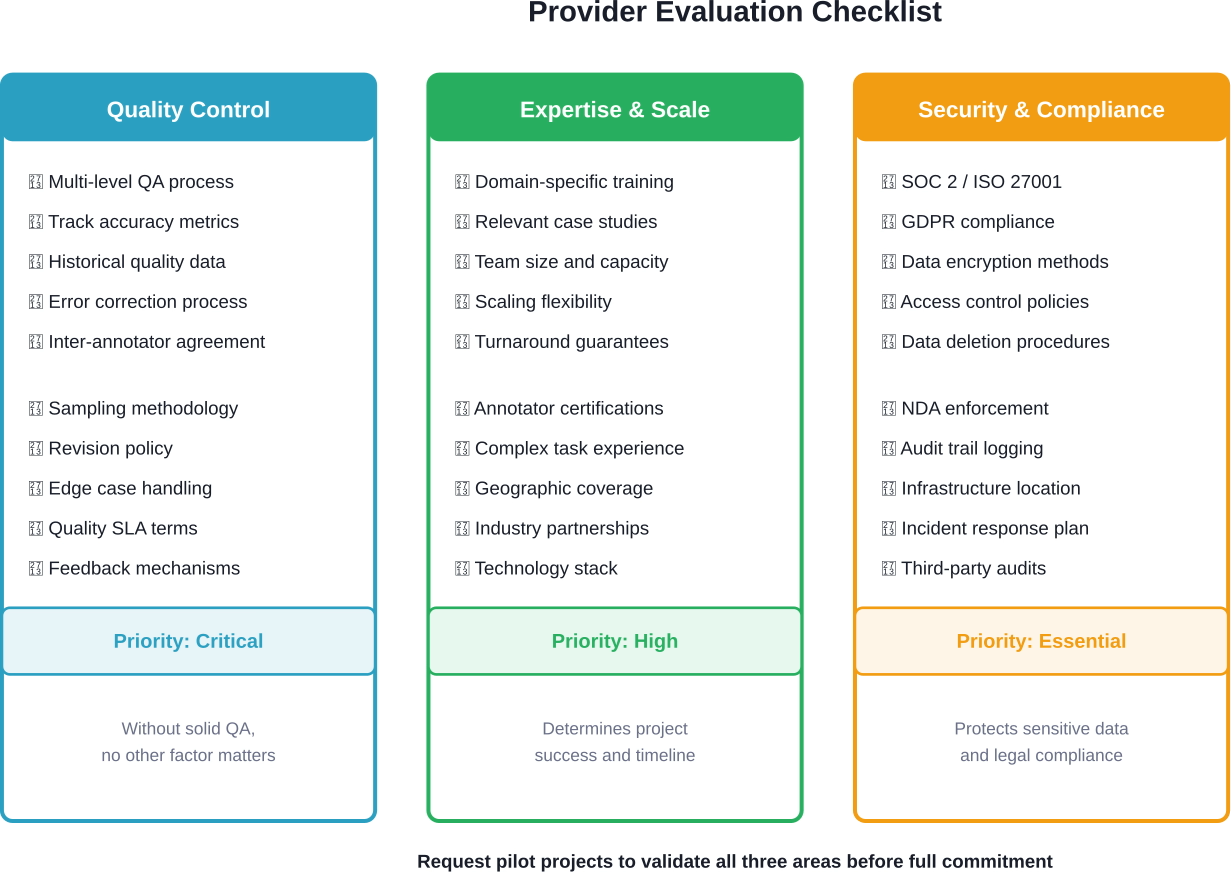

How to Select the Right Video Annotation Provider

Not all annotation providers deliver the same quality, reliability, or value. The market includes everything from crowdsourcing platforms to managed service providers to specialized AI training companies.

Choosing the wrong partner means wasted time, poor quality data, and potential project delays. Here's what to evaluate.

Annotation Quality Standards

Quality control processes separate professional providers from amateur operations. Ask potential providers detailed questions about their QA methodology.

Do they use multi-level review? A solid process typically includes initial annotation, peer review, and expert validation. Some providers implement sampling-based quality checks, while others review every annotated frame.

Request their quality metrics. Serious providers track annotation accuracy, inter-annotator agreement rates, and error types. They should willingly share historical quality data from similar projects.
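Inter-annotator agreement is often reported as Cohen's kappa, which corrects raw agreement for the agreement expected by chance. A minimal pure-Python sketch for two annotators assigning one categorical label per item (for example, a per-frame activity tag); providers may report other statistics instead, such as Krippendorff's alpha or box overlap (IoU).

```python
from collections import Counter


def cohens_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Chance-corrected agreement between two annotators on the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Probability both pick the same class by chance, given each annotator's label mix.
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / n**2
    if expected == 1.0:
        return 1.0  # both used one identical label throughout; kappa is undefined
    return (observed - expected) / (1 - expected)


a = ["walk", "walk", "run", "run", "walk", "idle"]
b = ["walk", "run", "run", "run", "walk", "idle"]
print(round(cohens_kappa(a, b), 3))  # 0.739
```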

IEEE research on quality control mechanisms emphasizes the importance of dynamic selection approaches rather than one-size-fits-all quality systems. The best providers adapt their QA intensity based on task complexity and project requirements.

Domain Expertise and Training

Generic annotation skills don't transfer perfectly across domains. Medical imaging annotation requires different knowledge than autonomous vehicle training data. Action recognition for sports differs from surveillance applications.

Ask about annotator training programs. How long do new annotators train before working on real projects? What ongoing training happens? How do they handle domain-specific annotation challenges?

Request case studies from similar projects. A provider claiming expertise in your domain should easily produce relevant examples.

Infrastructure and Tool Capabilities

The annotation platform matters more than teams sometimes realize. Clunky tools slow work down and increase error rates. Advanced tools with features like interpolation, automated tracking, and smart suggestions improve both speed and accuracy.

Ask about tool features specific to video annotation. Can annotators track objects automatically across frames? Does the platform support temporal annotations? What file formats and resolutions do they handle?

Integration capabilities deserve attention too. How will annotated data get delivered? Do they support your preferred formats? Can their systems integrate with your existing data pipelines?

Security and Compliance

Data security concerns kill deals regularly. If video data contains sensitive information—medical imagery, proprietary product designs, unreleased content—providers need robust security measures.

Verify their security credentials. A SOC 2 report, ISO 27001 certification, and documented GDPR compliance indicate serious security infrastructure. Ask about data handling procedures: where data is stored, who has access, how it's encrypted, and what happens to it after project completion.

Research from Brookings Institution and Stanford examining responsible AI development emphasizes that annotation outsourcing to global markets requires careful attention to data practices and worker treatment standards to avoid exacerbating inequalities.

Pricing Models and Contract Flexibility

Video annotation pricing varies widely based on complexity, volume, and turnaround requirements. Providers typically offer several pricing models.

Per-frame pricing charges for each annotated frame. It's straightforward but can get expensive for high-frame-rate video. Per-video pricing sets costs based on video length and annotation requirements. Per-hour pricing bills for annotator time spent.

Many providers offer volume discounts for large projects. Some require minimum commitments, while others operate on flexible, pay-as-you-go models.

Watch for hidden costs. Does pricing include quality assurance? Are revisions included or charged separately? What about project management overhead?

Communication and Project Management

Ongoing communication makes or breaks outsourcing relationships. The provider should assign a dedicated project manager who understands your requirements and responds promptly to issues.

Ask about communication workflows. How frequently will they provide updates? What channels do they use? How do they handle questions about ambiguous cases in data?

Look for providers who become extensions of internal teams rather than distant vendors. The best partnerships feel collaborative, with providers proactively suggesting improvements and raising concerns early.

Build a Reliable Video Annotation Outsourcing Team

Video annotation projects require consistency across frames, edge cases, and evolving guidelines. NeoWork provides dedicated remote annotation teams trained to follow structured QA processes. With a 91% annualized teammate retention rate and a 3.2% candidate selectivity rate, NeoWork ensures stable teams that understand your labeling standards long term. That stability improves dataset accuracy and reduces costly relabeling.

Ready to Strengthen Your Video Annotation Pipeline?

Talk with NeoWork to:

- build a dedicated video annotation team

- maintain consistent labeling standards

- scale production without sacrificing accuracy

👉 Connect with NeoWork to plan your video annotation outsourcing setup.

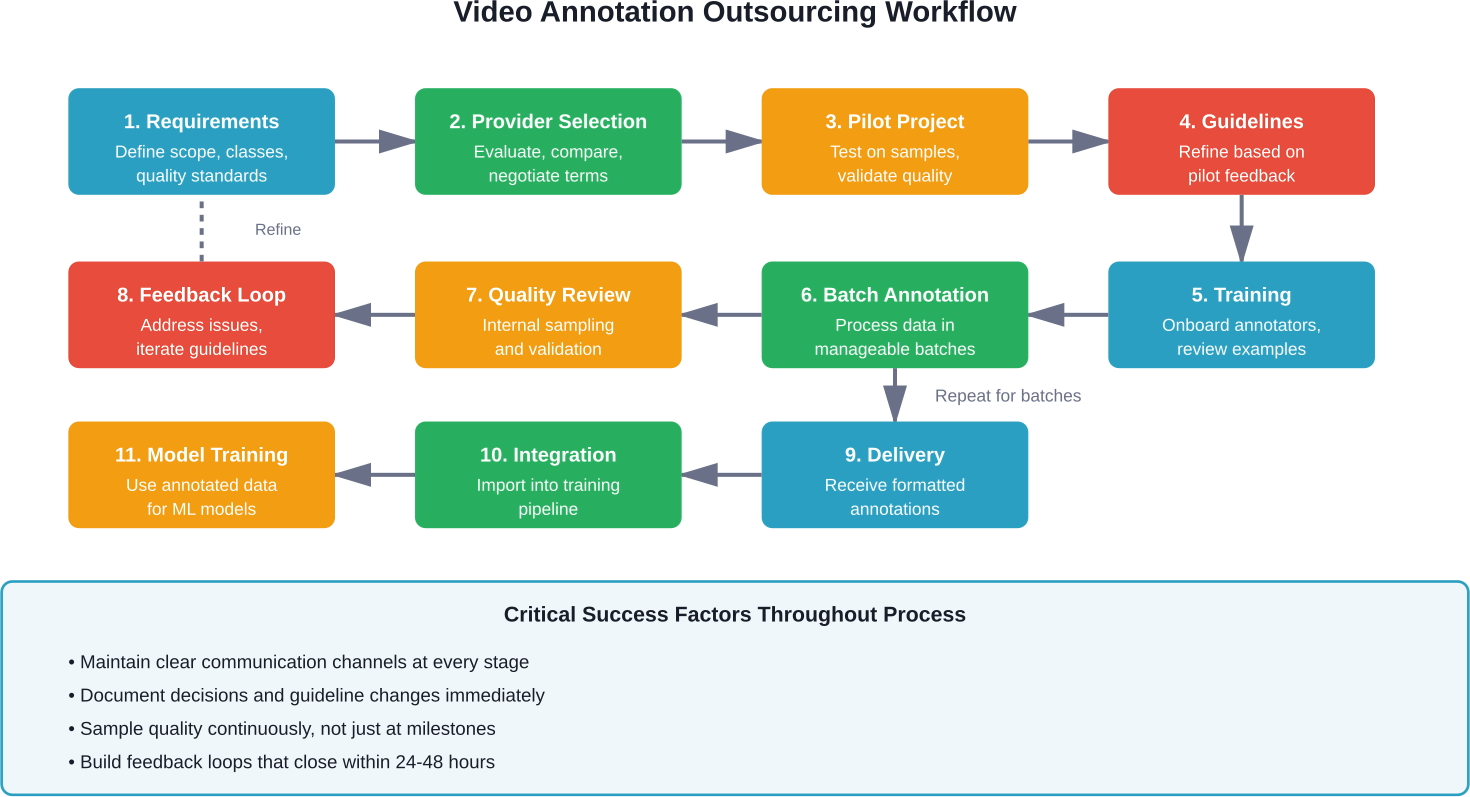

Managing the Outsourcing Relationship

Signing a contract doesn't end the work. Successful outsourcing requires active management, clear communication, and continuous refinement of processes.

Creating Effective Annotation Guidelines

Annotation quality depends heavily on guideline clarity. Vague instructions produce inconsistent results. Detailed, example-rich guidelines help annotators understand exactly what's needed.

Good guidelines include visual examples showing both correct and incorrect annotations. They address edge cases explicitly—what happens when objects are partially obscured? How should overlapping objects get handled? At what point does an object become too small to annotate?

Write guidelines assuming annotators have no context about the project. What seems obvious to domain experts often isn't clear to annotators seeing the data for the first time.

Update guidelines iteratively. As annotation work progresses, new edge cases appear. Document decisions about handling these cases and distribute updates to the annotation team promptly.

Setting Up Quality Monitoring

Trust but verify. Even with providers handling internal QA, maintain independent quality checks on delivered annotations.

Sample random batches regularly and review them internally. Track quality metrics over time. Are error rates increasing? Are certain annotation types consistently problematic? Address issues immediately rather than letting them compound.
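Spot checks can be scripted so the sample is reproducible and the error rate comes with a rough uncertainty range. A minimal sketch, assuming each delivered item has an ID and reviewers record how many sampled items fail; the interval uses the simple normal approximation, which is rough for small samples or error rates near zero. Function names are hypothetical.

```python
import math
import random


def sample_for_review(item_ids: list[str], sample_size: int, seed: int) -> list[str]:
    """Reproducible random sample of delivered items for internal review."""
    rng = random.Random(seed)  # fixed seed so the same audit sample can be re-drawn
    return rng.sample(item_ids, min(sample_size, len(item_ids)))


def error_rate_with_interval(failures: int, reviewed: int,
                             z: float = 1.96) -> tuple[float, float, float]:
    """Observed error rate with a rough normal-approximation ~95% interval."""
    p = failures / reviewed
    margin = z * math.sqrt(p * (1 - p) / reviewed)
    return p, max(0.0, p - margin), min(1.0, p + margin)


batch = [f"clip_{i:04d}" for i in range(2000)]
to_review = sample_for_review(batch, sample_size=200, seed=7)
# ...reviewers check each sampled item; suppose 12 of the 200 fail...
rate, low, high = error_rate_with_interval(failures=12, reviewed=200)
print(f"error rate {rate:.1%} (roughly {low:.1%} to {high:.1%})")
```

Logging the rate and interval per batch makes it easy to see whether a rise in errors is real or within sampling noise.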

Schedule regular quality review meetings with the provider. Discuss problematic cases, clarify guidelines, and align on quality expectations. Treat quality as a collaborative goal rather than a contractual battle.

Handling Revisions and Feedback Loops

Annotation projects rarely go perfectly on the first attempt. Build revision processes into workflows from the start.

When quality issues appear, provide specific feedback with examples. Don't just reject batches—explain what's wrong and how to fix it. The more specific feedback gets, the faster quality improves.

Track common error patterns. If the same mistakes keep appearing, the problem likely sits in guidelines or training rather than individual annotator performance.
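A simple tally by error type and object class makes recurring patterns visible. A sketch, assuming review findings are logged as `(error_type, label)` pairs; the category names here are hypothetical.

```python
from collections import Counter


def top_error_patterns(findings: list[tuple[str, str]],
                       n: int = 3) -> list[tuple[tuple[str, str], int]]:
    """Most frequent (error_type, label) pairs in a batch of review findings."""
    return Counter(findings).most_common(n)


findings = [
    ("missed_object", "cyclist"),
    ("loose_box", "car"),
    ("missed_object", "cyclist"),
    ("wrong_class", "scooter"),
    ("missed_object", "cyclist"),
    ("loose_box", "car"),
]
for (error_type, label), count in top_error_patterns(findings):
    print(f"{error_type:<14} {label:<8} {count}")
```

If one pair dominates, such as cyclists being missed again and again across annotators, that points to a guideline or training gap rather than one person's performance.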

Scaling Operations Gradually

Don't immediately scale to full production volumes. Start with pilot batches to validate quality and processes. Once pilot results meet expectations, gradually increase volume.

This staged approach catches problems when they're small and manageable. It's far easier to fix issues affecting 100 videos than issues affecting 10,000.

Monitor how quality changes as volume increases. Some providers maintain quality well at scale, while others struggle when stretched thin across multiple projects.

Common Challenges and How to Address Them

Video annotation outsourcing involves predictable challenges. Teams that anticipate these issues and plan accordingly get better results.

Annotation Consistency Across Large Teams

Multiple annotators working on the same project introduce variability. What one annotator considers worth labeling, another might skip. Boundary placement varies between individuals.

Establish clear consensus on ambiguous cases early. When annotators disagree about handling specific situations, document the official decision and communicate it team-wide.

Regular calibration sessions help. Have annotators label the same sample set periodically, then compare results and discuss discrepancies. This builds shared understanding of guidelines.
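For bounding boxes, one common way to compare calibration results is intersection over union (IoU) between two annotators' boxes for the same object. A minimal sketch, assuming boxes as `(x_min, y_min, x_max, y_max)` in pixels and objects already matched by ID; the 0.7 threshold is an arbitrary example, not a standard.

```python
Box = tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def compare_annotators(boxes_a: dict[str, Box], boxes_b: dict[str, Box],
                       threshold: float = 0.7) -> tuple[list[str], list[str]]:
    """Return (objects both labeled but with low overlap, objects only one annotator labeled)."""
    shared = boxes_a.keys() & boxes_b.keys()
    low_overlap = sorted(o for o in shared if iou(boxes_a[o], boxes_b[o]) < threshold)
    only_one = sorted(boxes_a.keys() ^ boxes_b.keys())
    return low_overlap, only_one


a = {"ped_1": (10, 10, 50, 90), "car_1": (100, 40, 200, 120)}
b = {"ped_1": (12, 10, 50, 92), "car_1": (100, 40, 160, 120), "sign_1": (300, 5, 320, 30)}
print(compare_annotators(a, b))  # (['car_1'], ['sign_1'])
```

Both lists are good agenda items for the calibration discussion: one shows where boundary placement diverges, the other where annotators disagree on what is worth labeling at all.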

Handling Domain-Specific Complexity

Generic annotation providers sometimes struggle with specialized domains. Medical imaging, rare industrial processes, or niche scientific applications require specific knowledge.

Invest time in thorough training for domain-specific projects. Provide background materials, domain glossaries, and access to subject matter experts for questions.

Consider hybrid approaches where domain experts handle the most complex annotations while general annotators handle straightforward cases. This balances cost with quality on specialized projects.

Data Privacy and Security Concerns

Sending sensitive video data to third parties creates legitimate security concerns. Medical records, proprietary designs, unreleased products, and personal information all require careful handling.

Use data anonymization where possible before sending to annotators. Remove identifying information, blur faces or license plates, or substitute synthetic data for particularly sensitive elements.
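Face and plate obscuring is normally done with an image or video library such as OpenCV, but the core idea fits in a few lines. The sketch below uses pixelation, a common alternative to blurring, on a grayscale frame stored as a list of pixel rows. It assumes the region to hide has already been located, by a detector or a person; `pixelate_region` is a hypothetical helper, and production pipelines would also handle color frames.

```python
def pixelate_region(frame: list[list[int]], x0: int, y0: int, x1: int, y1: int,
                    block: int = 8) -> None:
    """In place: replace pixels in [x0, x1) x [y0, y1) with each block x block tile's mean."""
    for by in range(y0, y1, block):
        for bx in range(x0, x1, block):
            ys = range(by, min(by + block, y1))
            xs = range(bx, min(bx + block, x1))
            tile = [frame[y][x] for y in ys for x in xs]
            mean = sum(tile) // len(tile)  # integer mean keeps 8-bit pixel values
            for y in ys:
                for x in xs:
                    frame[y][x] = mean


frame = [[r * 4 + c for c in range(4)] for r in range(4)]  # tiny 4x4 test frame
pixelate_region(frame, 0, 0, 4, 4, block=2)
print(frame)  # [[2, 2, 4, 4], [2, 2, 4, 4], [10, 10, 12, 12], [10, 10, 12, 12]]
```

Larger blocks remove more detail; the block size should be big enough that the face or plate can no longer be read.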

Verify provider security practices thoroughly. Request security audit reports, review their data handling procedures, and ensure contracts include strong confidentiality clauses with meaningful penalties for breaches.

For highly sensitive projects, consider on-premise annotation where provider teams work within secure facilities under direct oversight.

Managing Turnaround Time Expectations

Annotation speed matters but shouldn't compromise quality. Aggressive timelines often lead to rushed work and increased error rates.

Set realistic timelines based on annotation complexity and volume. A minute of dense urban driving footage with 30 object classes takes longer to annotate than simple scenes with few objects.

Build buffer time into project schedules. Annotation delays happen—unclear edge cases, guideline updates, quality issues requiring rework. Plans that assume perfect execution inevitably slip.
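A back-of-envelope turnaround estimate, with buffer built in, can be derived from throughput measured during a pilot. Every input below is a hypothetical placeholder; the structure is the point, not the numbers.

```python
import math


def estimate_days(frames: int, objects_per_frame: float, seconds_per_object: float,
                  annotators: int, hours_per_day: float, buffer: float = 0.2) -> int:
    """Working days to finish, padded by a buffer fraction for rework and guideline changes."""
    total_hours = frames * objects_per_frame * seconds_per_object / 3600
    days = total_hours / (annotators * hours_per_day)
    return math.ceil(days * (1 + buffer))


# 30 minutes of 30 fps footage (54,000 frames), same team of 20 annotators.
print(estimate_days(54_000, objects_per_frame=25, seconds_per_object=6,
                    annotators=20, hours_per_day=7))  # 20 (dense urban scenes)
print(estimate_days(54_000, objects_per_frame=3, seconds_per_object=6,
                    annotators=20, hours_per_day=7))  # 3 (sparse scenes)
```

Object density alone changes the schedule by almost an order of magnitude here, which is why timelines should be set from pilot data on representative footage.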

Cost Overruns from Scope Changes

Annotation requirements often evolve as projects progress. New object classes get added, annotation detail increases, or edge case handling becomes more complex.

Each scope change impacts pricing. What seemed like a minor tweak—adding one more object class—can significantly increase annotation time per frame.

Manage scope carefully. When requirements change, discuss cost implications with the provider before proceeding. Track how changes affect per-unit costs to avoid budget surprises.

Pricing Models and Budget Planning

Video annotation costs vary dramatically based on complexity, volume, quality requirements, and turnaround speed. Understanding pricing models helps with accurate budgeting.

Typical Pricing Structures

Providers structure pricing in several ways. Per-frame pricing charges a fixed rate for each annotated frame, with rates varying by annotation complexity. Simple bounding boxes cost less than detailed polygon segmentation.

Per-video pricing sets rates based on video duration and annotation requirements. This works well when annotation density stays consistent throughout videos.

Per-hour pricing bills for actual annotator time. This makes sense for complex projects where annotation time per frame varies significantly.

Some providers offer hybrid models combining base fees with per-unit charges. Others structure pricing around project milestones rather than individual units.
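Which structure comes out cheapest depends on the footage. A sketch comparing the three for a single batch, using hypothetical rates rather than quotes from any provider, and assuming every frame is annotated:

```python
def quote_batch(minutes: float, fps: int, rate_per_frame: float, rate_per_minute: float,
                est_hours: float, hourly_rate: float) -> dict[str, float]:
    """Cost of one batch under per-frame, per-video (per minute), and per-hour pricing."""
    frames = round(minutes * 60 * fps)
    return {
        "per-frame": frames * rate_per_frame,
        "per-video": minutes * rate_per_minute,
        "per-hour": est_hours * hourly_rate,
    }


quotes = quote_batch(minutes=30, fps=30, rate_per_frame=0.05, rate_per_minute=120.0,
                     est_hours=400, hourly_rate=8.0)
for model, cost in sorted(quotes.items(), key=lambda kv: kv[1]):
    print(f"{model:<10} ${cost:,.0f}")
```

Rerunning with `fps=60` doubles the per-frame figure while the per-video figure stays flat, which is why per-frame pricing can get expensive for high-frame-rate footage.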

Factors Affecting Cost

Annotation complexity drives the biggest cost differences. Drawing bounding boxes around large, clear objects costs far less than pixel-perfect segmentation of irregular shapes.

Object density matters. Frames with dozens of objects take longer to annotate than sparse scenes. Tracking objects across frames adds complexity compared to annotating individual frames independently.

Quality requirements affect pricing. Higher quality standards require more review layers, increasing costs. Projects tolerating some error rate cost less than those demanding near-perfect accuracy.

Turnaround time also affects price. Rush jobs typically cost 20-50% more than work on standard timelines, because providers must dedicate more resources to meet tight deadlines.

Budget Planning Strategies

Start with pilot projects to establish realistic per-unit costs before committing to large-scale annotation. Actual costs often differ from initial estimates once real data reveals complexity.

Build contingency into budgets. Set aside 15-20% to cover overruns from scope changes, quality revisions, and unexpected challenges.

Consider volume discounts for large projects. Many providers offer significantly reduced rates once volumes exceed certain thresholds.

Balance cost against quality needs. Not every frame requires perfect annotation. Focus quality investment on critical data while accepting lower precision for less important samples.
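Putting these planning steps together: start from the per-unit cost measured in the pilot, apply any volume discount and rush premium, then add contingency. A sketch; the 10% discount, the rush premiums, and the 20% contingency are hypothetical examples, with the rush and contingency values picked from the ranges given in this section rather than from any standard rate card.

```python
def project_budget(units: int, pilot_cost_per_unit: float, volume_discount: float,
                   rush_premium: float, contingency: float) -> float:
    """Base cost from pilot pricing, adjusted for discount and rush, plus contingency."""
    base = units * pilot_cost_per_unit
    adjusted = base * (1 - volume_discount) * (1 + rush_premium)
    return adjusted * (1 + contingency)


standard = project_budget(units=200_000, pilot_cost_per_unit=0.08,
                          volume_discount=0.10, rush_premium=0.0, contingency=0.20)
rushed = project_budget(units=200_000, pilot_cost_per_unit=0.08,
                        volume_discount=0.10, rush_premium=0.30, contingency=0.20)
print(f"standard ${standard:,.0f}, rushed ${rushed:,.0f}")  # standard $17,280, rushed $22,464
```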

Best Practices for Successful Outsourcing

Teams that consistently get good results from outsourced annotation follow common patterns. These practices minimize issues and maximize value.

Start With Crystal-Clear Requirements

Ambiguous requirements cause most annotation problems. Before engaging providers, document exactly what needs annotation, at what quality level, and with what specifications.

Create detailed annotation guidelines with visual examples. Define every object class precisely. Specify how to handle edge cases. The more clarity upfront, the fewer issues downstream.

Don't assume providers will interpret requirements the same way internal teams do. Make everything explicit.

Run Thorough Pilot Projects

Never commit to large-scale annotation without testing providers on representative samples first. Pilots reveal quality issues, communication gaps, and process inefficiencies while stakes remain low.

Use pilot results to refine guidelines, improve training, and optimize workflows before scaling. The time invested in pilots pays back many times over by preventing large-scale quality issues.

Maintain Regular Communication

Treat outsourcing partners as extensions of internal teams rather than distant vendors. Schedule regular check-ins to discuss progress, address issues, and align on priorities.

Create channels for annotators to ask questions about ambiguous cases. Quick clarifications prevent incorrect annotations from propagating through large datasets.

Implement Staged Quality Gates

Don't wait until project completion to check quality. Build quality gates at regular intervals—review samples after the first 5% of work, then 25%, then 50%.

Catching quality issues early allows corrections before massive rework becomes necessary. Staged gates also build confidence that projects stay on track.
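These staged gates can be expressed as a small check that runs whenever a delivery lands. A sketch, assuming gates at 5%, 25%, and 50% of total volume and a hypothetical minimum accuracy of 95% on each gate's review sample; both values are placeholders to match to your own contract.

```python
GATES = (0.05, 0.25, 0.50)  # fractions of total volume at which to run a review


def gates_due(completed: int, total: int, passed: set[float]) -> list[float]:
    """Gates whose threshold has been reached but which have not been reviewed yet."""
    progress = completed / total
    return [g for g in GATES if progress >= g and g not in passed]


def gate_decision(sample_correct: int, sample_size: int, min_accuracy: float = 0.95) -> str:
    """Continue only if the gate's review sample meets the accuracy bar."""
    accuracy = sample_correct / sample_size
    return "continue" if accuracy >= min_accuracy else "pause and retrain"


print(gates_due(completed=2_600, total=10_000, passed={0.05}))  # [0.25]
print(gate_decision(188, 200))  # pause and retrain
```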

Document Everything

Keep detailed records of decisions, guideline changes, edge case resolutions, and quality issues. This documentation becomes invaluable when scaling projects or onboarding new team members.

Create knowledge bases that capture lessons learned. When similar projects arise later, teams can reference past decisions rather than solving the same problems repeatedly.

Build Long-Term Partnerships

Switching providers frequently means constant re-training and quality inconsistency. Finding a reliable provider and building a long-term relationship pays dividends.

Providers who work with teams repeatedly understand requirements better, produce higher quality work, and become genuinely invested in project success.

Tools and Technologies for Video Annotation

The annotation platform significantly impacts efficiency and quality. Modern tools offer features that dramatically speed up video annotation while reducing errors.

Key Platform Capabilities

Interpolation features fill in object positions between keyframes. Annotators mark an object's position in frame 1 and frame 30, and the tool interpolates positions for frames 2-29. For objects that move smoothly, this can reduce manual work by 90% or more on tracking tasks.
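The simplest version of this is linear interpolation of box coordinates between two keyframes. A minimal sketch, assuming boxes as `(x_min, y_min, x_max, y_max)` and roughly constant motion between keyframes; commercial tools may use more sophisticated trackers, and annotators still correct frames where motion isn't linear.

```python
Box = tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def interpolate_boxes(frame_a: int, box_a: Box, frame_b: int, box_b: Box) -> dict[int, Box]:
    """Linearly interpolated boxes for every frame strictly between two keyframes."""
    if frame_b <= frame_a:
        raise ValueError("frame_b must come after frame_a")
    span = frame_b - frame_a
    result: dict[int, Box] = {}
    for f in range(frame_a + 1, frame_b):
        t = (f - frame_a) / span  # 0 at frame_a, 1 at frame_b
        result[f] = tuple(a + t * (b - a) for a, b in zip(box_a, box_b))
    return result


# Keyframes at frames 1 and 30; the object moves 58 px to the right.
boxes = interpolate_boxes(1, (100, 50, 140, 130), 30, (158, 50, 198, 130))
print(len(boxes))  # 28
```

Two hand-drawn keyframes yield 28 generated boxes here, which is where the savings on smooth tracks come from.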

Auto-segmentation uses machine learning to suggest object boundaries. Annotators refine suggestions rather than drawing from scratch, significantly speeding polygon and segmentation work.

Collaborative features allow multiple annotators to work on different sections of the same video simultaneously. Built-in communication tools help teams resolve ambiguous cases quickly.

Version control tracks annotation changes over time. When guidelines evolve or errors get discovered, teams can identify which annotations need updating.

Export flexibility matters for integration with training pipelines. Good tools support multiple export formats, such as COCO, Pascal VOC, YOLO, and custom JSON schemas.
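Format conversion is a common integration chore. COCO stores a box as `[x_min, y_min, width, height]` in pixels, while YOLO's label files store a class index followed by the box center and size, normalized by image width and height. A minimal sketch of that conversion for a single box, with class-index mapping and file I/O left out; the function names are hypothetical.

```python
def coco_to_yolo(bbox: list[float], img_w: int, img_h: int) -> tuple[float, float, float, float]:
    """COCO [x_min, y_min, w, h] in pixels -> YOLO (x_center, y_center, w, h) in 0-1 units."""
    x, y, w, h = bbox
    return ((x + w / 2) / img_w, (y + h / 2) / img_h, w / img_w, h / img_h)


def yolo_to_coco(box: tuple[float, float, float, float], img_w: int, img_h: int) -> list[float]:
    """Inverse of coco_to_yolo."""
    xc, yc, w, h = box
    w_px, h_px = w * img_w, h * img_h
    return [xc * img_w - w_px / 2, yc * img_h - h_px / 2, w_px, h_px]


def yolo_line(class_index: int, box: tuple[float, float, float, float]) -> str:
    """One line of a YOLO label file."""
    return f"{class_index} " + " ".join(f"{v:.6f}" for v in box)


box = coco_to_yolo([640, 360, 128, 72], img_w=1280, img_h=720)
print(yolo_line(0, box))  # 0 0.550000 0.550000 0.100000 0.100000
```

A round-trip test like `yolo_to_coco(coco_to_yolo(...))` on a sample of delivered labels is a cheap way to catch coordinate-convention mistakes before they reach training.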

Cloud vs. On-Premise Solutions

Cloud-based annotation platforms offer accessibility and scalability. Annotators work from anywhere, and infrastructure scales automatically with team size.

On-premise solutions provide more control over data security. Video files never leave internal networks, addressing concerns about sensitive content.

Hybrid approaches are becoming common—annotation tools run in the cloud while video storage remains on-premise. Tools stream video data securely without requiring full uploads.

Automation and AI-Assisted Annotation

Pre-annotation using existing models speeds up human annotation. Models generate initial labels that humans review and correct. This works especially well when models achieve 70-80% accuracy—good enough to provide helpful starting points.

Active learning identifies which frames need human attention most. Rather than annotating every frame, systems flag frames where model confidence drops or where annotation will improve model performance most.
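A simple and widely used form of this is uncertainty sampling: send humans the frames where the model's top prediction is least confident. A sketch, assuming the model provides a per-frame top-class confidence score and the team has a fixed per-round annotation budget; frame indices and scores below are made up for illustration.

```python
def select_for_annotation(confidences: dict[int, float], budget: int) -> list[int]:
    """Least-confident frames first (ties broken by frame index), capped at the budget."""
    ranked = sorted(confidences, key=lambda f: (confidences[f], f))
    return ranked[:budget]


conf = {0: 0.97, 1: 0.41, 2: 0.88, 3: 0.55, 4: 0.93, 5: 0.62}
print(select_for_annotation(conf, budget=2))  # [1, 3]
```

Frames outside the selection keep their pre-annotations and can go to lighter spot-check review instead of full labeling.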

Research on quality control mechanisms suggests dynamic approaches that adapt annotation intensity based on data characteristics deliver better efficiency-quality tradeoffs than fixed processes.

Industry-Specific Video Annotation Considerations

Different industries have unique annotation requirements that affect outsourcing decisions.

Autonomous Vehicles

Self-driving car training data requires extreme precision and completeness. Every object potentially matters for safety—vehicles, pedestrians, cyclists, animals, debris, traffic signs, lane markings.

Annotation density is high. A single frame might contain dozens of objects requiring precise boundaries. Temporal tracking becomes crucial for understanding object behavior and predicting movements.

Edge cases matter enormously. Rare situations—unusual weather conditions, uncommon traffic scenarios, edge case pedestrian behaviors—require careful annotation even though they appear infrequently.

Medical and Healthcare

Medical video annotation demands specialized knowledge. Annotators need training to identify anatomical structures, recognize pathologies, and understand clinical contexts.

Data sensitivity requires strict security measures. HIPAA compliance is non-negotiable for US healthcare data. Providers must demonstrate appropriate safeguards for patient privacy.

Research from MIT examining dermatology image datasets emphasizes the importance of transparency in medical annotation work, including careful consideration of annotator expertise and demographic representation.

Retail and E-Commerce

Retail applications often focus on action recognition—identifying customer behaviors, detecting shelf gaps, monitoring checkout processes.

Annotation requirements tend toward event tagging and temporal annotation rather than detailed object segmentation. Speed often matters more than extreme precision.

Security and Surveillance

Surveillance annotation focuses on detecting specific behaviors and events—unauthorized access, suspicious activities, safety violations.

Privacy concerns require careful handling. Face blurring and identity protection often happen before annotation to protect individual privacy while maintaining useful training data.

Sports and Entertainment

Sports analytics requires tracking player positions, ball location, and game events. Pose estimation and action recognition drive most applications.

Temporal precision matters—events need timestamps accurate to fractions of a second. Content moderation for entertainment platforms requires identifying specific content types according to detailed taxonomies.
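Because event labels are usually attached to frame indices, converting them to timestamps is simple arithmetic, and the frame rate sets the precision floor: at 30 fps, one frame lasts about 33 ms. A sketch, assuming a constant frame rate and zero-based frame indices:

```python
def frame_to_timestamp(frame: int, fps: float) -> str:
    """Zero-based frame index -> HH:MM:SS.mmm at a constant frame rate."""
    total_ms = round(frame * 1000 / fps)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    seconds, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{seconds:02d}.{ms:03d}"


print(frame_to_timestamp(4521, fps=30))  # 00:02:30.700
```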

Future Trends in Video Annotation Outsourcing

The annotation landscape continues evolving as technology advances and market dynamics shift.

Increasing Automation

AI-assisted annotation tools keep improving. Models handle more initial labeling work, with humans focusing on correction and quality assurance rather than annotation from scratch.

This shift changes the skill profile for annotation work. Quality verification and edge case handling become more important than pure labeling speed.

Specialized Provider Niches

The annotation market is fragmenting into specialized niches. Rather than general-purpose providers, specialized companies focus on specific domains—medical imaging, autonomous vehicles, agricultural monitoring.

Domain specialization enables higher quality through focused expertise but requires more careful provider matching to project needs.

Ethical Considerations

Growing attention focuses on annotation workforce conditions. Research from Stanford and Brookings examining responsible AI development highlights concerns about data annotation labor practices, particularly when outsourcing to developing regions.

Ethical sourcing considerations increasingly influence provider selection. Companies examine worker treatment, fair compensation, and working conditions alongside traditional quality and cost metrics.

Synthetic Data Alternatives

Synthetic video generation offers alternatives to manual annotation for some applications. Computer graphics generate training scenarios with automatic ground truth labels.

Synthetic approaches work best when real-world data collection is expensive or dangerous—extreme weather conditions, rare traffic scenarios, hazardous environments.

But synthetic data doesn't fully replace real-world annotation yet. Domain gap between synthetic and real data remains an active research challenge.

Making the Right Decision for Your Project

Video annotation outsourcing isn't one-size-fits-all. The right approach depends on project specifics, organizational capabilities, and strategic priorities.

Small pilot projects with a few hundred clips often make sense in-house. This builds internal understanding of annotation challenges and requirements before committing to external partnerships.

But as projects scale, outsourcing usually becomes the more practical option. The cost, speed, and quality benefits of specialized providers outweigh the coordination overhead for most teams.

Focus provider selection on quality first, cost second. Poor annotations waste far more money than they save. A provider charging 30% more but delivering 98% accuracy can easily cost less overall than a cheaper provider at 85% accuracy, once the cost of rework is counted.
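The arithmetic behind that comparison hinges on how expensive each error is to find and fix. A sketch, assuming a hypothetical base price of $0.10 per frame, that every error is eventually caught and corrected internally, and a hypothetical internal cost of $1.00 per correction:

```python
def effective_cost_per_frame(price: float, accuracy: float, fix_cost: float) -> float:
    """Vendor price plus the expected internal cost of fixing the erroneous fraction."""
    return price + (1 - accuracy) * fix_cost


def break_even_fix_cost(price_low: float, acc_low: float,
                        price_high: float, acc_high: float) -> float:
    """Fix cost above which the pricier, more accurate provider is cheaper overall."""
    return (price_high - price_low) / (acc_high - acc_low)


cheap = effective_cost_per_frame(price=0.10, accuracy=0.85, fix_cost=1.00)
premium = effective_cost_per_frame(price=0.13, accuracy=0.98, fix_cost=1.00)
print(f"cheap ${cheap:.3f}/frame, premium ${premium:.3f}/frame")
# cheap $0.250/frame, premium $0.150/frame
```

With these placeholder numbers the break-even fix cost is about $0.23. The model also ignores errors that slip into training undetected, which only strengthens the case for the more accurate provider.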

Start relationships with pilot projects. Test quality, communication, and processes before committing to large-scale work. The time invested in thorough vetting pays back many times over.

Build partnerships, not transactions. Long-term relationships with reliable providers deliver better results than constantly switching vendors chasing marginal cost savings.

Document everything. Capture decisions, lessons learned, and edge case resolutions. This knowledge becomes invaluable as projects scale and team members change.

Remember that annotation is just one piece of the machine learning pipeline. The best annotations in the world don't help if model architecture, training procedures, or evaluation methods have fundamental issues. Treat annotation as an important component of a larger system rather than the entire solution.

The AI and computer vision landscape continues evolving rapidly. Annotation requirements, tools, and best practices will keep changing. Stay informed about developments in the field. Maintain flexibility in approaches rather than locking into rigid processes.

Teams that approach video annotation outsourcing strategically—with clear requirements, careful provider selection, strong communication, and continuous quality monitoring—consistently achieve better results while building valuable partnerships that scale with their needs.


Video Annotation Outsourcing Guide 2026

Common Video Annotation Techniques

Different machine learning applications require different annotation approaches. Bounding boxes remain the most common method, drawing rectangles around objects of interest in each frame. They're fast to draw, cheap to store, and work well for object detection tasks.

Polygon annotation offers more precision by tracing irregular object boundaries. This matters for applications needing exact shape information, like medical imaging or detailed scene segmentation.

Semantic segmentation takes it further, classifying every pixel in a frame. Each pixel gets assigned to a specific category, such as road, sidewalk, building, or sky. It's slow and expensive to annotate but necessary for applications requiring complete scene understanding.

Keypoint annotation marks specific points of interest, often used for pose estimation or facial landmark detection. Think of tracking joint positions for human motion analysis or monitoring facial expressions.

Then there's temporal annotation—tagging events or activities that occur across time ranges rather than in single frames. This powers applications like sports analytics, surveillance systems, and content moderation.
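To make the differences between these techniques concrete, here is a minimal Python sketch of how each annotation type might be stored as a record. The class and field names are hypothetical, not the schema of any particular annotation tool; real export formats such as COCO define their own structures. The `track_id` field is what turns per-frame labels into video annotation: the same object keeps the same id from frame to frame.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Point = Tuple[float, float]  # (x, y) in pixels

# Hypothetical record types for illustration only.

@dataclass
class BoundingBox:            # object detection
    frame: int
    track_id: int             # same physical object keeps the same id across frames
    label: str
    x: float                  # top-left corner
    y: float
    width: float
    height: float

@dataclass
class Polygon:                # precise, irregular outlines
    frame: int
    track_id: int
    label: str
    vertices: List[Point]     # drawing order; last vertex connects back to the first

@dataclass
class SegmentationMask:       # one class id per pixel
    frame: int
    class_ids: List[List[int]]  # class_ids[row][col], e.g. 0=road, 1=sidewalk

@dataclass
class Keypoints:              # pose estimation, facial landmarks
    frame: int
    track_id: int
    points: Dict[str, Point]  # e.g. {"left_knee": (120.0, 340.5)}

@dataclass
class TemporalEvent:          # activity spanning a range of frames
    label: str
    start_frame: int          # inclusive
    end_frame: int            # inclusive

    def duration_seconds(self, fps: float) -> float:
        return (self.end_frame - self.start_frame + 1) / fps
```

For example, `TemporalEvent("goal_scored", 300, 389)` covers 90 frames, which is 3.0 seconds of 30 fps footage.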

When Outsourcing Makes More Sense Than In-House Work

The decision to outsource video annotation comes down to resource allocation. Small pilot projects with a few hundred clips can run in-house with minimal disruption. But scaling challenges appear quickly.

Teams typically consider outsourcing when they're labeling less than 25% of the data they collect or spending more than 30% of their AI budget on annotation work.
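The two thresholds can be expressed as a simple check. This is a minimal sketch that treats the 25% and 30% figures as rules of thumb rather than hard cutoffs; the function name and inputs are illustrative.

```python
def outsourcing_signals(items_collected: int, items_labeled: int,
                        ai_budget: float, annotation_spend: float) -> list:
    """Return which rule-of-thumb outsourcing thresholds are crossed (hypothetical helper)."""
    signals = []
    # Signal 1: most collected data is sitting unlabeled.
    if items_collected > 0 and items_labeled / items_collected < 0.25:
        signals.append("labeling under 25% of collected data")
    # Signal 2: annotation is eating a large share of the AI budget.
    if ai_budget > 0 and annotation_spend / ai_budget > 0.30:
        signals.append("annotation over 30% of AI budget")
    return signals
```

With 8,000 of 40,000 collected clips labeled and $120,000 of a $500,000 AI budget going to annotation, only the first signal fires: 20% of the data is labeled, and annotation takes 24% of the budget.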

Real talk: if engineers are spending afternoons drawing bounding boxes instead of improving model architecture, something's wrong with the resource allocation.

Cost Economics of Outsourcing

The cost argument for outsourcing appears straightforward but requires careful analysis. Many sources suggest teams can often reduce cost per annotation by 50 to 70% through outsourcing, though actual savings depend heavily on project complexity and provider selection.

In-house annotation means paying engineer salaries for repetitive labeling work. For teams in high-cost regions like the US or Western Europe, this quickly becomes expensive. One source noted that clients from the US and Western Europe reported savings of approximately 80% after outsourcing to providers in lower-cost regions.

But cost comparisons shouldn't stop at hourly rates. Factor in the time engineers spend developing annotation tools, managing workflows, handling quality control, and dealing with annotator turnover. Specialized providers already have this infrastructure built.
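A rough total-cost comparison helps keep those hidden items in view. The sketch below is illustrative only: every number in the usage example is a made-up placeholder, and the cost categories are assumptions that should be replaced with a team's own figures.

```python
from dataclasses import dataclass

@dataclass
class InHouseCosts:
    frames: int
    minutes_per_frame: float   # engineer labeling time per frame
    loaded_hourly_rate: float  # salary plus benefits and overhead
    tooling: float             # building and maintaining annotation tools
    qa_and_management: float   # review, workflow management, turnover

    def total(self) -> float:
        labor_hours = self.frames * self.minutes_per_frame / 60
        return labor_hours * self.loaded_hourly_rate + self.tooling + self.qa_and_management

@dataclass
class OutsourcedCosts:
    frames: int
    price_per_frame: float
    vendor_management: float   # internal time spent managing the provider

    def total(self) -> float:
        return self.frames * self.price_per_frame + self.vendor_management

def savings_fraction(in_house: float, outsourced: float) -> float:
    """Share of in-house cost saved by outsourcing (negative means outsourcing costs more)."""
    return 1 - outsourced / in_house

# Placeholder inputs, not benchmarks.
ih = InHouseCosts(frames=100_000, minutes_per_frame=0.5, loaded_hourly_rate=90,
                  tooling=20_000, qa_and_management=15_000)
out = OutsourcedCosts(frames=100_000, price_per_frame=0.30, vendor_management=8_000)
```

With these placeholders, in-house work totals about $110,000 (of which only $75,000 is labeling time) against $38,000 outsourced, a saving of roughly 65%. Dropping the tooling and QA lines from the in-house side would understate the gap considerably.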

Quality and Expertise Considerations

Professional annotation providers employ teams trained specifically for labeling work. They understand edge cases, maintain consistency across large datasets, and follow detailed annotation guidelines more reliably than engineers doing this work part-time.

Stanford research examining data annotation ethics notes that despite being indispensable to AI development, annotation work requires significant skill that's often underestimated. Quality annotation isn't just clicking boxes—it involves judgment calls, domain understanding, and attention to detail sustained over hours.

Providers also bring experience across multiple projects and industries. They've seen common annotation challenges before and developed solutions. That accumulated knowledge matters when unusual edge cases appear in data.

Speed and Scalability

Scaling in-house annotation means hiring, training, and managing additional annotators. That process takes weeks or months. Outsourcing providers can ramp teams up or down within days based on project needs.

One video annotation case study from an established provider showed they assembled an entire annotation team in four days and annotated 10,000+ images for immunology research, achieving a 98% quality rate with 5-6 videos annotated per week.

This flexibility matters for teams with variable annotation needs or tight deadlines. Projects don't wait for hiring pipelines to complete.

How to Select the Right Video Annotation Provider

Not all annotation providers deliver the same quality, reliability, or value. The market includes everything from crowdsourcing platforms to managed service providers to specialized AI training companies.

Choosing the wrong partner means wasted time, poor quality data, and potential project delays. Here's what to evaluate.

Annotation Quality Standards

Quality control processes separate professional providers from amateur operations. Ask potential providers detailed questions about their QA methodology.

Do they use multi-level review? A solid process typically includes initial annotation, peer review, and expert validation. Some providers implement sampling-based quality checks, while others review every annotated frame.

Request their quality metrics. Serious providers track annotation accuracy, inter-annotator agreement rates, and error types. They should willingly share historical quality data from similar projects.
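Inter-annotator agreement for bounding boxes is often measured with intersection over union (IoU): the overlap area divided by the combined area of two boxes. A minimal sketch, assuming boxes are stored as `(x, y, width, height)` and that both annotators' objects are already matched by `(frame, track_id)` keys; in practice, matching objects between annotators is its own step. The 0.5 IoU threshold is a common convention, not a universal standard.

```python
def iou(a, b):
    """Intersection over union of two (x, y, w, h) boxes."""
    ax2, ay2 = a[0] + a[2], a[1] + a[3]
    bx2, by2 = b[0] + b[2], b[1] + b[3]
    inter_w = max(0.0, min(ax2, bx2) - max(a[0], b[0]))
    inter_h = max(0.0, min(ay2, by2) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = a[2] * a[3] + b[2] * b[3] - inter
    return inter / union if union > 0 else 0.0

def box_agreement(annotator_a, annotator_b, threshold=0.5):
    """Compare two annotators' boxes keyed by (frame, track_id).

    Objects labeled by only one annotator count as disagreements.
    """
    shared = annotator_a.keys() & annotator_b.keys()
    all_keys = annotator_a.keys() | annotator_b.keys()
    ious = [iou(annotator_a[k], annotator_b[k]) for k in shared]
    matched = sum(1 for v in ious if v >= threshold)
    return {
        "mean_iou": sum(ious) / len(ious) if ious else 0.0,
        "agreement_rate": matched / len(all_keys) if all_keys else 1.0,
    }
```

Reporting both numbers is deliberate: mean IoU shows how tightly matched boxes line up, while the agreement rate also penalizes objects one annotator missed entirely.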

IEEE research on quality control mechanisms emphasizes the importance of dynamic selection approaches rather than one-size-fits-all quality systems. The best providers adapt their QA intensity based on task complexity and project requirements.

Domain Expertise and Training

Generic annotation skills don't transfer perfectly across domains. Medical imaging annotation requires different knowledge than autonomous vehicle training data. Action recognition for sports differs from surveillance applications.

Ask about annotator training programs. How long do new annotators train before working on real projects? What ongoing training happens? How do they handle domain-specific annotation challenges?

Request case studies from similar projects. A provider claiming expertise in your domain should easily produce relevant examples.

Infrastructure and Tool Capabilities

The annotation platform matters more than teams sometimes realize. Clunky tools slow work down and increase error rates. Advanced tools with features like interpolation, automated tracking, and smart suggestions improve both speed and accuracy.

Ask about tool features specific to video annotation. Can annotators track objects automatically across frames? Does the platform support temporal annotations? What file formats and resolutions do they handle?

Integration capabilities deserve attention too. How will annotated data get delivered? Do they support your preferred formats? Can their systems integrate with your existing data pipelines?

Security and Compliance

Data security concerns kill deals regularly. If video data contains sensitive information—medical imagery, proprietary product designs, unreleased content—providers need robust security measures.

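Verify their security credentials. A SOC 2 report and ISO 27001 certification indicate serious security infrastructure, and GDPR compliance is required when footage contains personal data covered by the regulation, such as identifiable people in the EU. Ask about data handling procedures: where data gets stored, who has access, how it's encrypted, and what happens after project completion.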

Research from Brookings Institution and Stanford examining responsible AI development emphasizes that annotation outsourcing to global markets requires careful attention to data practices and worker treatment standards to avoid exacerbating inequalities.

Pricing Models and Contract Flexibility

Video annotation pricing varies widely based on complexity, volume, and turnaround requirements. Providers typically offer several pricing models.

Per-frame pricing charges for each annotated frame. It's straightforward but can get expensive for high-frame-rate video. Per-video pricing sets costs based on video length and annotation requirements. Per-hour pricing bills for annotator time spent.

Many providers offer volume discounts for large projects. Some require minimum commitments, while others operate on flexible, pay-as-you-go models.

Watch for hidden costs. Does pricing include quality assurance? Are revisions included or charged separately? What about project management overhead?

Communication and Project Management

Ongoing communication makes or breaks outsourcing relationships. The provider should assign a dedicated project manager who understands your requirements and responds promptly to issues.

Ask about communication workflows. How frequently will they provide updates? What channels do they use? How do they handle questions about ambiguous cases in data?

Look for providers who become extensions of internal teams rather than distant vendors. The best partnerships feel collaborative, with providers proactively suggesting improvements and raising concerns early.

Build a Reliable Video Annotation Outsourcing Team

Video annotation projects require consistency across frames, edge cases, and evolving guidelines. NeoWork provides dedicated remote annotation teams trained to follow structured QA processes. With a 91% annualized teammate retention rate and a 3.2% candidate selectivity rate, NeoWork ensures stable teams that understand your labeling standards long term. That stability improves dataset accuracy and reduces costly relabeling.

Managing the Outsourcing Relationship

Signing a contract doesn't end the work. Successful outsourcing requires active management, clear communication, and continuous refinement of processes.

Creating Effective Annotation Guidelines

Annotation quality depends heavily on guideline clarity. Vague instructions produce inconsistent results. Detailed, example-rich guidelines help annotators understand exactly what's needed.

Good guidelines include visual examples showing both correct and incorrect annotations. They address edge cases explicitly—what happens when objects are partially obscured? How should overlapping objects get handled? At what point does an object become too small to annotate?

Write guidelines assuming annotators have no context about the project. What seems obvious to domain experts often isn't clear to annotators seeing the data for the first time.

Update guidelines iteratively. As annotation work progresses, new edge cases appear. Document decisions about handling these cases and distribute updates to the annotation team promptly.

Setting Up Quality Monitoring

Trust but verify. Even with providers handling internal QA, maintain independent quality checks on delivered annotations.

Sample random batches regularly and review them internally. Track quality metrics over time. Are error rates increasing? Are certain annotation types consistently problematic? Address issues immediately rather than letting them compound.
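A small sample rarely gives an exact error rate, so it helps to report a range. This sketch draws a reproducible random sample and computes a Wilson score interval for the true error rate; the function names and the 95% level (`z=1.96`) are illustrative choices.

```python
import math
import random

def sample_batch(item_ids, sample_size, seed=None):
    """Draw a reproducible random sample of delivered items for internal review."""
    items = list(item_ids)
    rng = random.Random(seed)
    return rng.sample(items, min(sample_size, len(items)))

def wilson_interval(errors, reviewed, z=1.96):
    """Approximate 95% confidence interval for the true error rate (Wilson score)."""
    if reviewed == 0:
        return (0.0, 1.0)
    p = errors / reviewed
    denom = 1 + z**2 / reviewed
    center = (p + z**2 / (2 * reviewed)) / denom
    half = z * math.sqrt(p * (1 - p) / reviewed + z**2 / (4 * reviewed**2)) / denom
    return (max(0.0, center - half), min(1.0, center + half))
```

Reviewing 200 sampled frames and finding 6 errors gives an observed rate of 3%, with an interval of roughly 1.4% to 6.4%. If the agreed limit were, say, 5%, that batch would not yet be clearly within tolerance, and a larger sample would be warranted before accepting it.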

Schedule regular quality review meetings with the provider. Discuss problematic cases, clarify guidelines, and align on quality expectations. Treat quality as a collaborative goal rather than a contractual battle.

Handling Revisions and Feedback Loops

Annotation projects rarely go perfectly on the first attempt. Build revision processes into workflows from the start.

When quality issues appear, provide specific feedback with examples. Don't just reject batches—explain what's wrong and how to fix it. The more specific feedback gets, the faster quality improves.

Track common error patterns. If the same mistakes keep appearing, the problem likely sits in guidelines or training rather than individual annotator performance.

Scaling Operations Gradually

Don't immediately scale to full production volumes. Start with pilot batches to validate quality and processes. Once pilot results meet expectations, gradually increase volume.

This staged approach catches problems when they're small and manageable. It's far easier to fix issues affecting 100 videos than issues affecting 10,000.

Monitor how quality changes as volume increases. Some providers maintain quality well at scale, while others struggle when stretched thin across multiple projects.

Common Challenges and How to Address Them

Video annotation outsourcing involves predictable challenges. Teams that anticipate these issues and plan accordingly get better results.

Annotation Consistency Across Large Teams

Multiple annotators working on the same project introduce variability. What one annotator considers worth labeling, another might skip. Boundary placement varies between individuals.

Establish clear consensus on ambiguous cases early. When annotators disagree about handling specific situations, document the official decision and communicate it team-wide.

Regular calibration sessions help. Have annotators label the same sample set periodically, then compare results and discuss discrepancies. This builds shared understanding of guidelines.
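Calibration results on categorical labels (object class, event type) can be summarized with Cohen's kappa, which corrects raw agreement for the agreement two annotators would reach by chance. A minimal sketch for two annotators labeling the same items; for more than two annotators, Fleiss' kappa or Krippendorff's alpha are common alternatives.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two annotators on the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    # Probability both annotators pick the same class by chance.
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if expected == 1:  # both used a single identical class throughout
        return 1.0
    return (observed - expected) / (1 - expected)

a = ["car", "car", "truck", "car", "truck", "car"]
b = ["car", "truck", "truck", "car", "truck", "car"]
```

Here the two annotators agree on 5 of 6 items (83%), but because half that agreement would be expected by chance, kappa is about 0.67. Tracking kappa across calibration sessions shows whether guideline updates are actually converging the team.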

Handling Domain-Specific Complexity

Generic annotation providers sometimes struggle with specialized domains. Medical imaging, rare industrial processes, or niche scientific applications require specific knowledge.

Invest time in thorough training for domain-specific projects. Provide background materials, domain glossaries, and access to subject matter experts for questions.

Consider hybrid approaches where domain experts handle the most complex annotations while general annotators handle straightforward cases. This balances cost with quality on specialized projects.

Data Privacy and Security Concerns

Sending sensitive video data to third parties creates legitimate security concerns. Medical records, proprietary designs, unreleased products, and personal information all require careful handling.

Use data anonymization where possible before sending to annotators. Remove identifying information, blur faces or license plates, or substitute synthetic data for particularly sensitive elements.
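Pixelation is one simple way to obscure faces or license plates before frames leave internal systems. The sketch below works on a grayscale frame stored as a list of rows, to stay dependency-free; the region coordinates are assumed to come from a separate face or plate detector, which is not shown. Production pipelines would typically use an image library, and should check that the chosen block size actually makes the region unrecognizable.

```python
def pixelate_region(frame, x, y, w, h, block=8):
    """Return a copy of a grayscale frame with one rectangle pixelated.

    frame: list of rows of ints (0-255).
    (x, y, w, h): region to obscure, e.g. a face box from a detector.
    Each block x block tile inside the region is replaced by its mean value.
    """
    out = [row[:] for row in frame]          # never modify the original frame
    rows, cols = len(frame), len(frame[0])
    for by in range(y, min(y + h, rows), block):
        for bx in range(x, min(x + w, cols), block):
            y_end = min(by + block, y + h, rows)
            x_end = min(bx + block, x + w, cols)
            cells = [frame[r][c] for r in range(by, y_end) for c in range(bx, x_end)]
            mean = sum(cells) // len(cells)
            for r in range(by, y_end):
                for c in range(bx, x_end):
                    out[r][c] = mean
    return out
```

Because the region keeps its position and rough shape, annotators can still label that a person or vehicle is present without seeing who or which one it is.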

Verify provider security practices thoroughly. Request security audit reports, review their data handling procedures, and ensure contracts include strong confidentiality clauses with meaningful penalties for breaches.

For highly sensitive projects, consider on-premise annotation where provider teams work within secure facilities under direct oversight.

Managing Turnaround Time Expectations

Annotation speed matters but shouldn't compromise quality. Aggressive timelines often lead to rushed work and increased error rates.

Set realistic timelines based on annotation complexity and volume. A minute of dense urban driving footage with 30 object classes takes longer to annotate than simple scenes with few objects.

Build buffer time into project schedules. Annotation delays happen—unclear edge cases, guideline updates, quality issues requiring rework. Plans that assume perfect execution inevitably slip.

Cost Overruns from Scope Changes

Annotation requirements often evolve as projects progress. New object classes get added, annotation detail increases, or edge case handling becomes more complex.

Each scope change impacts pricing. What seemed like a minor tweak—adding one more object class—can significantly increase annotation time per frame.

Manage scope carefully. When requirements change, discuss cost implications with the provider before proceeding. Track how changes affect per-unit costs to avoid budget surprises.

Pricing Models and Budget Planning

Video annotation costs vary dramatically based on complexity, volume, quality requirements, and turnaround speed. Understanding pricing models helps with accurate budgeting.

Typical Pricing Structures

Providers structure pricing in several ways. Per-frame pricing charges a fixed rate for each annotated frame, with rates varying by annotation complexity. Simple bounding boxes cost less than detailed polygon segmentation.

Per-video pricing sets rates based on video duration and annotation requirements. This works well when annotation density stays consistent throughout videos.

Per-hour pricing bills for actual annotator time. This makes sense for complex projects where annotation time per frame varies significantly.

Some providers offer hybrid models combining base fees with per-unit charges. Others structure pricing around project milestones rather than individual units.
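Comparing the models side by side for the same project shows how each one responds to frame rate and annotation effort. All rates in this sketch are hypothetical placeholders, not market prices; the `annotated_every_nth_frame` option models annotating keyframes only.

```python
from dataclasses import dataclass

@dataclass
class Project:
    videos: int
    seconds_per_video: float
    fps: float
    annotated_every_nth_frame: int = 1  # 1 = every frame; >1 = keyframes only

    @property
    def frames(self) -> int:
        per_video = int(self.seconds_per_video * self.fps) // self.annotated_every_nth_frame
        return self.videos * per_video

def per_frame_cost(p: Project, rate_per_frame: float) -> float:
    return p.frames * rate_per_frame

def per_video_cost(p: Project, rate_per_video_minute: float) -> float:
    return p.videos * (p.seconds_per_video / 60) * rate_per_video_minute

def per_hour_cost(p: Project, hourly_rate: float, seconds_per_frame: float) -> float:
    return p.frames * seconds_per_frame / 3600 * hourly_rate

def hybrid_cost(p: Project, base_fee: float, rate_per_frame: float) -> float:
    return base_fee + p.frames * rate_per_frame

p = Project(videos=50, seconds_per_video=120, fps=30)
```

For 50 two-minute videos at 30 fps (180,000 frames), hypothetical rates of $0.05 per frame, $40 per video minute, $8 per hour at 20 seconds per frame, and a $2,000 base plus $0.03 per frame give $9,000, $4,000, $8,000, and $7,400 respectively. Doubling the frame rate to 60 fps doubles the per-frame total but leaves the per-video total unchanged, which is why per-frame pricing gets expensive for high-frame-rate footage.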

Factors Affecting Cost

Annotation complexity drives the biggest cost differences. Drawing bounding boxes around large, clear objects costs far less than pixel-perfect segmentation of irregular shapes.

Object density matters. Frames with dozens of objects take longer to annotate than sparse scenes. Tracking objects across frames adds complexity compared to annotating individual frames independently.

Quality requirements affect pricing. Higher quality standards require more review layers, increasing costs. Projects tolerating some error rate cost less than those demanding near-perfect accuracy.

Turnaround time impacts price. Rush jobs typically cost 20-50% more than work on standard timelines, because providers must dedicate more resources to meet tight deadlines.

Budget Planning Strategies

Start with pilot projects to establish realistic per-unit costs before committing to large-scale annotation. Actual costs often differ from initial estimates once real data reveals complexity.

Build contingency into budgets. Plan for 15-20% cost overruns from scope changes, quality revisions, and unexpected challenges.
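Pilot-based unit costs, a rush premium in the 20-50% range, and a 15-20% contingency can be combined into one estimate. A minimal sketch; the order of adjustments (premium, then volume discount, then contingency) and the discount parameter are assumptions to adapt to an actual contract.

```python
def planned_budget(pilot_cost_per_unit, units, rush_premium=0.0,
                   volume_discount=0.0, contingency=0.15):
    """Budget estimate from pilot unit costs (hypothetical helper).

    rush_premium and volume_discount are fractions (0.30 = 30%);
    contingency is a reserve on top of the adjusted cost.
    """
    base = pilot_cost_per_unit * units
    adjusted = base * (1 + rush_premium) * (1 - volume_discount)
    reserve = adjusted * contingency
    return {"base": base, "adjusted": adjusted,
            "contingency": reserve, "total": adjusted + reserve}

budget = planned_budget(0.08, 500_000, rush_premium=0.30,
                        volume_discount=0.10, contingency=0.15)
```

A pilot cost of $0.08 per frame over 500,000 frames with a 30% rush premium, a 10% volume discount, and a 15% contingency gives a $40,000 base, $46,800 after adjustments, and a $53,820 total.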

Consider volume discounts for large projects. Many providers offer significantly reduced rates once volumes exceed certain thresholds.

Balance cost against quality needs. Not every frame requires perfect annotation. Focus quality investment on critical data while accepting lower precision for less important samples.

Best Practices for Successful Outsourcing

Teams that consistently get good results from outsourced annotation follow common patterns. These practices minimize issues and maximize value.

Start With Crystal-Clear Requirements

Ambiguous requirements cause most annotation problems. Before engaging providers, document exactly what needs annotation, at what quality level, and with what specifications.

Create detailed annotation guidelines with visual examples. Define every object class precisely. Specify how to handle edge cases. The more clarity upfront, the fewer issues downstream.

Don't assume providers will interpret requirements the same way internal teams do. Make everything explicit.

Run Thorough Pilot Projects

Never commit to large-scale annotation without testing providers on representative samples first. Pilots reveal quality issues, communication gaps, and process inefficiencies while stakes remain low.

Use pilot results to refine guidelines, improve training, and optimize workflows before scaling. The time invested in pilots pays back many times over by preventing large-scale quality issues.

Maintain Regular Communication

Treat outsourcing partners as extensions of internal teams rather than distant vendors. Schedule regular check-ins to discuss progress, address issues, and align on priorities.

Create channels for annotators to ask questions about ambiguous cases. Quick clarifications prevent incorrect annotations from propagating through large datasets.

Implement Staged Quality Gates

Don't wait until project completion to check quality. Build quality gates at regular intervals—review samples after the first 5% of work, then 25%, then 50%.
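The 5%, 25%, and 50% checkpoints can be tracked with a few lines of code. This sketch is illustrative: the function names are hypothetical, and the 3% maximum error rate is a placeholder that should come from the project's own quality agreement.

```python
GATES = (0.05, 0.25, 0.50)  # review points as fractions of total work

def gates_due(completed, total, already_reviewed):
    """Return gates that have been reached but not yet reviewed."""
    done = completed / total
    return [g for g in GATES if done >= g and g not in already_reviewed]

def gate_passes(errors, sampled, max_error_rate=0.03):
    """Hypothetical acceptance rule: sampled error rate at or under the limit."""
    return sampled > 0 and errors / sampled <= max_error_rate
```

At 2,600 of 10,000 items completed with the 5% gate already reviewed, `gates_due` returns `[0.25]`. A review sample of 200 items with 4 errors passes the 3% limit; 9 errors would not, and work would pause for feedback before continuing.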

Catching quality issues early allows corrections before massive rework becomes necessary. Staged gates also build confidence that projects stay on track.

Document Everything

Keep detailed records of decisions, guideline changes, edge case resolutions, and quality issues. This documentation becomes invaluable when scaling projects or onboarding new team members.

Create knowledge bases that capture lessons learned. When similar projects arise later, teams can reference past decisions rather than solving the same problems repeatedly.

Build Long-Term Partnerships

Switching providers frequently means constant re-training and quality inconsistency. Finding a reliable provider and building a long-term relationship pays dividends.

Providers who work with teams repeatedly understand requirements better, produce higher quality work, and become genuinely invested in project success.

Tools and Technologies for Video Annotation

The annotation platform significantly impacts efficiency and quality. Modern tools offer features that dramatically speed up video annotation while reducing errors.

Key Platform Capabilities

Interpolation features automatically track objects between keyframes. Annotators mark an object's position in frame 1 and frame 30, and the tool interpolates positions for frames 2-29. When objects move smoothly between keyframes, this can reduce manual work by 90% or more for tracking tasks.
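Linear interpolation is the simplest version of this idea. The sketch below assumes boxes stored as `(x, y, width, height)` and straight-line motion between keyframes; real tools may use object trackers instead, and annotators typically add keyframes wherever motion changes direction or speed.

```python
def interpolate_boxes(kf_a, kf_b):
    """Linearly interpolate (x, y, w, h) boxes between two keyframes.

    kf_a, kf_b: (frame_index, box) pairs.
    Returns {frame: box} for the frames strictly between the keyframes.
    """
    (fa, box_a), (fb, box_b) = kf_a, kf_b
    if fb <= fa:
        raise ValueError("second keyframe must come after the first")
    result = {}
    for f in range(fa + 1, fb):
        t = (f - fa) / (fb - fa)  # 0 at the first keyframe, 1 at the second
        result[f] = tuple(a + t * (b - a) for a, b in zip(box_a, box_b))
    return result
```

With keyframes at frames 1 and 30, the function fills in frames 2 through 29, so annotators draw 2 boxes instead of 30, about 93% fewer by hand.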

Auto-segmentation uses machine learning to suggest object boundaries. Annotators refine suggestions rather than drawing from scratch, significantly speeding polygon and segmentation work.

Collaborative features allow multiple annotators to work on different sections of the same video simultaneously. Built-in communication tools help teams resolve ambiguous cases quickly.

Version control tracks annotation changes over time. When guidelines evolve or errors get discovered, teams can identify which annotations need updating.

Export flexibility matters for integration with training pipelines. Quality tools support multiple format options—COCO, Pascal VOC, YOLO, custom JSON schemas.
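Format conversion is a frequent source of silent errors, since formats disagree on box conventions. A minimal sketch of two conversions, assuming COCO-style input boxes `[x_min, y_min, width, height]` in pixels: YOLO label files use a class id followed by the box center, width, and height normalized by image size, and Pascal VOC-style annotations use corner coordinates. Pixel-indexing details (for example 0- versus 1-based corners) vary between tools and are not handled here.

```python
def coco_to_yolo(bbox, img_w, img_h):
    """COCO [x_min, y_min, w, h] in pixels -> YOLO [x_c, y_c, w, h] normalized to 0..1."""
    x, y, w, h = bbox
    return [(x + w / 2) / img_w, (y + h / 2) / img_h, w / img_w, h / img_h]

def coco_to_corners(bbox):
    """COCO [x_min, y_min, w, h] -> [x_min, y_min, x_max, y_max] (VOC-style corners)."""
    x, y, w, h = bbox
    return [x, y, x + w, y + h]

def yolo_line(class_id, bbox, img_w, img_h):
    """One line of a YOLO label file: class id, then the normalized box."""
    values = coco_to_yolo(bbox, img_w, img_h)
    return " ".join([str(class_id)] + [f"{v:.6f}" for v in values])
```

A quick round-trip check on a few delivered frames, drawing the converted boxes back onto the images, catches most convention mismatches before they reach training.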

Cloud vs. On-Premise Solutions

Cloud-based annotation platforms offer accessibility and scalability. Annotators work from anywhere, and infrastructure scales automatically with team size.

On-premise solutions provide more control over data security. Video files never leave internal networks, addressing concerns about sensitive content.

Hybrid approaches are becoming common—annotation tools run in the cloud while video storage remains on-premise. Tools stream video data securely without requiring full uploads.

Automation and AI-Assisted Annotation

Pre-annotation using existing models speeds up human annotation. Models generate initial labels that humans review and correct. This works especially well when models achieve 70-80% accuracy—good enough to provide helpful starting points.

Active learning identifies which frames need human attention most. Rather than annotating every frame, systems flag frames where model confidence drops or where annotation will improve model performance most.
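Uncertainty sampling is one simple active learning strategy: send the frames where the model is least confident to human annotators first. A minimal sketch, assuming per-frame confidence scores are already available from a pre-annotation model; the `min_gap` parameter is an illustrative addition that skips near-duplicate neighboring frames.

```python
def frames_for_review(confidences, budget, min_gap=0):
    """Pick the lowest-confidence frames for human annotation.

    confidences: {frame_index: model confidence in 0..1}
    budget: maximum number of frames to send for review
    min_gap: skip frames within this many frames of one already chosen,
             since adjacent video frames are often near-duplicates
    """
    chosen = []
    for frame, _ in sorted(confidences.items(), key=lambda kv: kv[1]):
        if len(chosen) >= budget:
            break
        if all(abs(frame - c) > min_gap for c in chosen):
            chosen.append(frame)
    return sorted(chosen)

conf = {0: 0.95, 1: 0.40, 2: 0.42, 3: 0.90, 4: 0.55, 5: 0.30}
```

With a budget of 3 and `min_gap=1`, frames 5 and 1 are picked first; frames 2 and 4 are skipped as neighbors of already-chosen frames, so frame 3 fills the last slot.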

Research on quality control mechanisms suggests dynamic approaches that adapt annotation intensity based on data characteristics deliver better efficiency-quality tradeoffs than fixed processes.

Industry-Specific Video Annotation Considerations

Different industries have unique annotation requirements that affect outsourcing decisions.

Autonomous Vehicles

Self-driving car training data requires extreme precision and completeness. Every object potentially matters for safety—vehicles, pedestrians, cyclists, animals, debris, traffic signs, lane markings.

Annotation density is high. A single frame might contain dozens of objects requiring precise boundaries. Temporal tracking becomes crucial for understanding object behavior and predicting movements.

Edge cases matter enormously. Rare situations—unusual weather conditions, uncommon traffic scenarios, edge case pedestrian behaviors—require careful annotation even though they appear infrequently.

Medical and Healthcare

Medical video annotation demands specialized knowledge. Annotators need training to identify anatomical structures, recognize pathologies, and understand clinical contexts.

Data sensitivity requires strict security measures. HIPAA compliance is non-negotiable for US healthcare data. Providers must demonstrate appropriate safeguards for patient privacy.

Research from MIT examining dermatology image datasets emphasizes the importance of transparency in medical annotation work, including careful consideration of annotator expertise and demographic representation.

Retail and E-Commerce

Retail applications often focus on action recognition—identifying customer behaviors, detecting shelf gaps, monitoring checkout processes.

Annotation requirements tend toward event tagging and temporal annotation rather than detailed object segmentation. Speed often matters more than extreme precision.

Security and Surveillance

Surveillance annotation focuses on detecting specific behaviors and events—unauthorized access, suspicious activities, safety violations.

Privacy concerns require careful handling. Face blurring and identity protection often happen before annotation to protect individual privacy while maintaining useful training data.

Sports and Entertainment

Sports analytics requires tracking player positions, ball location, and game events. Pose estimation and action recognition drive most applications.

Temporal precision matters—events need timestamps accurate to fractions of a second. Content moderation for entertainment platforms requires identifying specific content types according to detailed taxonomies.

Future Trends in Video Annotation Outsourcing

The annotation landscape continues evolving as technology advances and market dynamics shift.

Increasing Automation

AI-assisted annotation tools keep improving. Models handle more initial labeling work, with humans focusing on correction and quality assurance rather than annotation from scratch.

This shift changes the skill profile for annotation work. Quality verification and edge case handling become more important than pure labeling speed.

Specialized Provider Niches

The annotation market is fragmenting into specialized niches. Rather than general-purpose providers, specialized companies focus on specific domains—medical imaging, autonomous vehicles, agricultural monitoring.

Domain specialization enables higher quality through focused expertise but requires more careful provider matching to project needs.

Ethical Considerations

Growing attention focuses on annotation workforce conditions. Research from Stanford and Brookings examining responsible AI development highlights concerns about data annotation labor practices, particularly when outsourcing to developing regions.

Ethical sourcing considerations increasingly influence provider selection. Companies examine worker treatment, fair compensation, and working conditions alongside traditional quality and cost metrics.

Synthetic Data Alternatives

Synthetic video generation offers alternatives to manual annotation for some applications. Computer graphics generate training scenarios with automatic ground truth labels.

Synthetic approaches work best when real-world data collection is expensive or dangerous—extreme weather conditions, rare traffic scenarios, hazardous environments.

But synthetic data doesn't fully replace real-world annotation yet. The domain gap between synthetic and real data remains an active research challenge.

Making the Right Decision for Your Project

Video annotation outsourcing isn't one-size-fits-all. The right approach depends on project specifics, organizational capabilities, and strategic priorities.

Small pilot projects with a few hundred clips often make sense in-house. This builds internal understanding of annotation challenges and requirements before committing to external partnerships.

But as projects scale, outsourcing usually becomes the more practical option. The cost, speed, and quality benefits of specialized providers outweigh the coordination overhead for most teams.

Focus provider selection on quality first, cost second. Poor annotations waste far more money than they save. A provider charging 30% more but delivering 98% accuracy can cost less overall than a cheap provider at 85% accuracy once the cost of finding and reworking the extra errors is counted.
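The quality-versus-price comparison above depends on what each bad label costs to find and fix. A minimal sketch that makes that assumption explicit: `rework_cost_per_error` is a hypothetical parameter covering review, relabeling, and any downstream cost, expressed in the same units as the per-label price. Setting the two effective costs equal gives the break-even point, r = (price_a - price_b) / (acc_a - acc_b).

```python
def effective_cost_per_label(price, accuracy, rework_cost_per_error):
    """Price plus the expected cost of finding and fixing bad labels."""
    return price + (1 - accuracy) * rework_cost_per_error

def break_even_rework_cost(price_a, acc_a, price_b, acc_b):
    """Rework cost per error at which two providers cost the same."""
    if acc_a == acc_b:
        raise ValueError("accuracies must differ")
    return (price_a - price_b) / (acc_a - acc_b)
```

With the cheaper provider's price set to 1.00 and the other at 1.30, the break-even rework cost is about 2.31 per error. If each error costs more than that to catch and fix (for example 3.00, giving 1.36 versus 1.45), the 98%-accuracy provider is cheaper overall; below it, the cheaper provider wins.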
